Learning to Inference Adaptively for Multimodal Large Language Models

Zhuoyan Xu, Khoi Duc Nguyen, Preeti Mukherjee, Saurabh Bagchi, Somali Chaterji, Yingyu Liang, Yin Li

2025-03-19

Summary

This paper presents a way for AI models that understand both images and text to adjust how much computation they use at runtime, so they stay usable when computer resources are limited.

What's the problem?

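Multimodal large language models are computationally expensive, which makes them hard to deploy on devices with limited resources. Existing efficiency methods also do not respond to changing runtime conditions, such as other programs competing for the same device.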

What's the solution?

The researchers created AdaLLaVA, an adaptive inference framework that learns to reconfigure the model's operations during inference, depending on the input it receives and the latency budget it is given.

Why does it matter?

This work matters because one model can trade accuracy for latency at runtime, which lets it be used in more places and on more devices, even when available resources change.

Abstract

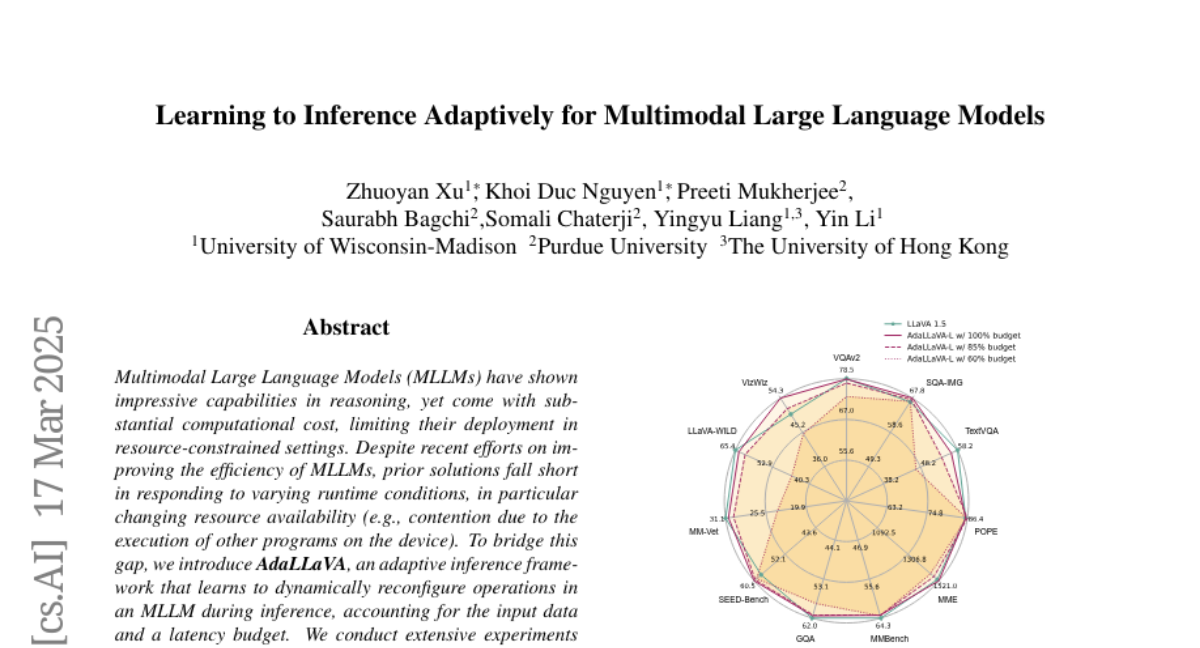

Multimodal Large Language Models (MLLMs) have shown impressive capabilities in reasoning, yet come with substantial computational cost, limiting their deployment in resource-constrained settings. Despite recent efforts to improve the efficiency of MLLMs, prior solutions fall short in responding to varying runtime conditions, in particular changing resource availability (e.g., contention due to the execution of other programs on the device). To bridge this gap, we introduce AdaLLaVA, an adaptive inference framework that learns to dynamically reconfigure operations in an MLLM during inference, accounting for the input data and a latency budget. We conduct extensive experiments across benchmarks involving question-answering, reasoning, and hallucination. Our results show that AdaLLaVA effectively adheres to the input latency budget, achieving varying accuracy and latency tradeoffs at runtime. Further, we demonstrate that AdaLLaVA adapts to both the input latency budget and the input content, can be integrated with token selection for enhanced efficiency, and generalizes across MLLMs. Our project webpage with code release is at https://zhuoyan-xu.github.io/ada-llava/.
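
To make the idea of reconfiguring operations under a latency budget more concrete, here is a toy sketch in Python. It is not AdaLLaVA's method: the abstract describes a learned scheduler, whereas this example swaps in a simple greedy heuristic. The `Block` type, the `select_blocks` function, and the per-block latency and score numbers are all hypothetical, chosen only to illustrate the accuracy and latency tradeoff.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """A hypothetical unit of model computation (e.g., one layer)."""
    name: str
    latency_ms: float  # assumed, pre-measured cost of running this block
    score: float       # assumed usefulness of this block for the current input


def select_blocks(blocks, budget_ms):
    """Greedy stand-in for a learned scheduler (illustrative assumption).

    Picks blocks with the best score per millisecond until the budget
    is used up, then returns the chosen names in their original order
    together with the total latency spent.
    """
    ranked = sorted(blocks, key=lambda b: b.score / b.latency_ms, reverse=True)
    chosen = set()
    spent = 0.0
    for block in ranked:
        if spent + block.latency_ms <= budget_ms:
            chosen.add(block.name)
            spent += block.latency_ms
    plan = [b.name for b in blocks if b.name in chosen]
    return plan, spent


# Made-up numbers: four blocks of equal cost but different usefulness.
blocks = [
    Block("layer0", 4.0, 0.9),
    Block("layer1", 4.0, 0.2),
    Block("layer2", 4.0, 0.7),
    Block("layer3", 4.0, 0.5),
]

tight_plan, tight_spent = select_blocks(blocks, budget_ms=10.0)
loose_plan, loose_spent = select_blocks(blocks, budget_ms=16.0)
print(tight_plan, tight_spent)
print(loose_plan, loose_spent)
```

With the tight budget only the two highest-scoring blocks fit, while the looser budget runs all four. In the framework described in the abstract, the choice would also depend on the input content and would be learned rather than hand-coded.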