Learning to Reason under Off-Policy Guidance

Jianhao Yan, Yafu Li, Zican Hu, Zhi Wang, Ganqu Cui, Xiaoye Qu, Yu Cheng, Yue Zhang

2025-04-22

Summary

This paper talks about LUFFY, a new method that helps AI models get better at reasoning and solving problems by learning from examples and also exploring new solutions, even when they haven’t been directly trained using reinforcement learning.

What's the problem?

The problem is that many AI models struggle to think through new or tricky situations if they haven’t been trained specifically for those cases, especially when they don’t get feedback from trial and error like in reinforcement learning. This limits their ability to generalize and solve problems they haven’t seen before.

What's the solution?

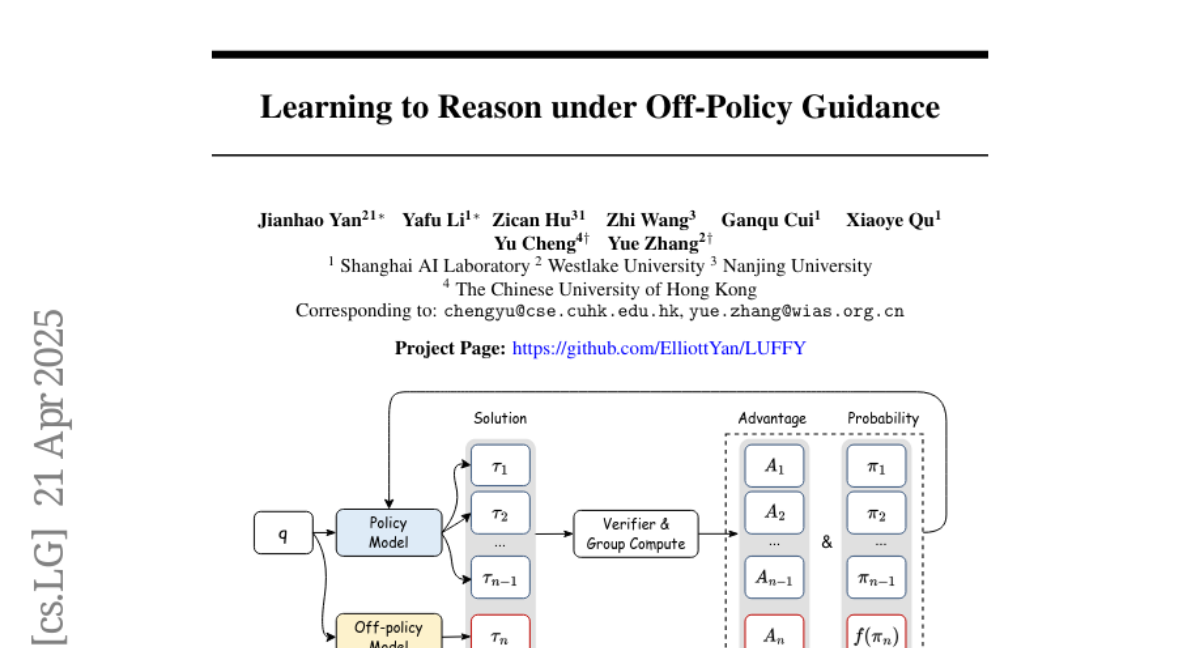

The researchers created LUFFY, which gives guidance to these models using examples from outside their own training, helping them imitate good solutions while also encouraging them to try out new ideas. This balance between copying and exploring helps the models get better at reasoning and handling unfamiliar problems.

Why it matters?

This matters because it means AI can become more flexible and reliable in the real world, solving a wider range of problems without needing tons of extra training or feedback, which is important for making smarter and more useful technology.

Abstract

LUFFY enhances zero-RL models with off-policy guidance, improving reasoning and generalization through balanced imitation and exploration.