LEMMA: Learning from Errors for MatheMatical Advancement in LLMs

Zhuoshi Pan, Yu Li, Honglin Lin, Qizhi Pei, Zinan Tang, Wei Wu, Chenlin Ming, H. Vicky Zhao, Conghui He, Lijun Wu

2025-03-25

Summary

This paper is about making AI better at math by teaching it to learn from its mistakes.

What's the problem?

AI models that solve math problems usually only learn from correct examples, ignoring the valuable information contained in incorrect solutions.

What's the solution?

The researchers developed a method called LEMMA that fine-tunes AI models on examples pairing an incorrect solution with a reflection step that leads to a correct one, so the model learns to notice where it went wrong and how to fix the error.

Why does it matter?

This work matters because a model trained this way can correct its own errors while generating a solution, without relying on an external critique model, and the authors report significant improvements over strong baselines on math problems.

Abstract

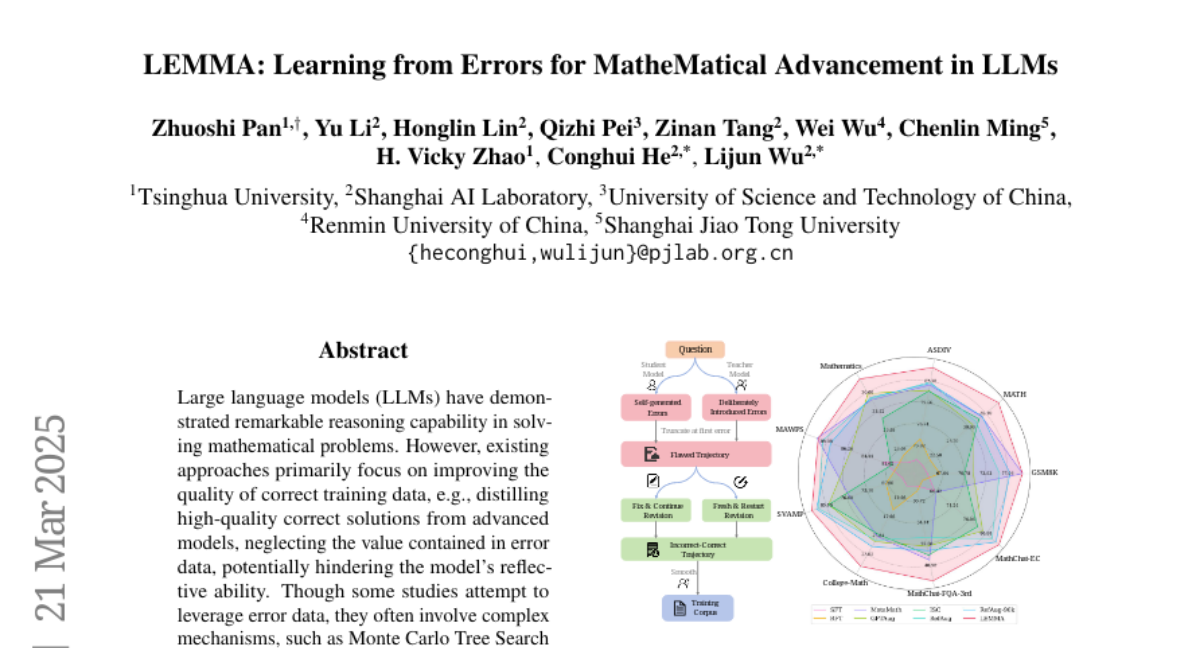

Large language models (LLMs) have demonstrated remarkable reasoning capability in solving mathematical problems. However, existing approaches primarily focus on improving the quality of correct training data, e.g., distilling high-quality correct solutions from advanced models, neglecting the value contained in error data, potentially hindering the model's reflective ability. Though some studies attempt to leverage error data, they often involve complex mechanisms, such as Monte Carlo Tree Search (MCTS) to explore error nodes. In this work, we propose to enhance LLMs' reasoning ability by Learning from Errors for Mathematical Advancement (LEMMA). LEMMA constructs data consisting of an incorrect solution with an erroneous step and a reflection connection to a correct solution for fine-tuning. Specifically, we systematically analyze the model-generated error types and introduce an error-type grounded mistake augmentation method to collect diverse and representative errors. Correct solutions are either from fixing the errors or generating a fresh start. Through a model-aware smooth reflection connection, the erroneous solution is transferred to the correct one. By fine-tuning on the constructed dataset, the model is able to self-correct errors autonomously within the generation process without relying on external critique models. Experimental results demonstrate that LEMMA achieves significant performance improvements over other strong baselines.
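The data format described in the abstract (an incorrect solution, a reflection connection, then a correct solution) can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `ErrorCorrectionSample` class, the `build_training_record` function, the field names, and the prompt/completion layout are hypothetical and are not taken from the paper's implementation.

```python
from dataclasses import dataclass


@dataclass
class ErrorCorrectionSample:
    """Hypothetical container for one error-then-correction example."""
    question: str
    wrong_steps: list[str]    # solution steps up to and including the erroneous step
    reflection: str           # connecting text that flags the mistake
    correct_steps: list[str]  # either a fix of the error or a fresh-start solution


def build_training_record(sample: ErrorCorrectionSample) -> dict[str, str]:
    """Join the wrong steps, the reflection, and the correct steps into one target.

    The model is fine-tuned to produce the whole trajectory, so that it learns
    to recover from an error inside a single generation.
    """
    target_lines = [*sample.wrong_steps, sample.reflection, *sample.correct_steps]
    return {"prompt": sample.question, "completion": "\n".join(target_lines)}


# Illustrative example with a calculation error in the second step.
example = ErrorCorrectionSample(
    question="What is 12 * 15?",
    wrong_steps=["12 * 15 = 12 * 10 + 12 * 5", "= 120 + 50 = 170"],
    reflection="Wait, 12 * 5 is 60, not 50. Let me redo that step.",
    correct_steps=["= 120 + 60 = 180", "The answer is 180."],
)
record = build_training_record(example)
print(record["completion"])
```

In this sketch the reflection is a fixed string; the abstract describes a "model-aware smooth reflection connection", which suggests that the connecting text is adapted to the model being trained rather than hard-coded.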