Less-to-More Generalization: Unlocking More Controllability by In-Context Generation

Shaojin Wu, Mengqi Huang, Wenxu Wu, Yufeng Cheng, Fei Ding, Qian He

2025-04-09

Summary

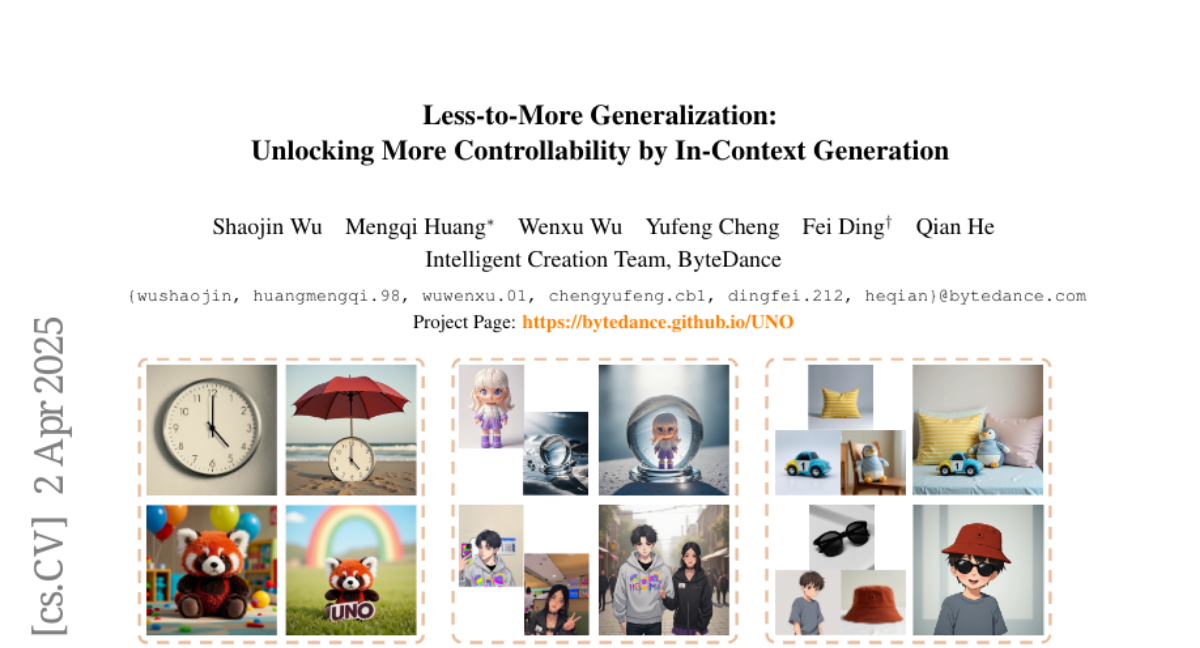

This paper introduces UNO, an AI model that generates images containing multiple custom subjects (such as a scene with your dog and your favorite toy) while keeping each subject consistent with its reference and the overall result realistic.

What's the problem?

Current AI image tools struggle to handle multiple custom objects at once, often mixing up details or needing separate setups for each item, which makes creating complex scenes difficult.

What's the solution?

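UNO starts from a text-to-image model and is trained in stages on AI-generated paired examples in which the same subjects appear consistently across images. It also uses a position-encoding scheme, which the authors call universal rotary position embedding, to keep track of each reference item’s details.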

Why does it matter?

This makes it easier for designers and artists to create detailed, personalized images with multiple custom elements, like ads featuring specific products or fantasy scenes with unique characters.

Abstract

Although subject-driven generation has been extensively explored in image generation due to its wide applications, it still has challenges in data scalability and subject expansibility. For the first challenge, moving from curating single-subject datasets to multiple-subject ones and scaling them is particularly difficult. For the second, most recent methods center on single-subject generation, making it hard to apply when dealing with multi-subject scenarios. In this study, we propose a highly-consistent data synthesis pipeline to tackle this challenge. This pipeline harnesses the intrinsic in-context generation capabilities of diffusion transformers and generates high-consistency multi-subject paired data. Additionally, we introduce UNO, which consists of progressive cross-modal alignment and universal rotary position embedding. It is a multi-image conditioned subject-to-image model iteratively trained from a text-to-image model. Extensive experiments show that our method can achieve high consistency while ensuring controllability in both single-subject and multi-subject driven generation.