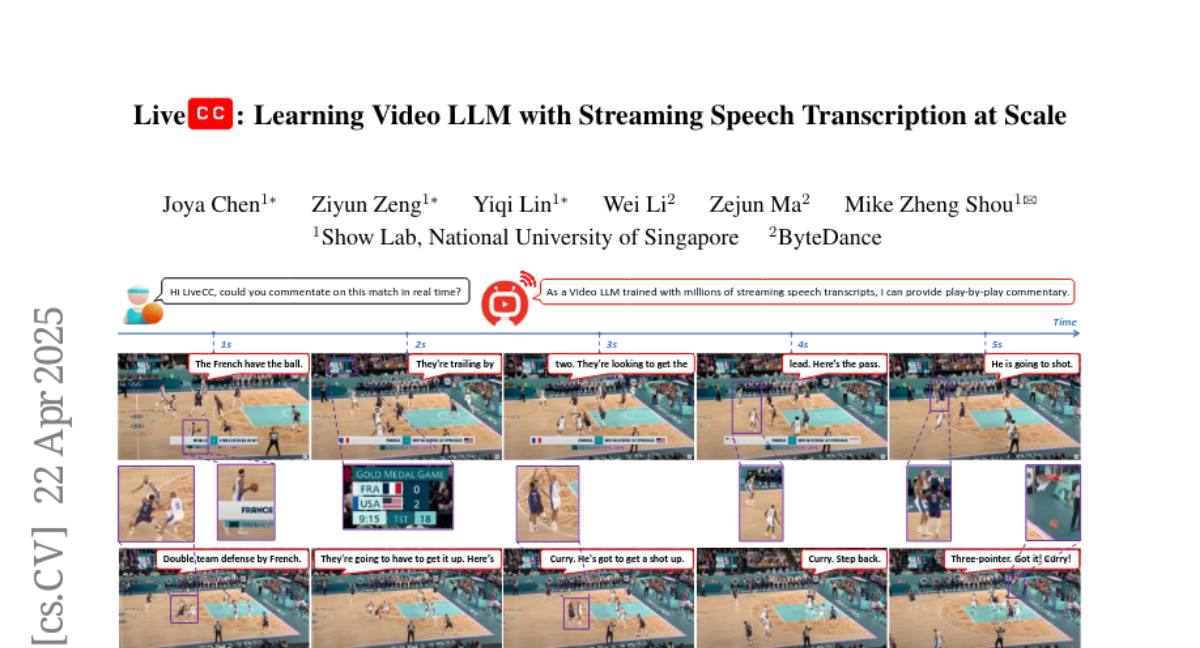

LiveCC: Learning Video LLM with Streaming Speech Transcription at Scale

Joya Chen, Ziyun Zeng, Yiqi Lin, Wei Li, Zejun Ma, Mike Zheng Shou

2025-04-23

Summary

This paper introduces LiveCC, an approach to training video large language models (Video LLMs) at scale on transcripts produced by automatic speech recognition (ASR), so that the models can understand and comment on videos.

What's the problem?

It is hard for AI models to answer questions about videos or to provide live commentary: videos are complex, and there is usually not enough labeled data to teach a model to understand both what is happening and what is being said.

What's the solution?

The researchers trained their video language models on large amounts of speech transcripts generated by ASR, which let the models learn from a large body of real video content. The resulting model performs competitively at answering questions about videos and can provide commentary in real time as a video plays.

Why does it matter?

This matters because it opens the door to smarter video assistants, better accessibility tools for people who need help understanding videos, and new ways to interact with video content in real time.

Abstract

Large-scale training for Video LLMs using ASR transcripts enables competitive video QA performance and real-time commentary capabilities.