LiveVQA: Live Visual Knowledge Seeking

Mingyang Fu, Yuyang Peng, Benlin Liu, Yao Wan, Dongping Chen

2025-04-08

Summary

This paper introduces LiveVQA, a benchmark that tests whether AI systems can answer questions about recent news images, such as explaining what is happening in a breaking-news photo or why it matters.

What's the problem?

Current AI models struggle to understand new images from the Internet that show the latest events, especially when a question requires connecting details from both the picture and recent news articles.

What's the solution?

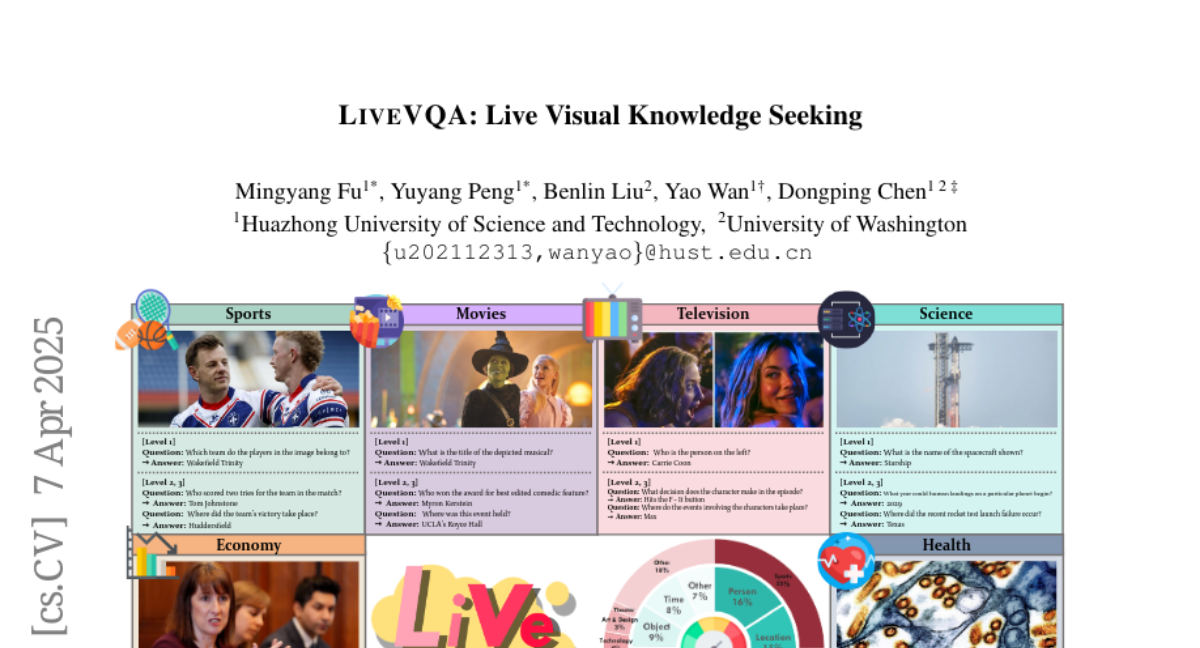

LiveVQA automatically builds a large collection of real news photos paired with questions, ranging from easy 'what' questions to harder 'why' questions, to train and test AI models on understanding fresh visual information.

Why does it matter?

This helps build better AI tools for real-time news analysis, fact-checking social media posts, and helping people understand breaking events through images.

Abstract

We introduce LiveVQA, an automatically collected dataset of the latest visual knowledge from the Internet, paired with synthesized VQA problems. LiveVQA consists of 3,602 single- and multi-hop visual questions drawn from 6 news websites across 14 news categories, featuring high-quality image-text coherence and authentic information. Our evaluation of 15 MLLMs (e.g., GPT-4o, Gemma-3, and the Qwen-2.5-VL family) shows that stronger models perform better overall, with advanced visual reasoning capabilities proving crucial for complex multi-hop questions. Despite excellent performance on textual problems, models equipped with tools such as search engines still show significant gaps on visual questions that require the latest visual knowledge, highlighting important areas for future research.
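To make the single-hop versus multi-hop breakdown concrete, here is a minimal Python sketch of how per-type accuracy might be computed over a LiveVQA-style dataset. The record layout (`LiveVQAItem` and its fields), the `normalize` and `accuracy_by_hop` helpers, and the exact-match scoring are all hypothetical assumptions for illustration; they are not the paper's released schema or its actual grading procedure, and the toy inputs are placeholders rather than real LiveVQA data.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LiveVQAItem:
    # Hypothetical record layout; field names are assumptions, not the released schema.
    image_path: str   # path to the news photo
    question: str
    answer: str       # gold answer
    category: str     # one of the 14 news categories
    multi_hop: bool   # False for single-hop, True for multi-hop questions


def normalize(text: str) -> str:
    # Lowercase and collapse whitespace: a simple stand-in for real answer grading.
    return " ".join(text.lower().split())


def accuracy_by_hop(items: list[LiveVQAItem], predictions: list[str]) -> dict[str, float]:
    """Exact-match accuracy, reported separately for single-hop and multi-hop questions."""
    if len(items) != len(predictions):
        raise ValueError("need exactly one prediction per item")
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for item, pred in zip(items, predictions):
        bucket = "multi-hop" if item.multi_hop else "single-hop"
        total[bucket] += 1
        if normalize(pred) == normalize(item.answer):
            correct[bucket] += 1
    # Buckets appear in the order they were first seen in `items`.
    return {bucket: correct[bucket] / total[bucket] for bucket in total}


# Toy placeholder inputs (not real LiveVQA data), only to exercise the function.
items = [
    LiveVQAItem("img_0.jpg", "placeholder question 0", "answer A", "category-x", False),
    LiveVQAItem("img_1.jpg", "placeholder question 1", "answer B", "category-y", True),
    LiveVQAItem("img_2.jpg", "placeholder question 2", "answer C", "category-x", False),
]
predictions = ["Answer  a", "answer D", "answer E"]
print(accuracy_by_hop(items, predictions))  # {'single-hop': 0.5, 'multi-hop': 0.0}
```

Reporting the two buckets separately is what allows an evaluation to show that a model doing well on single-hop questions can still lag on multi-hop ones. Exact string match is deliberately strict here; a real evaluation may need a more lenient answer matcher.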