LLaVAction: evaluating and training multi-modal large language models for action recognition

Shaokai Ye, Haozhe Qi, Alexander Mathis, Mackenzie W. Mathis

2025-03-26

Summary

This paper is about testing and improving how well AI models can understand human actions in videos.

What's the problem?

AI models that use both images and text struggle to accurately recognize actions in videos, especially when there are confusing options to choose from.

What's the solution?

The researchers created a new way to test these AI models and then developed methods to improve their ability to recognize actions, achieving better results than existing models.

Why it matters?

This work matters because it can lead to AI systems that better understand human behavior, which could be useful for things like video analysis, robotics, and healthcare.

Abstract

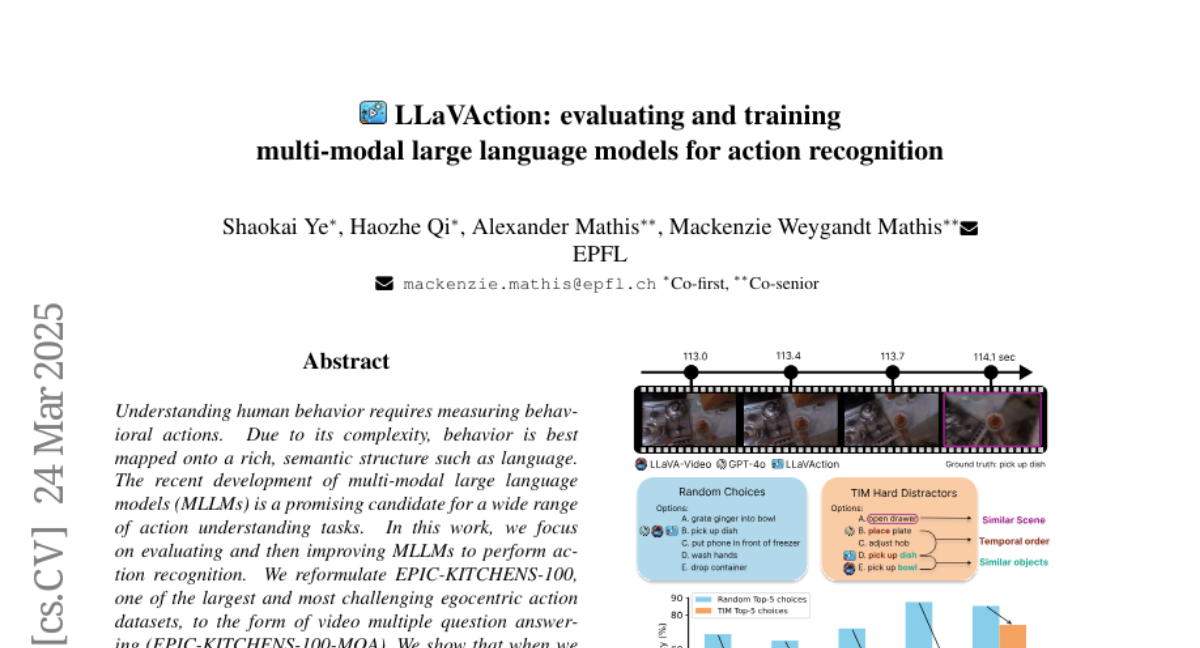

Understanding human behavior requires measuring behavioral actions. Due to its complexity, behavior is best mapped onto a rich, semantic structure such as language. The recent development of multi-modal large language models (MLLMs) is a promising candidate for a wide range of action understanding tasks. In this work, we focus on evaluating and then improving MLLMs to perform action recognition. We reformulate EPIC-KITCHENS-100, one of the largest and most challenging egocentric action datasets, to the form of video multiple question answering (EPIC-KITCHENS-100-MQA). We show that when we sample difficult incorrect answers as distractors, leading MLLMs struggle to recognize the correct actions. We propose a series of methods that greatly improve the MLLMs' ability to perform action recognition, achieving state-of-the-art on both the EPIC-KITCHENS-100 validation set, as well as outperforming GPT-4o by 21 points in accuracy on EPIC-KITCHENS-100-MQA. Lastly, we show improvements on other action-related video benchmarks such as EgoSchema, PerceptionTest, LongVideoBench, VideoMME and MVBench, suggesting that MLLMs are a promising path forward for complex action tasks. Code and models are available at: https://github.com/AdaptiveMotorControlLab/LLaVAction.