Long-Context Autoregressive Video Modeling with Next-Frame Prediction

Yuchao Gu, Weijia Mao, Mike Zheng Shou

2025-03-26

Summary

This paper is about improving how AI generates videos by helping it remember and use information from much longer stretches of the video.

What's the problem?

AI models that generate videos often struggle to keep a video consistent and coherent over longer periods of time. They have trouble remembering what happened earlier in the video and using that information to generate what comes next.

What's the solution?

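The researchers developed a method called Frame AutoRegressive (FAR), which generates a video one frame at a time, with each new frame conditioned on the frames before it. To balance nearby detail against the overall structure of the video, they add two techniques: FlexRoPE, a test-time adjustment that makes distant frames count less, and long short-term context modeling, which keeps recent frames at high resolution while encoding older frames with fewer tokens.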

Why does it matter?

This work matters because it can lead to AI that generates more realistic, coherent, and engaging videos, which could be useful for things like creating movies, video games, and virtual reality experiences.

Abstract

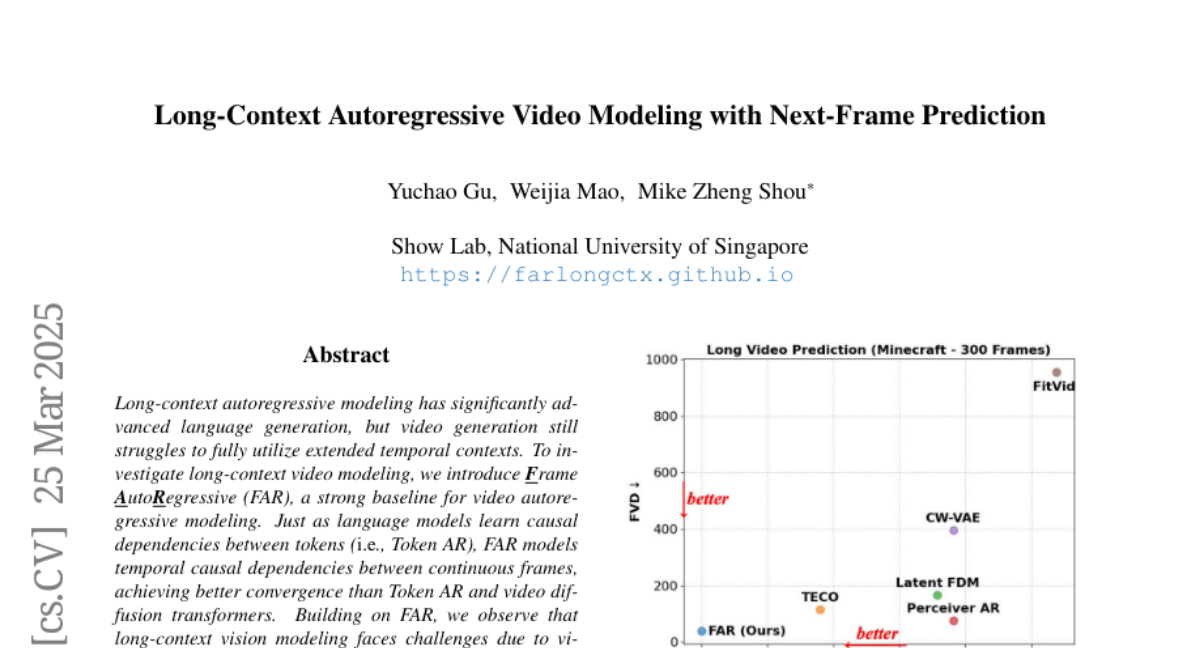

Long-context autoregressive modeling has significantly advanced language generation, but video generation still struggles to fully utilize extended temporal contexts. To investigate long-context video modeling, we introduce Frame AutoRegressive (FAR), a strong baseline for video autoregressive modeling. Just as language models learn causal dependencies between tokens (i.e., Token AR), FAR models temporal causal dependencies between continuous frames, achieving better convergence than Token AR and video diffusion transformers. Building on FAR, we observe that long-context vision modeling faces challenges due to visual redundancy. Existing RoPE lacks effective temporal decay for remote context and fails to extrapolate well to long video sequences. Additionally, training on long videos is computationally expensive, as vision tokens grow much faster than language tokens. To tackle these issues, we propose balancing locality and long-range dependency. We introduce FlexRoPE, a test-time technique that adds flexible temporal decay to RoPE, enabling extrapolation to 16x longer vision contexts. Furthermore, we propose long short-term context modeling, where a high-resolution short-term context window ensures fine-grained temporal consistency, while an unlimited long-term context window encodes long-range information using fewer tokens. With this approach, we can train on long video sequences with a manageable token context length. We demonstrate that FAR achieves state-of-the-art performance in both short- and long-video generation, providing a simple yet effective baseline for video autoregressive modeling.
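
The abstract names three mechanisms without giving formulas: causal dependencies between frames, a temporal decay on remote context, and a long-term context encoded with fewer tokens. The sketch below illustrates each idea in plain Python. It is not the authors' implementation. It assumes that tokens inside one frame attend to each other freely, it uses a linear, ALiBi-style distance penalty as a stand-in for the decay that FlexRoPE adds, and every function name and number in it is hypothetical.

```python
def frame_causal_mask(num_frames, tokens_per_frame):
    """mask[i][j] is True when query token i may attend to key token j.

    Assumption: full attention inside a frame, causal attention across frames.
    """
    n = num_frames * tokens_per_frame
    frame_of = [t // tokens_per_frame for t in range(n)]
    return [[frame_of[j] <= frame_of[i] for j in range(n)] for i in range(n)]


def temporal_decay_bias(num_frames, slope):
    """Additive attention bias between frames: 0 for the current frame,
    increasingly negative for older frames, -inf for future frames.

    Assumption: a linear penalty stands in for the paper's temporal decay.
    """
    neg_inf = float("-inf")
    return [
        [-slope * (q - k) if k <= q else neg_inf for k in range(num_frames)]
        for q in range(num_frames)
    ]


def context_tokens(num_frames, short_window, full_tokens, reduced_tokens):
    """Total context length when only the most recent `short_window` frames
    keep `full_tokens` each and older frames keep `reduced_tokens` each."""
    short = min(num_frames, short_window)
    long = num_frames - short
    return short * full_tokens + long * reduced_tokens


if __name__ == "__main__":
    mask = frame_causal_mask(num_frames=3, tokens_per_frame=2)
    print(mask[0])  # first frame sees only itself
    print(mask[5])  # last frame sees every earlier frame

    print(temporal_decay_bias(num_frames=3, slope=0.5)[2])

    # Hypothetical budget: 64 frames, 16 recent frames at 256 tokens,
    # 48 older frames at 16 tokens, versus 256 tokens for all 64 frames.
    print(context_tokens(64, 16, 256, 16), "vs", 64 * 256)
```

With these hypothetical settings the mixed context holds 4,864 tokens instead of 16,384, which is the kind of saving that lets long videos fit in a manageable context length.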