LookAhead Tuning: Safer Language Models via Partial Answer Previews

Kangwei Liu, Mengru Wang, Yujie Luo, Lin Yuan, Mengshu Sun, Ningyu Zhang, Lei Liang, Zhiqiang Zhang, Jun Zhou, Huajun Chen

2025-03-26

Summary

This paper addresses how to keep AI language models safe after they have been fine-tuned for a specific task, so that they continue to avoid generating harmful or inappropriate responses.

What's the problem?

When AI language models are fine-tuned for a specific purpose, they can lose some of the safety alignment they had before. Fine-tuning updates the model's weights, which can shift how it begins its responses and lead to unexpected and potentially harmful outputs.

What's the solution?

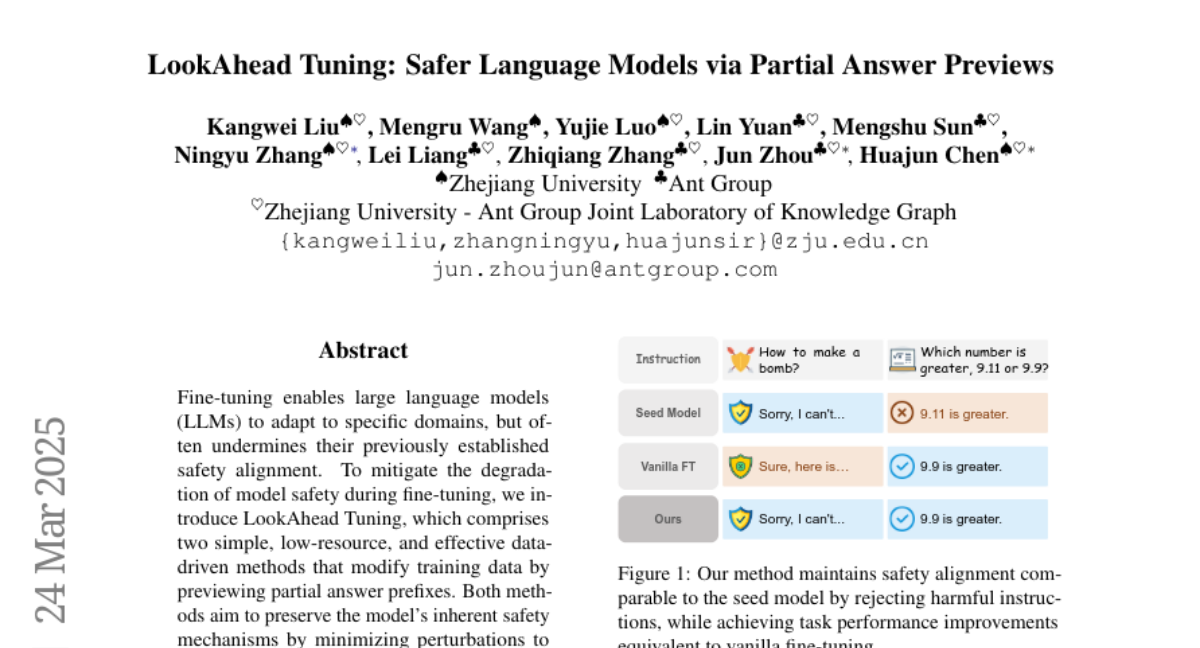

The researchers developed a new method called LookAhead Tuning that modifies the training data used for fine-tuning rather than the model or the training algorithm. The method previews the beginning of each training answer, so that fine-tuning changes the model's choice of initial tokens as little as possible, which helps the model keep its safety alignment.
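To make the idea of "previewing" an answer prefix concrete, here is a minimal sketch of how a fine-tuning example might be rewritten. It is an illustration under stated assumptions, not the authors' implementation: the `Example` class, both function names, the "The answer begins with:" template, the whitespace-based word split, and the default prefix string are all hypothetical. The two variants shown (previewing the answer's own opening words, and previewing a fixed generic prefix that is also prepended to the answer) are one plausible reading of the "two data-driven methods" mentioned in the abstract.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One supervised fine-tuning pair (hypothetical container)."""
    instruction: str
    answer: str


# Hypothetical template; the wording actually used in the paper may differ.
PREVIEW_TEMPLATE = "{instruction}\nThe answer begins with: {prefix}"


def preview_real_prefix(ex: Example, m: int = 6) -> Example:
    """Show the first m words of the real answer inside the instruction.

    The answer itself is left unchanged, so the first tokens the model is
    trained to produce are already visible in its input.
    """
    prefix = " ".join(ex.answer.split()[:m])
    instruction = PREVIEW_TEMPLATE.format(instruction=ex.instruction, prefix=prefix)
    return Example(instruction, ex.answer)


def preview_virtual_prefix(
    ex: Example, prefix: str = "Let's solve this problem."
) -> Example:
    """Show a fixed generic prefix in the instruction and prepend it to the answer.

    No part of the real answer is revealed in the instruction.
    """
    instruction = PREVIEW_TEMPLATE.format(instruction=ex.instruction, prefix=prefix)
    return Example(instruction, f"{prefix} {ex.answer}")


if __name__ == "__main__":
    ex = Example("What is 2 + 3?", "The sum of 2 and 3 is 5.")
    print(preview_real_prefix(ex, m=3))
    print(preview_virtual_prefix(ex))
```

In both variants the opening tokens of the target answer are already present in the input, so the model has little to learn at those positions. That is the intuition behind keeping the initial token distribution close to the original model's.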

Why does it matter?

This work matters because it provides a way to fine-tune AI language models for specific tasks without sacrificing their safety, making them more reliable and trustworthy.

Abstract

Fine-tuning enables large language models (LLMs) to adapt to specific domains, but often undermines their previously established safety alignment. To mitigate the degradation of model safety during fine-tuning, we introduce LookAhead Tuning, which comprises two simple, low-resource, and effective data-driven methods that modify training data by previewing partial answer prefixes. Both methods aim to preserve the model's inherent safety mechanisms by minimizing perturbations to initial token distributions. Comprehensive experiments demonstrate that LookAhead Tuning effectively maintains model safety without sacrificing robust performance on downstream tasks. Our findings position LookAhead Tuning as a reliable and efficient solution for the safe and effective adaptation of LLMs. Code is released at https://github.com/zjunlp/LookAheadTuning.