LPOSS: Label Propagation Over Patches and Pixels for Open-vocabulary Semantic Segmentation

Vladan Stojnić, Yannis Kalantidis, Jiří Matas, Giorgos Tolias

2025-03-26

Summary

This paper is about teaching computers to understand what's in a picture, even if they haven't been specifically trained to recognize those things.

What's the problem?

Existing AI models are good at recognizing objects they've been trained on, but they struggle with new or unusual objects.

What's the solution?

The researchers developed a new method that helps the AI connect the dots between different parts of the image, allowing it to recognize objects based on their relationships to each other, even if the AI has never seen them before.

Why it matters?

This work matters because it can make AI vision systems more adaptable and useful in real-world situations where they might encounter unfamiliar objects.

Abstract

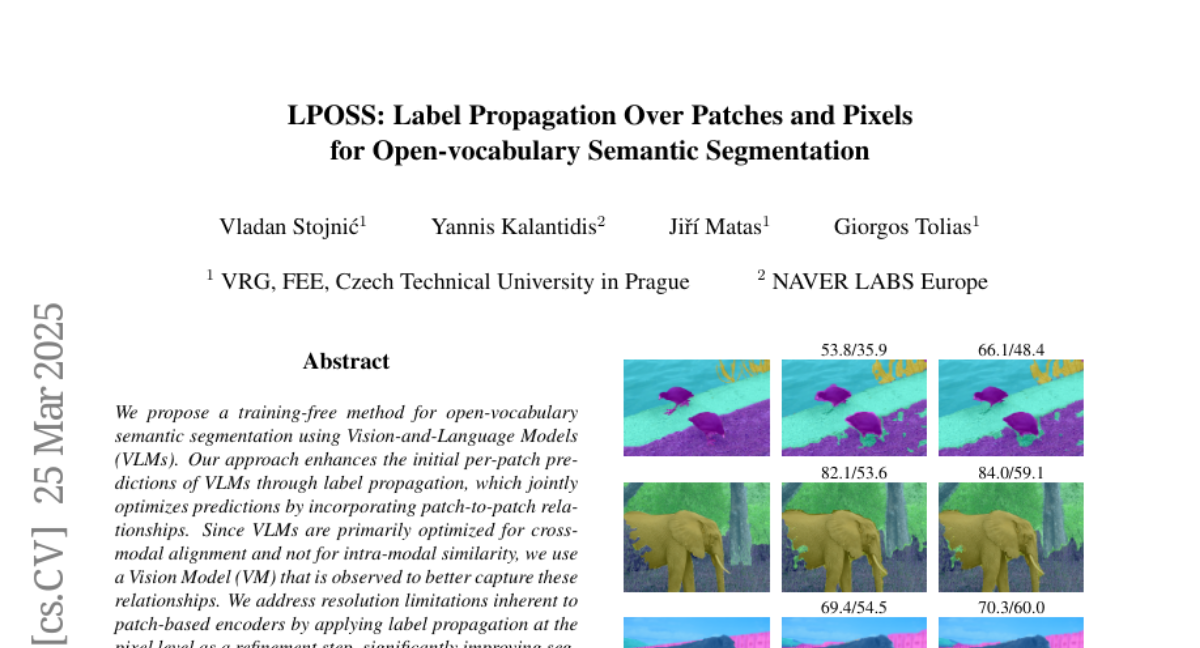

We propose a training-free method for open-vocabulary semantic segmentation using Vision-and-Language Models (VLMs). Our approach enhances the initial per-patch predictions of VLMs through label propagation, which jointly optimizes predictions by incorporating patch-to-patch relationships. Since VLMs are primarily optimized for cross-modal alignment and not for intra-modal similarity, we use a Vision Model (VM) that is observed to better capture these relationships. We address resolution limitations inherent to patch-based encoders by applying label propagation at the pixel level as a refinement step, significantly improving segmentation accuracy near class boundaries. Our method, called LPOSS+, performs inference over the entire image, avoiding window-based processing and thereby capturing contextual interactions across the full image. LPOSS+ achieves state-of-the-art performance among training-free methods, across a diverse set of datasets. Code: https://github.com/vladan-stojnic/LPOSS