Make Your Training Flexible: Towards Deployment-Efficient Video Models

Chenting Wang, Kunchang Li, Tianxiang Jiang, Xiangyu Zeng, Yi Wang, Limin Wang

2025-03-21

Summary

This paper introduces Flux, a training augmentation that lets video models stay accurate across different computational budgets, and FluxViT, a video model pre-trained with it.

What's the problem?

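Popular video training methods feed the model a fixed number of tokens sampled from a predetermined spatiotemporal grid. Because video is highly redundant, this gives a sub-optimal trade-off between accuracy and computation, and the resulting models adapt poorly to the varying compute budgets of downstream tasks.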

What's the solution?

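The researchers propose a test setting called Token Optimization, which picks a size-limited set of input tokens by selecting them from more suitably sampled videos. To train for it, they introduce Flux, an augmentation tool that makes the sampling grid flexible and applies token selection. Flux fits into most popular video training frameworks and makes models more robust at nearly no additional cost.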

Why does it matter?

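FluxViT, the model the authors pre-train at scale with their method, sets new state-of-the-art results across a wide range of tasks at standard cost. With Token Optimization and only 1/4 of the tokens, it still matches previous state-of-the-art models, which the authors report as nearly 90% savings. This makes strong video models more practical when computing power is limited.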

Abstract

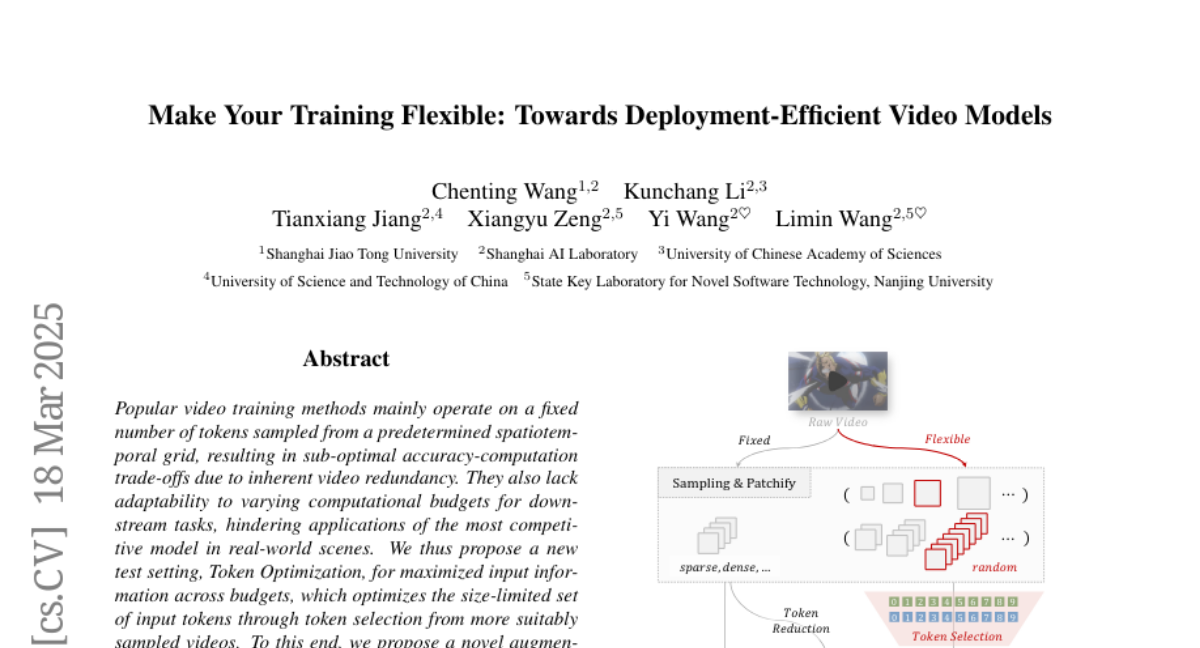

Popular video training methods mainly operate on a fixed number of tokens sampled from a predetermined spatiotemporal grid, resulting in sub-optimal accuracy-computation trade-offs due to inherent video redundancy. They also lack adaptability to varying computational budgets for downstream tasks, hindering applications of the most competitive model in real-world scenes. We thus propose a new test setting, Token Optimization, for maximized input information across budgets, which optimizes the size-limited set of input tokens through token selection from more suitably sampled videos. To this end, we propose a novel augmentation tool termed Flux. By making the sampling grid flexible and leveraging token selection, it is easily adopted in most popular video training frameworks, boosting model robustness with nearly no additional cost. We integrate Flux in large-scale video pre-training, and the resulting FluxViT establishes new state-of-the-art results across extensive tasks at standard costs. Notably, with 1/4 tokens only, it can still match the performance of previous state-of-the-art models with Token Optimization, yielding nearly 90% savings. All models and data are available at https://github.com/OpenGVLab/FluxViT.
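To make the idea of selecting a size-limited token set from a flexibly sampled video more concrete, here is a minimal NumPy sketch. It is not the authors' implementation: the function names `patchify` and `select_tokens`, the patch size, and in particular the scoring rule (mean absolute difference to the same patch in the neighbouring frame) are illustrative assumptions, since the abstract does not say how tokens are ranked.

```python
import numpy as np


def patchify(video: np.ndarray, patch: int = 16) -> np.ndarray:
    """Split a (T, H, W, C) video into flattened patch tokens of shape (N, D).

    Tokens are ordered frame by frame, then row by row, then column by column.
    """
    t, h, w, c = video.shape
    if h % patch or w % patch:
        raise ValueError("H and W must be divisible by the patch size")
    x = video.reshape(t, h // patch, patch, w // patch, patch, c)
    x = x.transpose(0, 1, 3, 2, 4, 5)  # (T, H', W', patch, patch, C)
    return x.reshape(-1, patch * patch * c)


def select_tokens(video: np.ndarray, budget: int, patch: int = 16):
    """Keep at most `budget` tokens, ranked by a hypothetical motion score.

    Assumption: a token is "informative" if it differs from the same patch
    in the previous frame (the first frame is compared with the second).
    """
    t = video.shape[0]
    if t < 2:
        raise ValueError("need at least two frames to compute differences")
    tokens = patchify(video, patch)
    grid = tokens.reshape(t, tokens.shape[0] // t, -1).astype(np.float32)
    diff = np.abs(np.diff(grid, axis=0, prepend=grid[1:2]))
    scores = diff.mean(axis=-1).reshape(-1)
    keep = np.argsort(-scores, kind="stable")[:budget]
    keep.sort()  # restore spatiotemporal order of the kept tokens
    return tokens[keep], keep


# The sampling grid is a free choice: sample more frames (or a higher
# resolution) than the budget allows, then select down to the budget.
rng = np.random.default_rng(0)
dense_video = rng.random((8, 32, 32, 3))  # 8 frames -> 8 * 2 * 2 = 32 tokens
selected, indices = select_tokens(dense_video, budget=8)
# selected.shape == (8, 768)
```

The same `budget` can be paired with different sampling grids, for example more frames at lower resolution or fewer frames at higher resolution, which is the flexibility the abstract refers to; the selection step is what keeps the token count fixed.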