Mamba as a Bridge: Where Vision Foundation Models Meet Vision Language Models for Domain-Generalized Semantic Segmentation

Xin Zhang, Robby T. Tan

2025-04-08

Summary

This paper introduces MFuser, a framework that combines two types of image-understanding models to label every pixel of an image more accurately, even when the test images come from a different domain than the training data (for example, training on synthetic images and testing on real ones).

What's the problem?

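Existing methods usually rely on only one of two model types. Vision foundation models such as DINOv2 capture fine-grained visual detail, while vision-language models such as CLIP align images well with text but produce coarser features. Combining the two with attention is costly, because the larger number of image patch tokens makes the sequence long and hard to model.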

What's the solution?

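MFuser uses Mamba-based modules to merge the strengths of both models, the fine detail of one and the text alignment of the other, while its cost grows only linearly with the number of tokens. One module, MVFuser, jointly fine-tunes the two models; another, MTEnhancer, refines the text embeddings using information from the image.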

Why it matters?

Segmentation models that hold up across different types of images are useful for applications such as self-driving cars, which must recognize objects in any environment, and medical tools that analyze scans from various machines.

Abstract

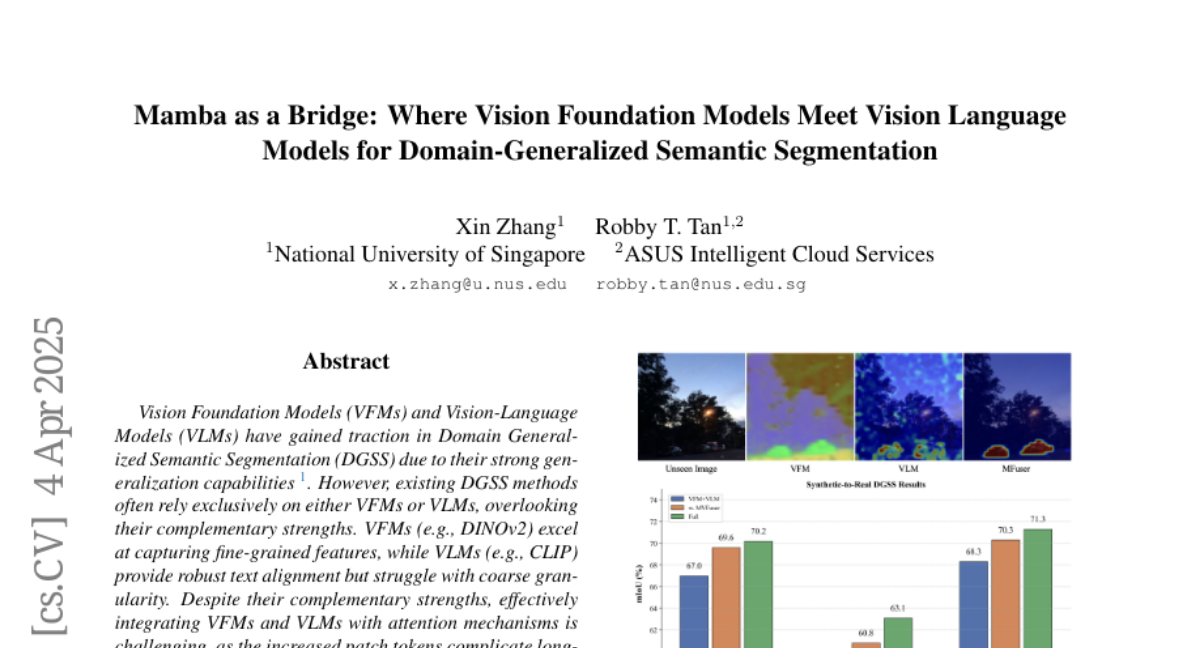

Vision Foundation Models (VFMs) and Vision-Language Models (VLMs) have gained traction in Domain Generalized Semantic Segmentation (DGSS) due to their strong generalization capabilities. However, existing DGSS methods often rely exclusively on either VFMs or VLMs, overlooking their complementary strengths. VFMs (e.g., DINOv2) excel at capturing fine-grained features, while VLMs (e.g., CLIP) provide robust text alignment but struggle with coarse granularity. Despite their complementary strengths, effectively integrating VFMs and VLMs with attention mechanisms is challenging, as the increased patch tokens complicate long-sequence modeling. To address this, we propose MFuser, a novel Mamba-based fusion framework that efficiently combines the strengths of VFMs and VLMs while maintaining linear scalability in sequence length. MFuser consists of two key components: MVFuser, which acts as a co-adapter to jointly fine-tune the two models by capturing both sequential and spatial dynamics; and MTEnhancer, a hybrid attention-Mamba module that refines text embeddings by incorporating image priors. Our approach achieves precise feature locality and strong text alignment without incurring significant computational overhead. Extensive experiments demonstrate that MFuser significantly outperforms state-of-the-art DGSS methods, achieving 68.20 mIoU on synthetic-to-real and 71.87 mIoU on real-to-real benchmarks. The code is available at https://github.com/devinxzhang/MFuser.
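The scaling argument in the abstract can be illustrated with a rough operation count. The sketch below is not the authors' code. It assumes, purely for illustration, that the patch tokens of the two encoders are concatenated into one sequence, and it uses a hypothetical 512-pixel image with 16-pixel patches. Constant factors and hidden dimensions are ignored; only the growth rate with sequence length is shown.

```python
def fused_sequence_length(image_size: int, patch_size: int, num_encoders: int = 2) -> int:
    """Token count when the patch tokens of several encoders are concatenated.

    Concatenation is an assumption made for this illustration.
    """
    patches_per_side = image_size // patch_size
    return num_encoders * patches_per_side * patches_per_side


def attention_pairwise_ops(seq_len: int) -> int:
    """Self-attention compares every token with every other token: O(L^2)."""
    return seq_len * seq_len


def linear_scan_ops(seq_len: int) -> int:
    """A linear-time sequence model (such as a state-space scan) visits each token once: O(L)."""
    return seq_len


# Hypothetical sizes, not taken from the paper.
single = fused_sequence_length(512, 16, num_encoders=1)
fused = fused_sequence_length(512, 16, num_encoders=2)

# How much the cost grows when the second encoder's tokens are added.
attention_growth = attention_pairwise_ops(fused) / attention_pairwise_ops(single)
scan_growth = linear_scan_ops(fused) / linear_scan_ops(single)

print(f"tokens: {single} -> {fused}")
print(f"attention cost grows {attention_growth:.1f}x, linear scan cost grows {scan_growth:.1f}x")
```

Under these assumptions, doubling the sequence length quadruples the pairwise attention cost but only doubles the cost of a linear-time model, which is the motivation the abstract gives for a Mamba-based fusion module.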