Mamba-YOLO-World: Marrying YOLO-World with Mamba for Open-Vocabulary Detection

Haoxuan Wang, Qingdong He, Jinlong Peng, Hao Yang, Mingmin Chi, Yabiao Wang

2024-09-16

Summary

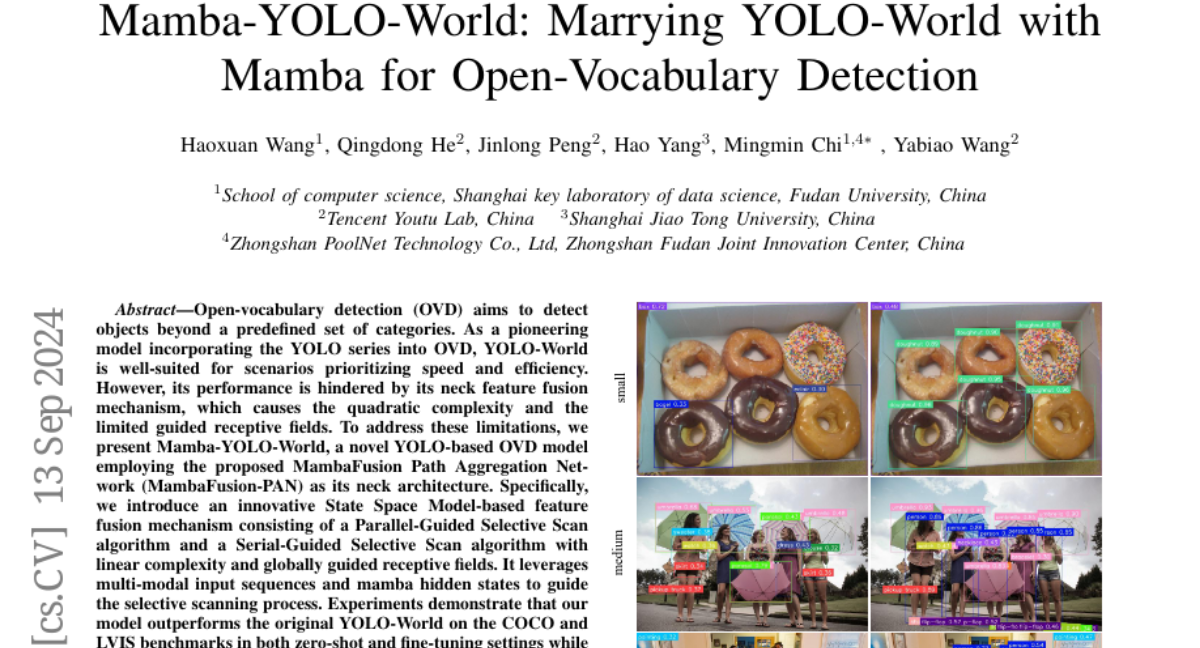

This paper introduces Mamba-YOLO-World, a new model that pairs the YOLO-World object detector with a Mamba-based feature fusion neck called MambaFusion-PAN to improve the detection of objects that aren't part of a fixed list of categories.

What's the problem?

Open-vocabulary detection (OVD) is challenging because traditional detectors can only recognize a predefined set of categories. YOLO-World is fast and efficient, but it is held back by the feature fusion mechanism in its neck, which has quadratic complexity and limited guided receptive fields.

What's the solution?

Mamba-YOLO-World replaces YOLO-World's neck with a new feature fusion architecture, the MambaFusion Path Aggregation Network (MambaFusion-PAN). It is built on a State Space Model and uses two algorithms, Parallel-Guided Selective Scan and Serial-Guided Selective Scan, to fuse multi-modal features with linear complexity and globally guided receptive fields. The authors report better detection accuracy than the original YOLO-World with comparable parameters and FLOPs.
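As a rough illustration of what a "guided selective scan" means, here is a minimal sketch of a state-space-style scan whose gates depend on both the current token and a guidance signal. It is not the paper's Parallel-Guided or Serial-Guided Selective Scan: the function name `guided_selective_scan`, the scalar `guide` value, and the gate formula are all assumptions made up for this example. It only shows the general idea of one left-to-right pass with a hidden state that carries information across the whole sequence.

```python
import math


def sigmoid(z):
    """Logistic function, used here to keep each gate in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def guided_selective_scan(tokens, guide, state_dim=4):
    """Toy guided scan (hypothetical, not the paper's algorithm).

    tokens: list of floats standing in for a flattened feature sequence.
    guide:  float standing in for a guidance signal from another modality.
    Returns one output per input token.
    """
    hidden = [0.0] * state_dim  # state carried across the whole sequence
    outputs = []
    for x in tokens:  # a single pass: work grows linearly with len(tokens)
        for k in range(state_dim):
            # "Selective": the gate depends on the current token and the guide.
            # This particular formula is an assumption chosen for simplicity.
            keep = sigmoid(x + guide - k)
            hidden[k] = keep * hidden[k] + (1.0 - keep) * x
        outputs.append(sum(hidden) / state_dim)
    return outputs


if __name__ == "__main__":
    print(guided_selective_scan([1.0, 2.0, 3.0], guide=0.5))
```

Because each output depends only on the hidden state and the current token, earlier outputs do not change when later tokens change, and the cost of the scan grows with the sequence length rather than with its square.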

Why does it matter?

This research is important because it improves how AI can recognize and classify objects in real time, even if those objects weren't specifically included in its training. This capability is crucial for applications like robotics, surveillance, and autonomous vehicles, where recognizing a wide range of objects quickly and accurately is essential.

Abstract

Open-vocabulary detection (OVD) aims to detect objects beyond a predefined set of categories. As a pioneering model incorporating the YOLO series into OVD, YOLO-World is well-suited for scenarios prioritizing speed and efficiency. However, its performance is hindered by its neck feature fusion mechanism, which causes the quadratic complexity and the limited guided receptive fields. To address these limitations, we present Mamba-YOLO-World, a novel YOLO-based OVD model employing the proposed MambaFusion Path Aggregation Network (MambaFusion-PAN) as its neck architecture. Specifically, we introduce an innovative State Space Model-based feature fusion mechanism consisting of a Parallel-Guided Selective Scan algorithm and a Serial-Guided Selective Scan algorithm with linear complexity and globally guided receptive fields. It leverages multi-modal input sequences and mamba hidden states to guide the selective scanning process. Experiments demonstrate that our model outperforms the original YOLO-World on the COCO and LVIS benchmarks in both zero-shot and fine-tuning settings while maintaining comparable parameters and FLOPs. Additionally, it surpasses existing state-of-the-art OVD methods with fewer parameters and FLOPs.
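To make the contrast between quadratic and linear complexity concrete, the sketch below counts token-level interactions for two generic fusion styles. Both functions are assumptions for illustration only: `pairwise_fusion_ops` models any scheme in which every image token interacts with every text token, and `scan_fusion_ops` models a single pass over both sequences. Neither is a measurement of YOLO-World or Mamba-YOLO-World, and constant factors such as the feature dimension are ignored.

```python
def pairwise_fusion_ops(num_image_tokens, num_text_tokens):
    """Interactions when every image token is compared with every text token."""
    return num_image_tokens * num_text_tokens


def scan_fusion_ops(num_image_tokens, num_text_tokens):
    """Interactions when both sequences are visited once in a single scan."""
    return num_image_tokens + num_text_tokens


if __name__ == "__main__":
    # Hypothetical sequence lengths, chosen only to show the growth rates.
    for n_img, n_txt in [(100, 10), (200, 20), (400, 40)]:
        print(
            n_img,
            n_txt,
            pairwise_fusion_ops(n_img, n_txt),
            scan_fusion_ops(n_img, n_txt),
        )
```

Doubling both sequence lengths quadruples the pairwise count but only doubles the scan count, which is the scaling difference the abstract refers to.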