MAPS: A Multi-Agent Framework Based on Big Seven Personality and Socratic Guidance for Multimodal Scientific Problem Solving

Jian Zhang, Zhiyuan Wang, Zhangqi Wang, Xinyu Zhang, Fangzhi Xu, Qika Lin, Rui Mao, Erik Cambria, Jun Liu

2025-03-24

Summary

This paper introduces a new AI system that uses different AI 'agents' with unique personalities to solve science problems that involve both text and diagrams.

What's the problem?

It's difficult for AI to solve complex science problems that require understanding information from multiple sources, like text and images, and to think critically about the problem.

What's the solution?

The researchers created a system where different AI agents with different personalities work together to solve the problem. One agent acts as a 'critic' and uses questions to encourage the other agents to think more deeply.

Why it matters?

This work matters because it can help AI become better at solving complex scientific problems, which could lead to new discoveries and innovations.

Abstract

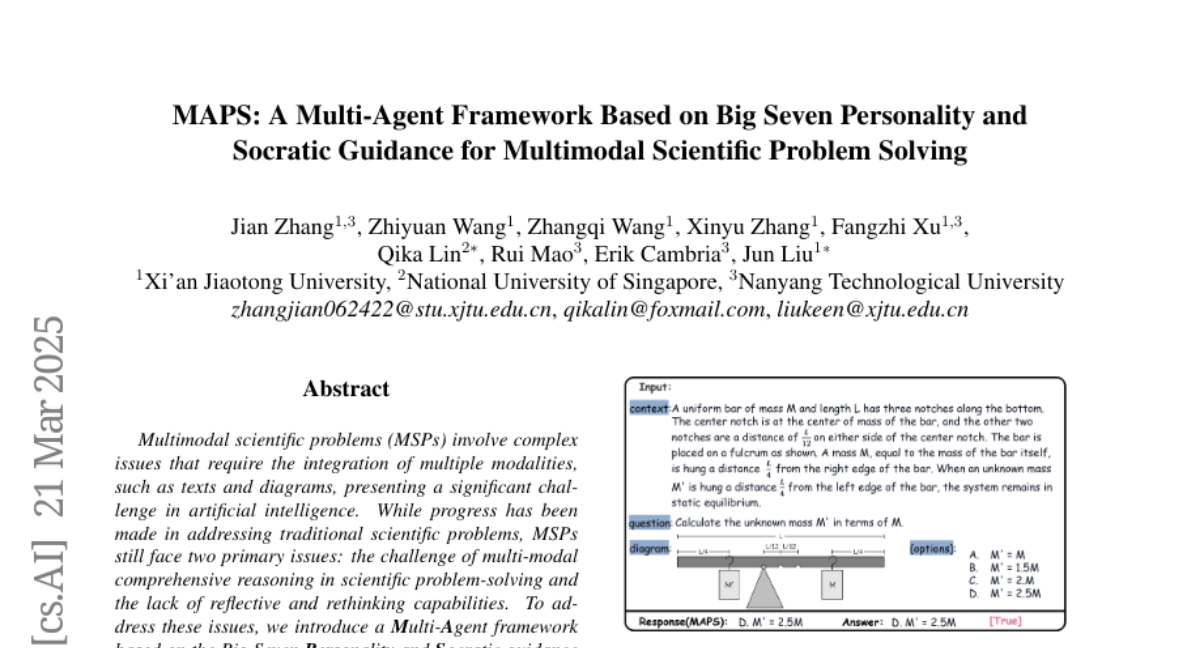

Multimodal scientific problems (MSPs) involve complex issues that require the integration of multiple modalities, such as text and diagrams, presenting a significant challenge in artificial intelligence. While progress has been made in addressing traditional scientific problems, MSPs still face two primary issues: the challenge of multi-modal comprehensive reasoning in scientific problem-solving and the lack of reflective and rethinking capabilities. To address these issues, we introduce a Multi-Agent framework based on the Big Seven Personality and Socratic guidance (MAPS). This framework employs seven distinct agents that leverage feedback mechanisms and the Socratic method to guide the resolution of MSPs. To tackle the first issue, we propose a progressive four-agent solving strategy, where each agent focuses on a specific stage of the problem-solving process. For the second issue, we introduce a Critic agent, inspired by Socratic questioning, which prompts critical thinking and stimulates autonomous learning. We conduct extensive experiments on the EMMA, Olympiad, and MathVista datasets, achieving promising results that outperform the current SOTA model by 15.84% across all tasks. Meanwhile, the additional analytical experiments also verify the model's progress as well as generalization ability.