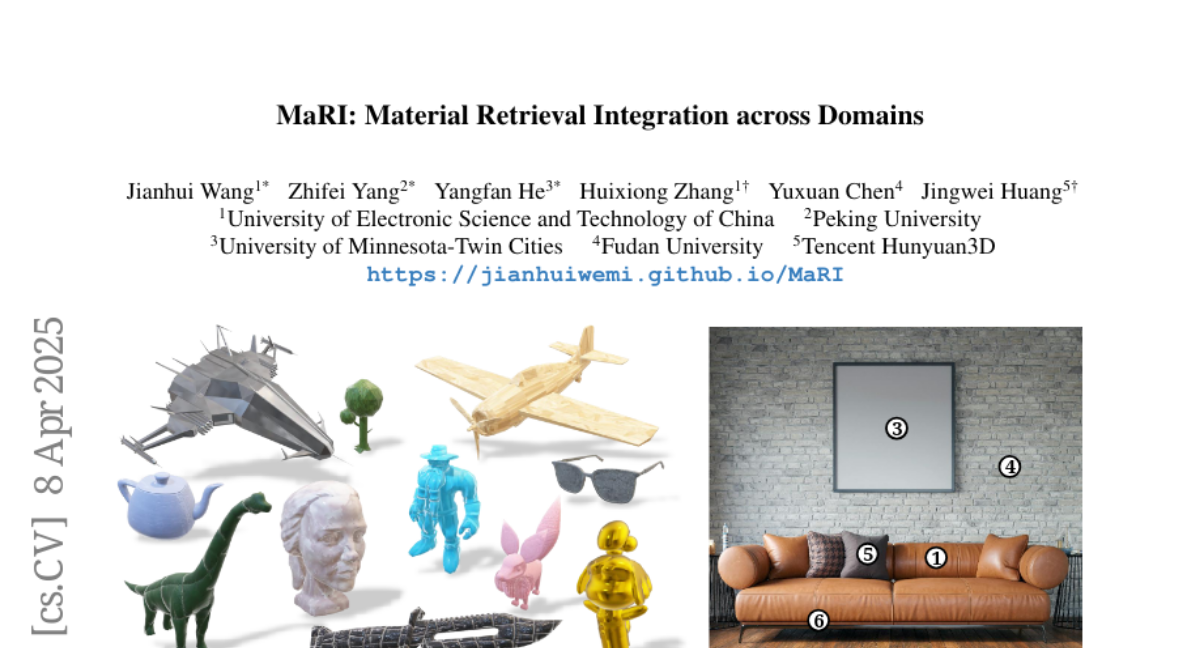

MaRI: Material Retrieval Integration across Domains

Jianhui Wang, Zhifei Yang, Yangfan He, Huixiong Zhang, Yuxuan Chen, Jingwei Huang

2025-03-17

Summary

This paper introduces MaRI, a framework for material retrieval that learns a shared embedding space for images and materials, bridging the gap between synthetic and real-world materials.

What's the problem?

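Accurate material retrieval is critical for creating realistic 3D assets, but existing methods rely on datasets of shape-invariant, lighting-varied material representations, which are scarce, limited in diversity, and generalize poorly to the real world. Most current approaches also reuse traditional image search techniques, which do not capture the unique properties of material spaces and therefore retrieve suboptimal matches.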

What's the solution?

MaRI jointly trains an image encoder and a material encoder with a contrastive learning strategy, pulling similar materials and images closer in the feature space while pushing dissimilar pairs apart. To support this training, the authors construct a dataset that combines high-quality synthetic materials rendered with controlled shape variations and diverse lighting conditions with real-world materials processed and standardized using material transfer techniques.

Why it matters?

Retrieving the right material is a key step in building realistic 3D assets. The authors report that MaRI outperforms existing methods in accuracy and generalization across diverse and complex material retrieval tasks.

Abstract

Accurate material retrieval is critical for creating realistic 3D assets. Existing methods rely on datasets that capture shape-invariant and lighting-varied representations of materials, which are scarce and face challenges due to limited diversity and inadequate real-world generalization. Most current approaches adopt traditional image search techniques. They fall short in capturing the unique properties of material spaces, leading to suboptimal performance in retrieval tasks. Addressing these challenges, we introduce MaRI, a framework designed to bridge the feature space gap between synthetic and real-world materials. MaRI constructs a shared embedding space that harmonizes visual and material attributes through a contrastive learning strategy by jointly training an image and a material encoder, bringing similar materials and images closer while separating dissimilar pairs within the feature space. To support this, we construct a comprehensive dataset comprising high-quality synthetic materials rendered with controlled shape variations and diverse lighting conditions, along with real-world materials processed and standardized using material transfer techniques. Extensive experiments demonstrate the superior performance, accuracy, and generalization capabilities of MaRI across diverse and complex material retrieval tasks, outperforming existing methods.
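
The contrastive objective described in the abstract can be illustrated with a small sketch. The abstract does not give the exact loss, so the code below assumes a CLIP-style symmetric InfoNCE loss over paired image and material embeddings; the function names, the temperature value, and the use of cosine similarity are illustrative assumptions, not MaRI's actual implementation. The random arrays stand in for the outputs of the two encoders.

```python
import numpy as np


def l2_normalize(x, eps=1e-12):
    """Scale each row to unit length so dot products become cosine similarities."""
    return x / np.maximum(np.linalg.norm(x, axis=1, keepdims=True), eps)


def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))


def symmetric_contrastive_loss(image_emb, material_emb, temperature=0.07):
    """Assumed CLIP-style loss: row i of each array is a matching image/material pair.

    Matching pairs (the diagonal of the similarity matrix) are pulled together,
    and every other pairing in the batch is treated as a negative.
    """
    img = l2_normalize(image_emb)
    mat = l2_normalize(material_emb)
    logits = img @ mat.T / temperature  # shape: (batch, batch)
    idx = np.arange(len(img))
    loss_image_to_material = -log_softmax(logits, axis=1)[idx, idx].mean()
    loss_material_to_image = -log_softmax(logits, axis=0)[idx, idx].mean()
    return 0.5 * (loss_image_to_material + loss_material_to_image)


def retrieve(query_emb, gallery_emb, k=1):
    """Return indices of the k most similar gallery materials for each query image."""
    sims = l2_normalize(query_emb) @ l2_normalize(gallery_emb).T
    return np.argsort(-sims, axis=1)[:, :k]


# Stand-in embeddings; in practice these would come from the two trained encoders.
rng = np.random.default_rng(0)
image_emb = rng.normal(size=(8, 16))
material_emb = image_emb + 0.01 * rng.normal(size=(8, 16))  # nearly aligned pairs

loss_aligned = symmetric_contrastive_loss(image_emb, material_emb)
loss_shuffled = symmetric_contrastive_loss(image_emb, np.roll(material_emb, 1, axis=0))
top1 = retrieve(image_emb, material_emb, k=1)
```

In this sketch the loss is lower when each image is paired with its own material than when the pairs are shuffled, and retrieval reduces to a nearest-neighbor lookup in the shared space, which is the behavior the abstract attributes to the learned embedding.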