Masking in Multi-hop QA: An Analysis of How Language Models Perform with Context Permutation

Wenyu Huang, Pavlos Vougiouklis, Mirella Lapata, Jeff Z. Pan

2025-05-21

Summary

This paper examines how different types of language models handle multi-hop questions, which require combining information from several sources, and how shuffling the order of that information affects their answers.

What's the problem?

Language models often struggle with multi-hop question answering, which means answering questions that need facts from more than one source, especially when the order in which those facts are presented changes.

What's the solution?

The researchers found that encoder-decoder models, which can look at information in both directions, do better at these complex questions than causal decoder-only models, which read information in one direction only, especially when the order of the facts is mixed up.

Why does it matter?

This matters because it helps us build smarter AI systems that can handle complicated questions more accurately, which is useful for things like research, homework help, and searching for information online.

Abstract

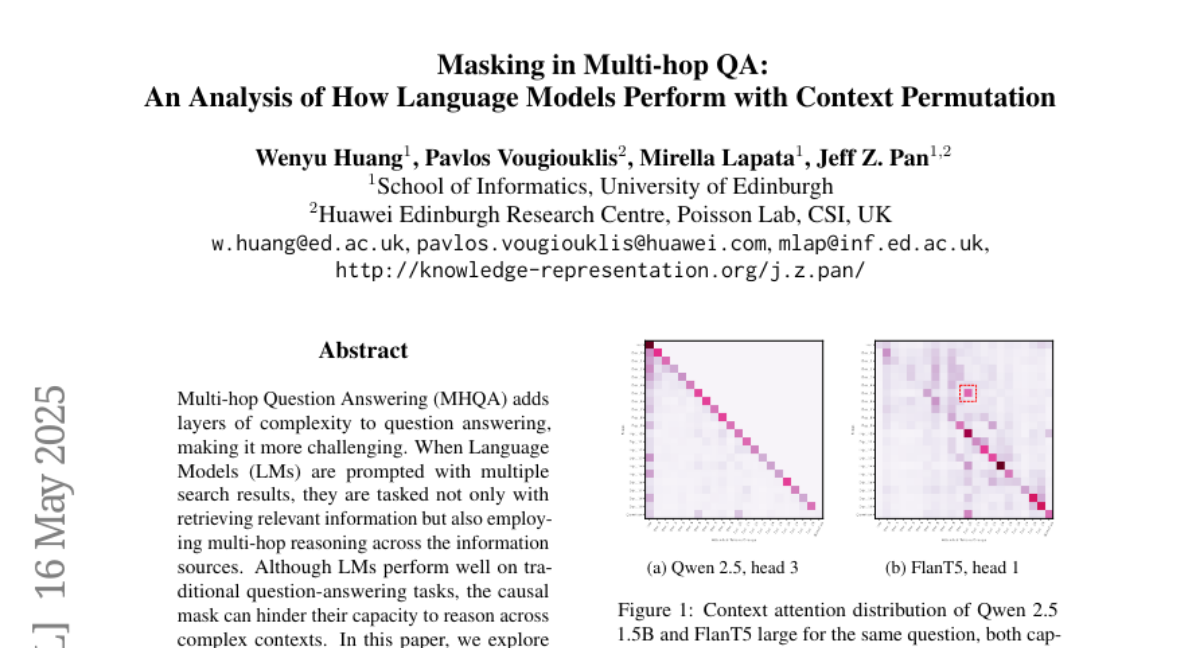

Encoder-decoder models outperform causal decoder-only models in multi-hop question answering by leveraging permutation of search results and bi-directional attention.
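
The difference between causal and bi-directional attention, and what permuting the context means, can be illustrated with a minimal sketch. This is not the paper's implementation: the function names, the document labels, and the simplification of treating each document as a single position are all assumptions made for illustration.

```python
from itertools import permutations


def causal_mask(n):
    """mask[i][j] is True when position i may attend to position j.

    Under a causal mask, a position sees only itself and earlier positions.
    """
    return [[j <= i for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """Under a bi-directional mask, every position sees every other position."""
    return [[True] * n for _ in range(n)]


def visible_positions(mask, i):
    """Return the indices that position i is allowed to attend to."""
    return [j for j, allowed in enumerate(mask[i]) if allowed]


# Hypothetical retrieved documents; each is treated as one position for simplicity.
docs = ["doc_A", "doc_B", "doc_C"]

# Every possible ordering of the context (context permutation).
orderings = list(permutations(docs))

# With a causal mask the first document cannot see the later ones,
# so what it can be related to depends on where it sits in the ordering.
first_sees_causal = visible_positions(causal_mask(len(docs)), 0)
first_sees_bidirectional = visible_positions(bidirectional_mask(len(docs)), 0)
```

In this toy setting, the first document attends only to itself under the causal mask but to all three documents under the bi-directional mask, which is one intuition for why document order may matter more for causal decoder-only models.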