Material Anything: Generating Materials for Any 3D Object via Diffusion

Xin Huang, Tengfei Wang, Ziwei Liu, Qing Wang

2024-11-25

Summary

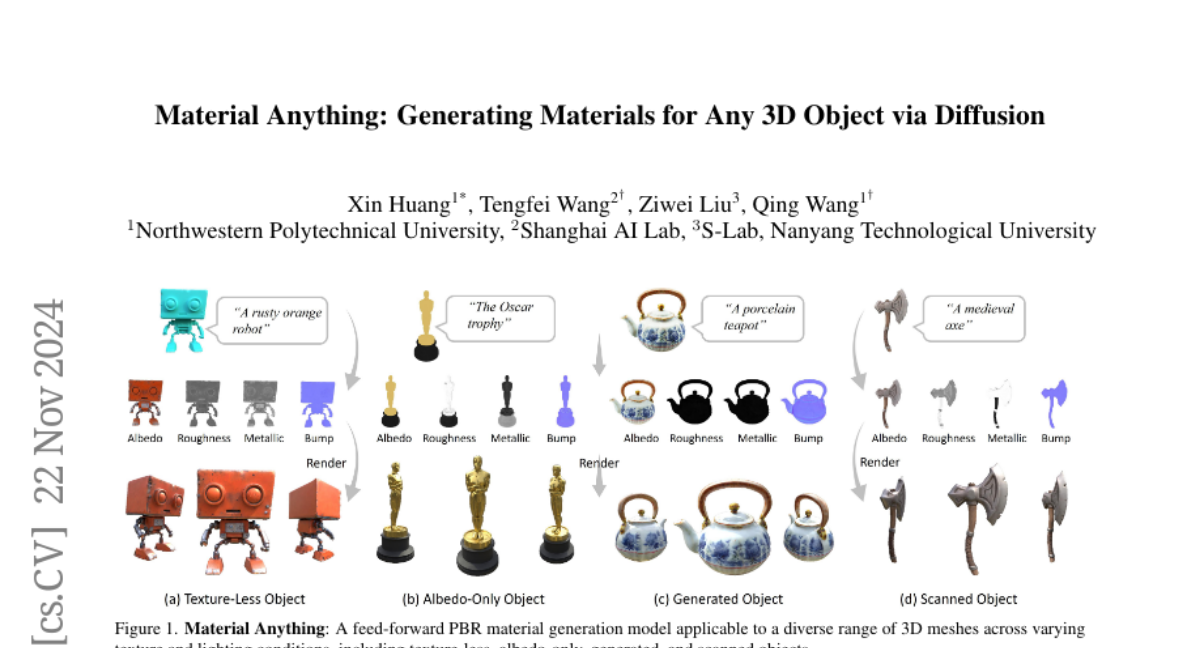

This paper presents Material Anything, an automated system that uses a diffusion model to generate realistic, physically-based materials for 3D objects. The system adapts to different lighting conditions and object types.

What's the problem?

Existing methods for creating materials for 3D objects often rely on complex pipelines or optimizations tuned to a specific case, which makes them inflexible. They struggle with varied lighting conditions and with certain kinds of objects, such as those without textures or with only basic colors. This makes it hard to produce high-quality, realistic materials that can be reused across applications.

What's the solution?

Material Anything addresses these issues with a single, unified diffusion framework. It builds on a pre-trained image diffusion model and adds a triple-head architecture and a rendering loss, which improve the stability of the model and the quality of the materials it produces. The system uses 'confidence masks' as a switch inside the diffusion model, so the same model can handle both textured and texture-less objects under varying lighting. Generation happens in two stages: materials are first generated progressively in image space, guided by the confidence masks, and are then refined in UV space so the output is consistent and ready to use.
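To make the idea of a confidence mask acting as a switch more concrete, here is a minimal toy sketch. It is not the paper's implementation: the function name `merge_with_confidence`, the flat-list data layout, and the "keep the more confident value" rule are all assumptions chosen for illustration. It only shows how a per-texel confidence value could decide whether an existing material estimate is kept or replaced by a newly generated one.

```python
def merge_with_confidence(known, known_conf, new, new_conf):
    """Toy per-texel switch (hypothetical, for illustration only).

    known, new: lists of material values (e.g. roughness) per texel.
    known_conf, new_conf: lists of confidences in [0, 1] per texel.
    Returns the merged values and their confidences.
    """
    merged, merged_conf = [], []
    for k, kc, n, nc in zip(known, known_conf, new, new_conf):
        if nc > kc:
            # The new estimate is more trusted: switch to it.
            merged.append(n)
            merged_conf.append(nc)
        else:
            # Keep the existing estimate.
            merged.append(k)
            merged_conf.append(kc)
    return merged, merged_conf


# Two texels: the first is already known with full confidence,
# the second has not been estimated yet (confidence 0).
known, known_conf = [0.2, 0.0], [1.0, 0.0]
new, new_conf = [0.9, 0.5], [0.6, 0.6]

merged, merged_conf = merge_with_confidence(known, known_conf, new, new_conf)
print(merged, merged_conf)
```

In this toy setup the first texel keeps its existing value and the second takes the new one. In the actual method, the masks condition the diffusion model itself rather than a post-hoc merge like this.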

Why does it matter?

This research is important because it offers an easy-to-use way to generate high-quality materials for 3D objects, which makes material creation more accessible to artists and developers in fields like gaming, animation, and virtual reality. Better materials make digital content more realistic and open up more creative possibilities in 3D modeling.

Abstract

We present Material Anything, a fully-automated, unified diffusion framework designed to generate physically-based materials for 3D objects. Unlike existing methods that rely on complex pipelines or case-specific optimizations, Material Anything offers a robust, end-to-end solution adaptable to objects under diverse lighting conditions. Our approach leverages a pre-trained image diffusion model, enhanced with a triple-head architecture and rendering loss to improve stability and material quality. Additionally, we introduce confidence masks as a dynamic switcher within the diffusion model, enabling it to effectively handle both textured and texture-less objects across varying lighting conditions. By employing a progressive material generation strategy guided by these confidence masks, along with a UV-space material refiner, our method ensures consistent, UV-ready material outputs. Extensive experiments demonstrate our approach outperforms existing methods across a wide range of object categories and lighting conditions.