MDocAgent: A Multi-Modal Multi-Agent Framework for Document Understanding

Siwei Han, Peng Xia, Ruiyi Zhang, Tong Sun, Yun Li, Hongtu Zhu, Huaxiu Yao

2025-03-26

Summary

This paper introduces a way to make AI better at understanding documents: several AI agents work together, drawing on both the text and the images in a document.

What's the problem?

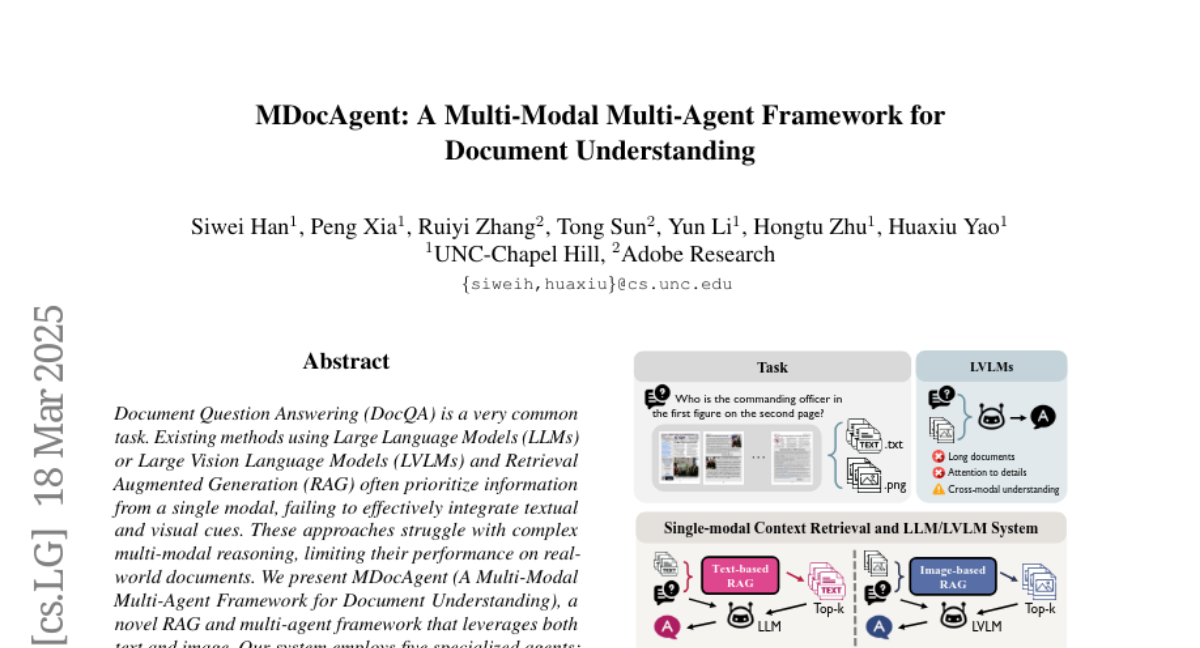

Current AI models often struggle to understand documents fully because they focus on either the text or the images, but not both at the same time. This makes it hard to answer questions about documents that have complex layouts and information presented in different ways.

What's the solution?

The researchers created a new system called MDocAgent that uses several AI agents, each with a specific job, like reading the text, looking at the images, and summarizing the information. These agents work together to understand the document and answer questions more accurately.

Why does it matter?

This work matters because it can lead to AI systems that are better at understanding complex documents, which is useful for things like customer service, information retrieval, and automated document processing.

Abstract

Document Question Answering (DocQA) is a very common task. Existing methods that combine Large Language Models (LLMs) or Large Vision Language Models (LVLMs) with Retrieval Augmented Generation (RAG) often prioritize information from a single modality, failing to effectively integrate textual and visual cues. These approaches struggle with complex multi-modal reasoning, which limits their performance on real-world documents. We present MDocAgent (A Multi-Modal Multi-Agent Framework for Document Understanding), a novel RAG and multi-agent framework that leverages both text and images. Our system employs five specialized agents: a general agent, a critical agent, a text agent, an image agent, and a summarizing agent. These agents engage in multi-modal context retrieval, combining their individual insights to achieve a more comprehensive understanding of the document's content. This collaborative approach enables the system to synthesize information from both textual and visual components, leading to improved accuracy in question answering. Preliminary experiments on five benchmarks, including MMLongBench and LongDocURL, demonstrate the effectiveness of MDocAgent, which achieves an average improvement of 12.1% over the current state-of-the-art method. This work contributes to the development of more robust and comprehensive DocQA systems capable of handling the complexities of real-world documents containing rich textual and visual information. Our data and code are available at https://github.com/aiming-lab/MDocAgent.
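
To make the five-agent idea concrete, here is a minimal Python sketch of how such a pipeline could be wired together. It is not the authors' implementation. The `Page` type, the word-overlap `retrieve` function, the stub agents, and in particular the order in which the agents are called are all assumptions made for illustration; the abstract only states that five agents share retrieved text and image context and combine their insights. A real system would replace the stubs with LLM or LVLM calls and the toy retriever with proper text and image retrievers.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Page:
    text: str           # extracted text of the page
    image_caption: str  # stand-in for the page image (a real system would use pixels)


# Hypothetical agent signature: (question, shared context) -> agent output.
Agent = Callable[[str, Dict[str, object]], str]

AGENT_NAMES = ["general", "critical", "text", "image", "summarizing"]


def retrieve(question: str, candidates: List[str], top_k: int = 2) -> List[str]:
    """Toy retriever: rank candidates by word overlap with the question."""
    q_words = set(question.lower().split())
    ranked = sorted(
        candidates,
        key=lambda c: len(q_words & set(c.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


def make_stub(name: str) -> Agent:
    """Build a placeholder agent that only reports how much context it received."""
    def agent(question: str, ctx: Dict[str, object]) -> str:
        return f"[{name}] saw {len(ctx['text'])} text and {len(ctx['images'])} image snippets"
    return agent


def run_pipeline(question: str, pages: List[Page], agents: Dict[str, Agent]) -> Dict[str, str]:
    # Retrieve context separately for each modality.
    ctx: Dict[str, object] = {
        "text": retrieve(question, [p.text for p in pages]),
        "images": retrieve(question, [p.image_caption for p in pages]),
    }
    outputs: Dict[str, str] = {}

    # Assumed ordering: draft answer, then key cues, then per-modality
    # answers, then a final summary that sees all earlier outputs.
    outputs["general"] = agents["general"](question, ctx)
    ctx["draft"] = outputs["general"]
    outputs["critical"] = agents["critical"](question, ctx)
    ctx["cues"] = outputs["critical"]
    outputs["text"] = agents["text"](question, ctx)
    outputs["image"] = agents["image"](question, ctx)
    ctx["partials"] = [outputs[k] for k in ("general", "text", "image")]
    outputs["summarizing"] = agents["summarizing"](question, ctx)
    return outputs


pages = [
    Page("Revenue grew in 2023", "bar chart of revenue by year"),
    Page("Team overview", "photo of the office"),
    Page("Costs fell slightly", "line chart of costs"),
]
agents = {name: make_stub(name) for name in AGENT_NAMES}
result = run_pipeline("How did revenue change by year?", pages, agents)
for name, output in result.items():
    print(name, "->", output)
```

Keeping each agent behind the same callable signature means a stub can be swapped for a model-backed agent without touching `run_pipeline`, which is one plausible way to keep the text and image paths separate until the final summarizing step.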