Memory-Efficient Visual Autoregressive Modeling with Scale-Aware KV Cache Compression

Kunjun Li, Zigeng Chen, Cheng-Yen Yang, Jenq-Neng Hwang

2025-05-27

Summary

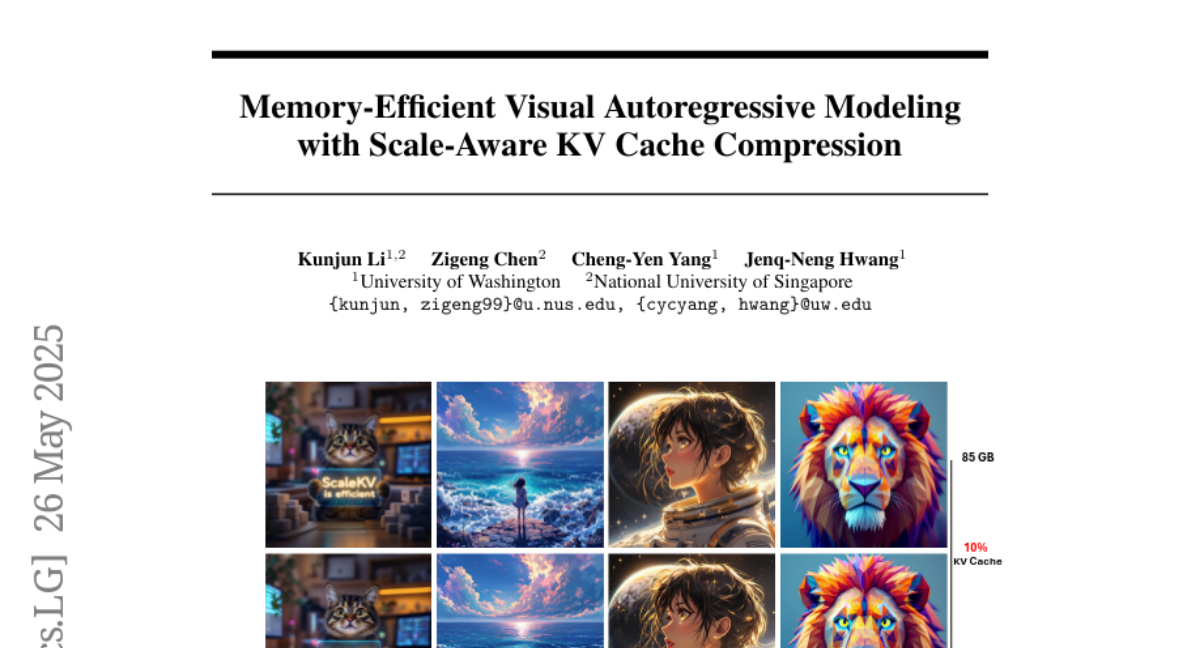

This paper introduces ScaleKV, a method that lets visual autoregressive models generate images using less memory. It works by compressing the KV cache, the part of the model that stores information from earlier steps as an image is created, which makes generation less demanding on computer resources while maintaining high image fidelity.

What's the problem?

Visual autoregressive models generate an image step by step, and at each stage they must keep information from the earlier stages in memory. This stored information grows large as generation proceeds, which makes the models hard to run on ordinary computers or to use for large projects.

What's the solution?

The authors introduce ScaleKV, which compresses the model's KV cache, the memory that holds this stored information. It distinguishes two kinds of transformer layers, called drafters and refiners, and handles the cache for each kind differently. This targeted compression lets the model use much less memory while still producing high-quality images.

Why does it matter?

This matters because it lets powerful image-generating AI run on devices with less memory, making the technology practical for more people and applications. It can help artists, designers, and anyone else who wants to create images with AI, even without access to high-end hardware.

Abstract

ScaleKV compresses the KV cache in Visual Autoregressive models by differentiating drafters and refiners across transformer layers, reducing memory consumption while maintaining high fidelity.
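
To make the idea of layer-dependent cache compression concrete, here is a minimal sketch in Python. It is not the authors' implementation: the function names, the random importance scores, the budget values, and the choice to give drafter layers the larger budget are all assumptions made for illustration. It only shows the general pattern of keeping a different number of cached tokens per layer depending on whether the layer is labeled a drafter or a refiner.

```python
import numpy as np

def compress_kv(keys, values, scores, budget):
    """Keep the `budget` cached tokens with the highest importance scores."""
    n = len(scores)
    if budget >= n:
        return keys, values
    # Indices of the top-`budget` scores, re-sorted to preserve token order.
    keep = np.sort(np.argsort(scores)[n - budget:])
    return keys[keep], values[keep]

def compress_by_layer(cache, layer_kinds, budgets):
    """Apply a per-kind budget to each layer's (keys, values, scores) tuple."""
    compressed = []
    for (keys, values, scores), kind in zip(cache, layer_kinds):
        compressed.append(compress_kv(keys, values, scores, budgets[kind]))
    return compressed

# Hypothetical setup: 4 layers, 16 cached tokens each, 4-dimensional heads.
rng = np.random.default_rng(0)
num_tokens, dim = 16, 4
layer_kinds = ["drafter", "refiner", "refiner", "drafter"]  # assumed labels
cache = [
    (rng.normal(size=(num_tokens, dim)),   # keys
     rng.normal(size=(num_tokens, dim)),   # values
     rng.random(num_tokens))               # stand-in importance scores
    for _ in layer_kinds
]
budgets = {"drafter": 12, "refiner": 4}    # arbitrary illustrative budgets

compressed = compress_by_layer(cache, layer_kinds, budgets)
sizes = [k.shape[0] for k, _ in compressed]
print(sizes)  # [12, 4, 4, 12]
```

In this toy setup the cache shrinks from 64 stored tokens to 32 across the four layers. How layers are actually classified and how tokens are scored is specific to the paper and is not reproduced here.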