MergeVQ: A Unified Framework for Visual Generation and Representation with Disentangled Token Merging and Quantization

Siyuan Li, Luyuan Zhang, Zedong Wang, Juanxi Tian, Cheng Tan, Zicheng Liu, Chang Yu, Qingsong Xie, Haonan Lu, Haoqian Wang, Zhen Lei

2025-04-03

Summary

This paper is about creating a better way to generate and understand images using AI, by combining different techniques into one system.

What's the problem?

It's hard to create AI models that are both good at generating realistic images and understanding what's in them, while also being efficient.

What's the solution?

The researchers developed MergeVQ, which combines different AI techniques to achieve both high-quality image generation and good image understanding, while also being faster and more efficient.

Why it matters?

This work matters because it can lead to more powerful AI systems that can create and understand images in a more efficient way.

Abstract

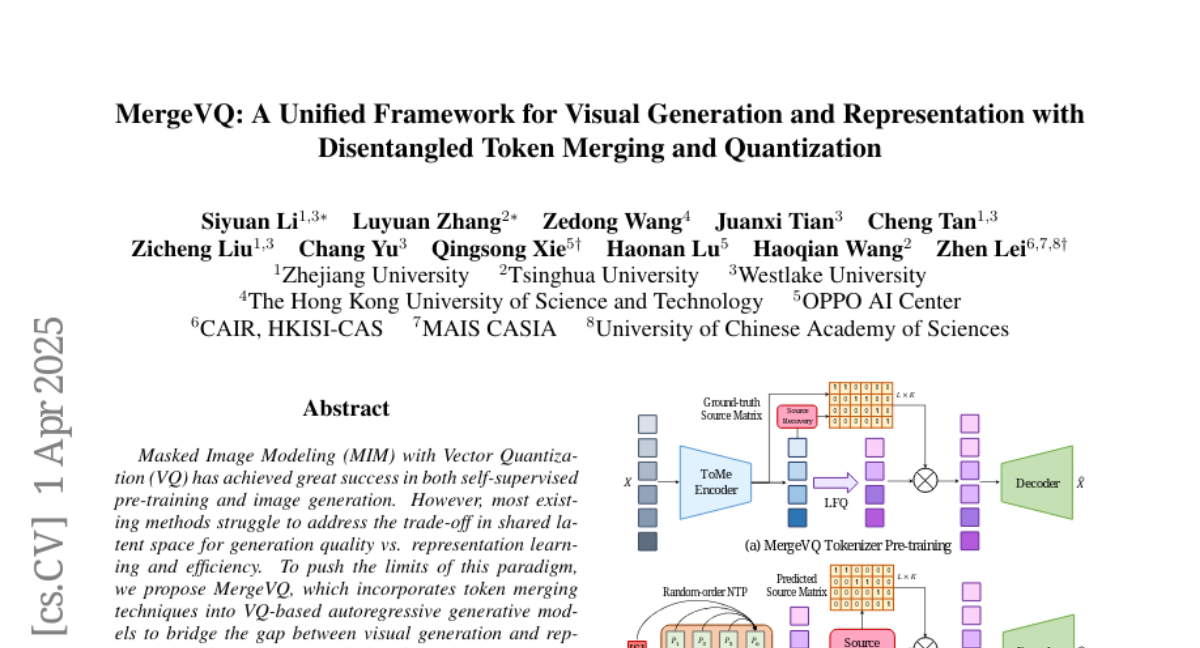

Masked Image Modeling (MIM) with Vector Quantization (VQ) has achieved great success in both self-supervised pre-training and image generation. However, most existing methods struggle to address the trade-off in shared latent space for generation quality vs. representation learning and efficiency. To push the limits of this paradigm, we propose MergeVQ, which incorporates token merging techniques into VQ-based generative models to bridge the gap between image generation and visual representation learning in a unified architecture. During pre-training, MergeVQ decouples top-k semantics from latent space with the token merge module after self-attention blocks in the encoder for subsequent Look-up Free Quantization (LFQ) and global alignment and recovers their fine-grained details through cross-attention in the decoder for reconstruction. As for the second-stage generation, we introduce MergeAR, which performs KV Cache compression for efficient raster-order prediction. Extensive experiments on ImageNet verify that MergeVQ as an AR generative model achieves competitive performance in both visual representation learning and image generation tasks while maintaining favorable token efficiency and inference speed. The code and model will be available at https://apexgen-x.github.io/MergeVQ.