MetaFaith: Faithful Natural Language Uncertainty Expression in LLMs

Gabrielle Kaili-May Liu, Gal Yona, Avi Caciularu, Idan Szpektor, Tim G. J. Rudner, Arman Cohan

2025-06-02

Summary

This paper introduces MetaFaith, a prompt-based method that helps large language models (LLMs), such as the ones behind chatbots, express how sure or unsure they are about their answers more accurately and honestly.

What's the problem?

These AI models often sound very confident even when they are not actually sure about something. This can mislead people and cause them to trust the answers too much.

What's the solution?

The researchers introduced MetaFaith, which uses specially designed prompts to encourage the model to express uncertainty that matches how sure it actually is. This approach helps the model give more realistic and trustworthy signals about how much it actually knows.
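
As a rough illustration of what a prompt-based approach can look like in practice, the sketch below prepends an uncertainty instruction to a question in a chat-style message list. The instruction text, the `build_messages` helper, and the message format are all assumptions made for illustration; they are not the prompts or code used in the paper.

```python
# Hypothetical instruction text, written for this example only.
# It is NOT the prompt used by MetaFaith.
UNCERTAINTY_INSTRUCTION = (
    "Before answering, consider how confident you actually are. "
    "Use hedging language (for example 'I think' or 'I'm not sure') in "
    "proportion to your uncertainty, and state answers plainly only when "
    "you are confident."
)


def build_messages(question: str,
                   instruction: str = UNCERTAINTY_INSTRUCTION) -> list[dict[str, str]]:
    """Return a chat-style message list with the instruction as the system message."""
    return [
        {"role": "system", "content": instruction},
        {"role": "user", "content": question},
    ]


# Example: the resulting list can be passed to any chat-style LLM client.
messages = build_messages("Which river is longer, the Nile or the Amazon?")
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The point of the sketch is only that the model itself is left unchanged: the behavior is steered entirely through the text placed in front of the user's question.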

Why does it matter?

This is important because it can make AI systems safer and more reliable by helping people understand when they should double-check information rather than take the AI's answer at face value.

Abstract

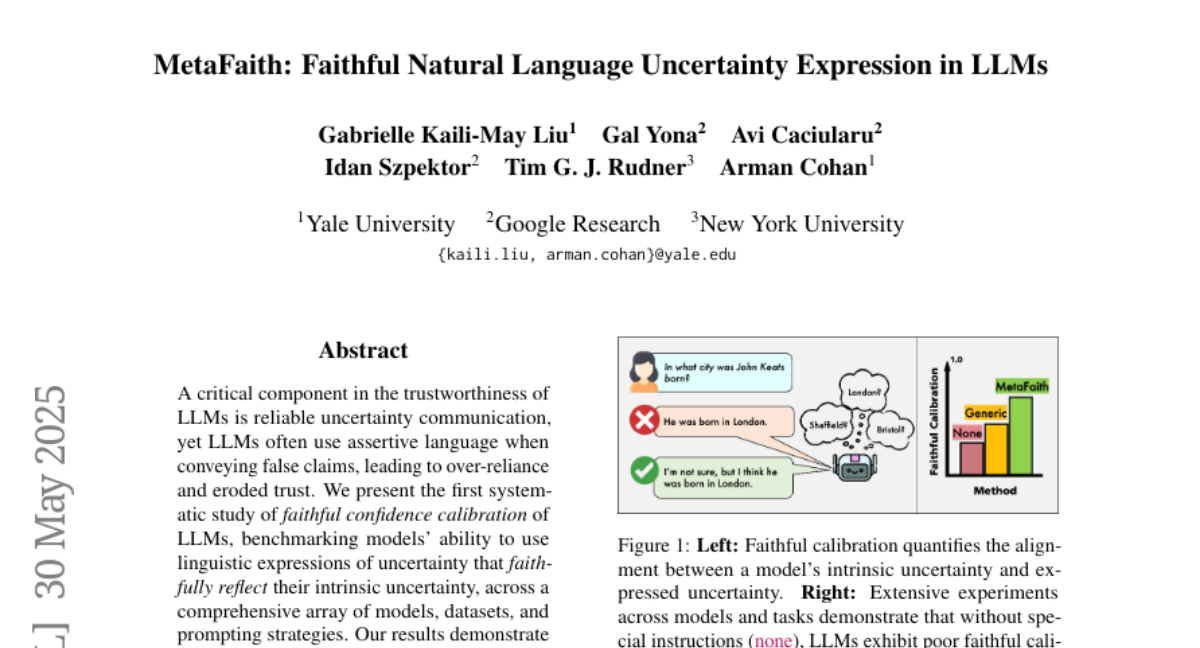

A study reveals that Large Language Models (LLMs) struggle with expressing uncertainty accurately and introduces MetaFaith, a prompt-based method that enhances their calibration significantly.