Mind2Web 2: Evaluating Agentic Search with Agent-as-a-Judge

Boyu Gou, Zanming Huang, Yuting Ning, Yu Gu, Michael Lin, Weijian Qi, Andrei Kopanev, Botao Yu, Bernal Jiménez Gutiérrez, Yiheng Shu, Chan Hee Song, Jiaman Wu, Shijie Chen, Hanane Nour Moussa, Tianshu Zhang, Jian Xie, Yifei Li, Tianci Xue, Zeyi Liao, Kai Zhang, Boyuan Zheng, Zhaowei Cai

2025-06-27

Summary

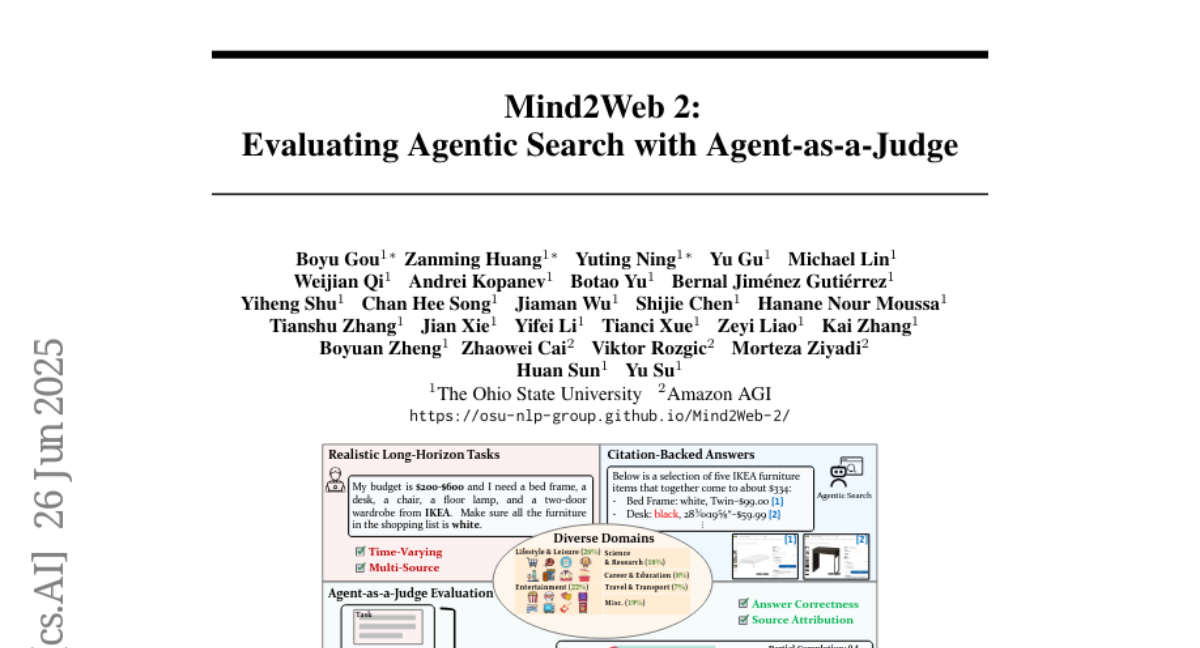

This paper talks about Mind2Web 2, a new way to test and measure how well AI systems that search the internet on their own can handle complex, long tasks by browsing, gathering, and combining information.

What's the problem?

The problem is that current testing methods for AI search systems don’t work well for big, realistic tasks that require looking through lots of changing information over time, and it’s hard to tell if the AI’s answers are really correct and if they come from reliable sources.

What's the solution?

The researchers created Mind2Web 2, a big set of 130 detailed tasks that mimic real search challenges, and designed a special evaluation system called Agent-as-a-Judge, where AI judges automatically check both the accuracy of the answers and whether the AI properly credits the sources, using a careful step-by-step scoring system.

Why it matters?

This matters because it helps build better and more trustworthy AI search systems by making sure they can handle difficult, real-world questions and give accurate, verifiable answers, improving how people use AI to find information.

Abstract

Mind2Web 2 benchmark evaluates agentic search systems with a suite of realistic, long-horizon tasks, introducing an Agent-as-a-Judge framework to assess accuracy and source attribution.