MixerMDM: Learnable Composition of Human Motion Diffusion Models

Pablo Ruiz-Ponce, German Barquero, Cristina Palmero, Sergio Escalera, José García-Rodríguez

2025-04-02

Summary

This paper introduces MixerMDM, a learnable method for combining pre-trained AI models that generate human motion from text descriptions. The goal is finer control over each person's movement and over the interaction between people.

What's the problem?

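Training a text-to-motion model requires datasets that pair high-quality motion with matching descriptions, and such data gets harder to obtain as the desired control becomes finer. Earlier work sidestepped this by merging several pre-trained motion models, but the merging strategies did not account for the particular models being combined or the specific text prompts.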

What's the solution?

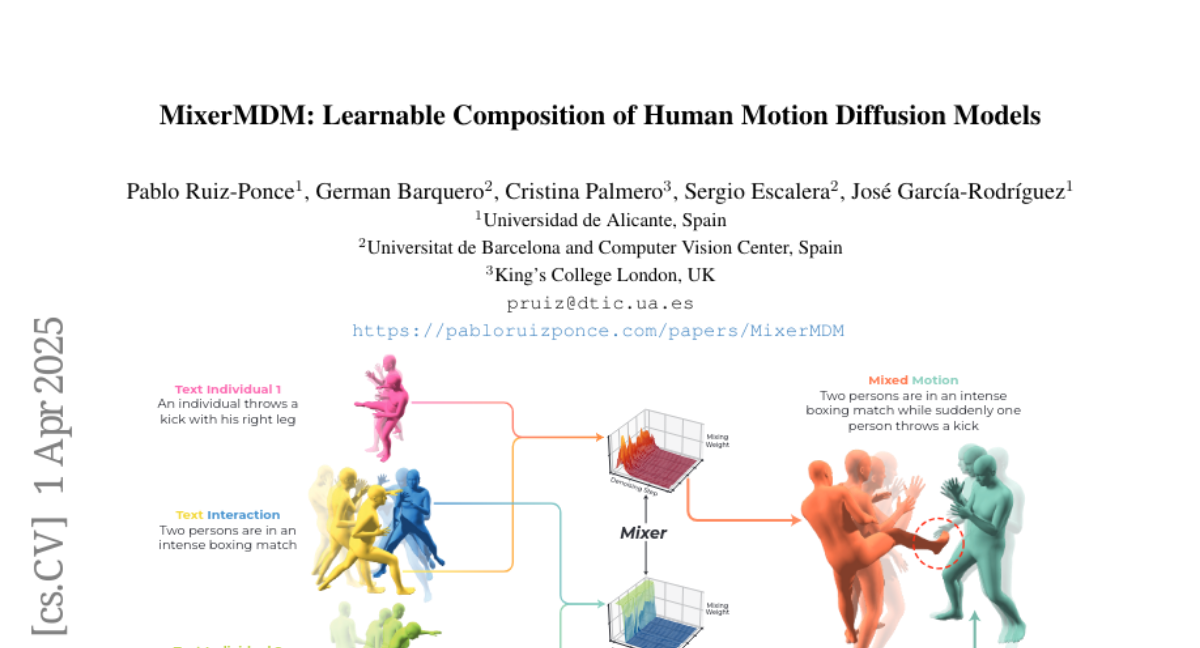

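The researchers developed MixerMDM, which combines pre-trained motion diffusion models, such as one trained on single-person motion and one trained on multi-person interaction. Rather than following a fixed rule, it is trained adversarially to decide how to combine the models' denoising processes depending on the text conditions, so users can control each person's motion as well as the overall interaction.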

Why does it matter?

This work matters because it can lead to more realistic and controllable AI-generated animations, which could be useful in fields like gaming and virtual reality.

Abstract

Generating human motion guided by conditions such as textual descriptions is challenging due to the need for datasets with pairs of high-quality motion and their corresponding conditions. The difficulty increases when aiming for finer control in the generation. To that end, prior works have proposed to combine several motion diffusion models pre-trained on datasets with different types of conditions, thus allowing control with multiple conditions. However, the proposed merging strategies overlook that the optimal way to combine the generation processes might depend on the particularities of each pre-trained generative model and also the specific textual descriptions. In this context, we introduce MixerMDM, the first learnable model composition technique for combining pre-trained text-conditioned human motion diffusion models. Unlike previous approaches, MixerMDM provides a dynamic mixing strategy that is trained in an adversarial fashion to learn to combine the denoising process of each model depending on the set of conditions driving the generation. By using MixerMDM to combine single- and multi-person motion diffusion models, we achieve fine-grained control on the dynamics of every person individually, and also on the overall interaction. Furthermore, we propose a new evaluation technique that, for the first time in this task, measures the interaction and individual quality by computing the alignment between the mixed generated motions and their conditions as well as the capabilities of MixerMDM to adapt the mixing throughout the denoising process depending on the motions to mix.
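The core idea in the abstract, blending the denoising outputs of two pre-trained models with weights that can change during generation, can be illustrated with a minimal sketch. Everything below is an assumption made for illustration: the names `mix_predictions` and `toy_mixer` are hypothetical, the per-frame weight granularity is a guess, and the linear schedule in `toy_mixer` is only a stand-in for the adversarially trained mixer, whose architecture the abstract does not describe.

```python
from typing import List, Sequence

# A motion is a list of frames; each frame is a list of pose features.
Motion = List[List[float]]


def mix_predictions(pred_a: Motion, pred_b: Motion,
                    weights: Sequence[float]) -> Motion:
    """Blend two denoised-motion predictions frame by frame.

    weights[i] in [0, 1] is the share given to pred_a at frame i;
    pred_b receives the remaining 1 - weights[i].
    """
    if not (len(pred_a) == len(pred_b) == len(weights)):
        raise ValueError("predictions and weights must cover the same frames")
    mixed: Motion = []
    for frame_a, frame_b, w in zip(pred_a, pred_b, weights):
        if not 0.0 <= w <= 1.0:
            raise ValueError("each weight must lie in [0, 1]")
        if len(frame_a) != len(frame_b):
            raise ValueError("frames must have the same number of features")
        mixed.append([w * a + (1.0 - w) * b for a, b in zip(frame_a, frame_b)])
    return mixed


def toy_mixer(step: int, total_steps: int, num_frames: int) -> List[float]:
    """Hypothetical stand-in for a learned mixer.

    Returns the same weight for every frame, growing linearly from 0 at the
    first denoising step to 1 at the last. A learned mixer would instead
    predict weights from the conditions and the motions being mixed.
    """
    w = step / max(total_steps - 1, 1)
    return [w] * num_frames


if __name__ == "__main__":
    # Placeholder outputs of an interaction model and an individual model
    # at one denoising step (2 frames, 2 features each).
    interaction_pred = [[1.0, 2.0], [1.0, 2.0]]
    individual_pred = [[3.0, 4.0], [3.0, 4.0]]
    for step in range(3):
        weights = toy_mixer(step, total_steps=3, num_frames=2)
        print(step, mix_predictions(interaction_pred, individual_pred, weights))
```

In this sketch the weights depend only on the denoising step. The approach described in the abstract differs in that the mixing is learned and depends on the set of conditions driving the generation.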