MM-Ego: Towards Building Egocentric Multimodal LLMs

Hanrong Ye, Haotian Zhang, Erik Daxberger, Lin Chen, Zongyu Lin, Yanghao Li, Bowen Zhang, Haoxuan You, Dan Xu, Zhe Gan, Jiasen Lu, Yinfei Yang

2024-10-10

Summary

This paper discusses MM-Ego, a new model designed to understand videos from a first-person perspective, known as egocentric video understanding, by integrating multiple types of data.

What's the problem?

There is a lack of quality data and effective methods for teaching models how to interpret egocentric videos, which are recorded from a person's viewpoint. This makes it difficult for AI systems to recognize and remember important visual details in these videos.

What's the solution?

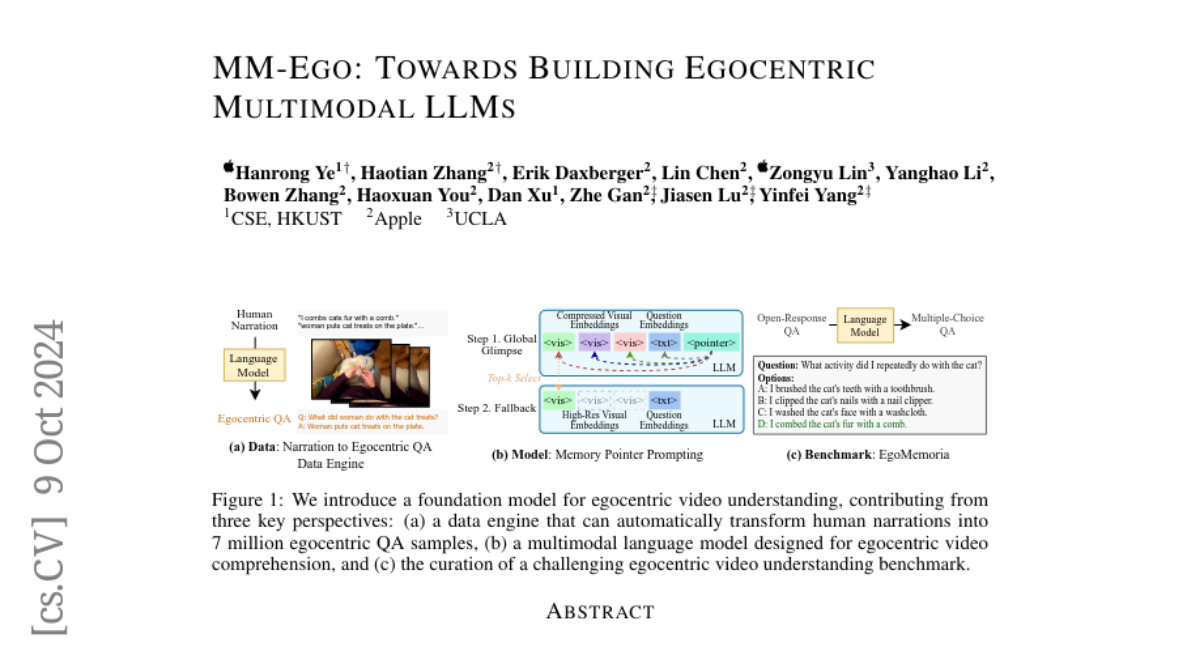

To address this, the researchers created a large dataset of 7 million question-and-answer pairs specifically for egocentric videos. They also developed a benchmark with 629 videos and over 7,000 questions to test the model's ability to recall visual information. Additionally, they introduced a new model architecture that uses a technique called 'Memory Pointer Prompting,' which helps the model focus on important parts of the video while generating responses. This approach allows the model to better understand long videos by first getting an overview and then zooming in on key details.

Why it matters?

This research is significant because it enhances how AI can learn from and understand videos taken from a first-person perspective. By improving the ability of models to analyze egocentric videos, MM-Ego could be applied in various fields such as virtual reality, surveillance, and personal video analysis, making AI more effective in real-world applications.

Abstract

This research aims to comprehensively explore building a multimodal foundation model for egocentric video understanding. To achieve this goal, we work on three fronts. First, as there is a lack of QA data for egocentric video understanding, we develop a data engine that efficiently generates 7M high-quality QA samples for egocentric videos ranging from 30 seconds to one hour long, based on human-annotated data. This is currently the largest egocentric QA dataset. Second, we contribute a challenging egocentric QA benchmark with 629 videos and 7,026 questions to evaluate the models' ability in recognizing and memorizing visual details across videos of varying lengths. We introduce a new de-biasing evaluation method to help mitigate the unavoidable language bias present in the models being evaluated. Third, we propose a specialized multimodal architecture featuring a novel "Memory Pointer Prompting" mechanism. This design includes a global glimpse step to gain an overarching understanding of the entire video and identify key visual information, followed by a fallback step that utilizes the key visual information to generate responses. This enables the model to more effectively comprehend extended video content. With the data, benchmark, and model, we successfully build MM-Ego, an egocentric multimodal LLM that shows powerful performance on egocentric video understanding.