MM-IFEngine: Towards Multimodal Instruction Following

Shengyuan Ding, Shenxi Wu, Xiangyu Zhao, Yuhang Zang, Haodong Duan, Xiaoyi Dong, Pan Zhang, Yuhang Cao, Dahua Lin, Jiaqi Wang

2025-04-11

Summary

This paper introduces MM-IFEngine, a new system designed to help AI models better follow complex instructions that involve both images and text. It focuses on making sure these models can understand exactly what users want and deliver precise results, even when the tasks are challenging or have strict requirements.

What's the problem?

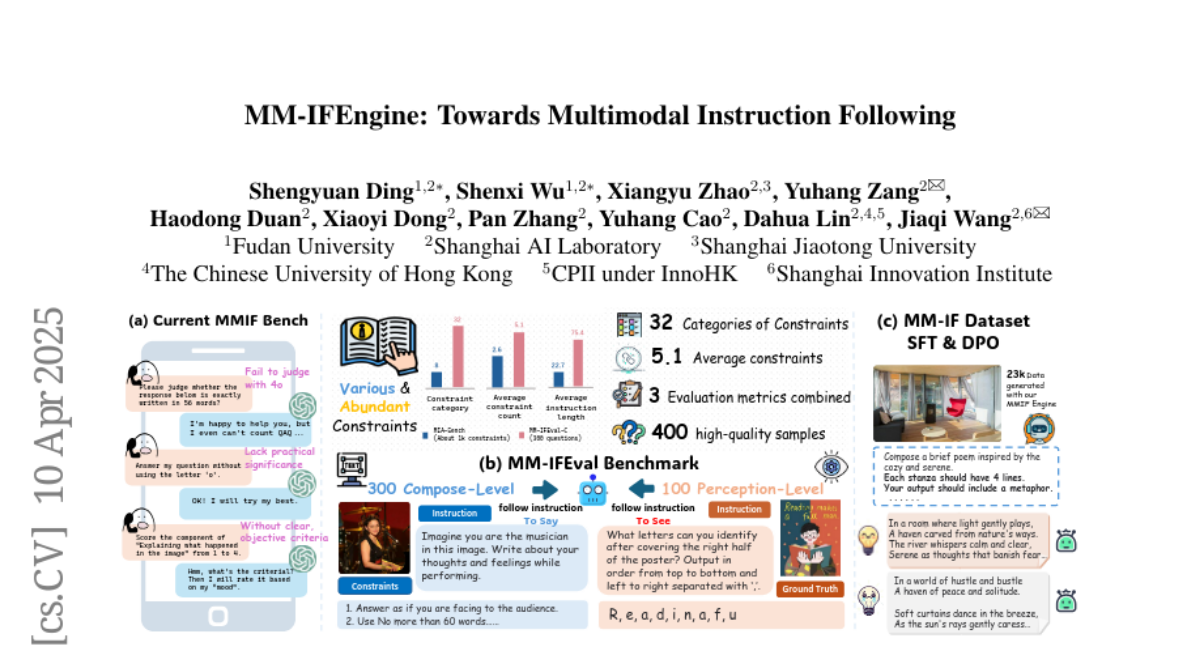

The main problem is that there isn't enough high-quality training data for teaching AI to follow detailed instructions involving multiple types of information, like images and text together. Existing tests for these skills are also too simple and don't do a good job of checking if the AI is really following instructions or meeting all the requirements.

What's the solution?

To fix this, the researchers created MM-IFEngine, which automatically generates a large and diverse set of image-instruction pairs for training. They also built a tougher benchmark called MM-IFEval to test how well AI models follow instructions, including both the details of what the response should look like and how well it understands the images. By training AI models with this new data and testing them with these harder benchmarks, the models got much better at following instructions accurately.

Why it matters?

This work is important because it helps make AI systems more reliable and useful when dealing with tasks that mix text and images, which is common in real life. With better training data and more challenging tests, AI can become better at understanding what people want, leading to smarter and more trustworthy technology for things like education, design, and customer support.

Abstract

The Instruction Following (IF) ability measures how well Multi-modal Large Language Models (MLLMs) understand exactly what users are telling them and whether they are doing it right. Existing multimodal instruction following training data is scarce, the benchmarks are simple with atomic instructions, and the evaluation strategies are imprecise for tasks demanding exact output constraints. To address this, we present MM-IFEngine, an effective pipeline to generate high-quality image-instruction pairs. Our MM-IFEngine pipeline yields large-scale, diverse, and high-quality training data MM-IFInstruct-23k, which is suitable for Supervised Fine-Tuning (SFT) and extended as MM-IFDPO-23k for Direct Preference Optimization (DPO). We further introduce MM-IFEval, a challenging and diverse multi-modal instruction-following benchmark that includes (1) both compose-level constraints for output responses and perception-level constraints tied to the input images, and (2) a comprehensive evaluation pipeline incorporating both rule-based assessment and judge model. We conduct SFT and DPO experiments and demonstrate that fine-tuning MLLMs on MM-IFInstruct-23k and MM-IFDPO-23k achieves notable gains on various IF benchmarks, such as MM-IFEval (+10.2%), MIA (+7.6%), and IFEval (+12.3%). The full data and evaluation code will be released on https://github.com/SYuan03/MM-IFEngine.