MME-Unify: A Comprehensive Benchmark for Unified Multimodal Understanding and Generation Models

Wulin Xie, Yi-Fan Zhang, Chaoyou Fu, Yang Shi, Bingyan Nie, Hongkai Chen, Zhang Zhang, Liang Wang, Tieniu Tan

2025-04-07

Summary

This paper talks about MME-Unify, a test to check how well AI models handle tasks that mix understanding and creating images/text, like solving geometry problems by drawing lines or editing photos based on instructions.

What's the problem?

Current tests don’t measure if AI can combine skills like understanding and creating content, and they compare different AI models unfairly because there’s no standard test.

What's the solution?

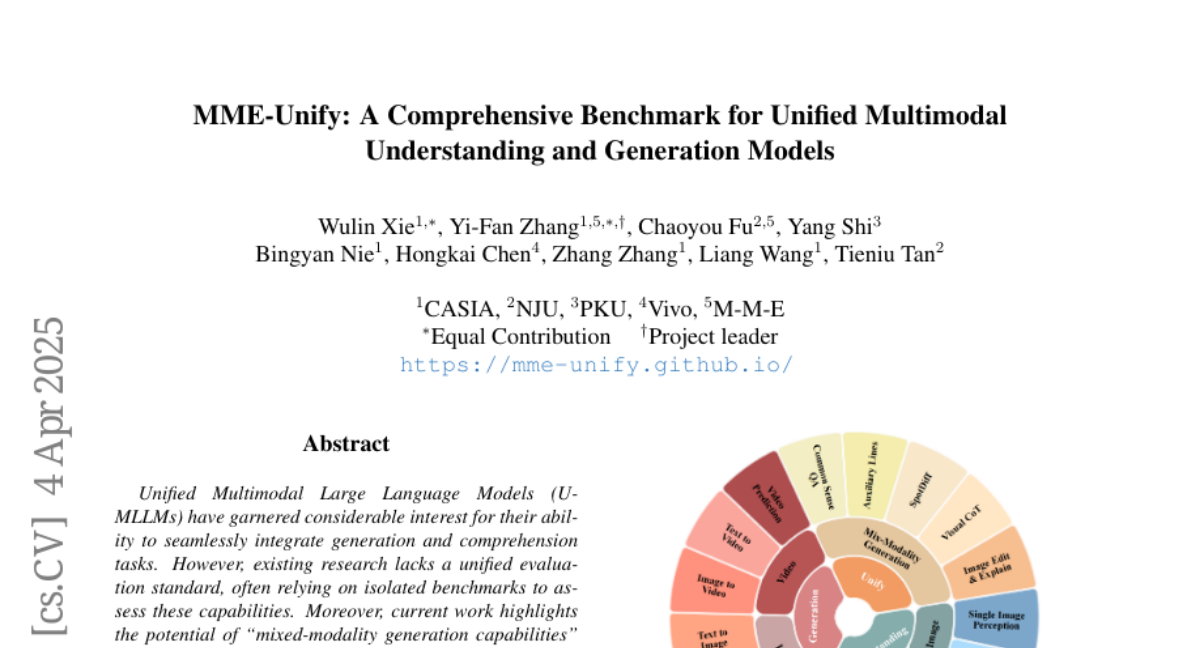

They made a new test with 30 mini-tasks from 12 existing challenges, plus 5 new ones that mix skills (like generating images while solving riddles), and tested top AI models to see where they struggle.

Why it matters?

This helps improve AI for real-world jobs like tutoring or design, where mixing skills is key, and shows which models need work to avoid mistakes in apps like photo editing or homework help.

Abstract

Existing MLLM benchmarks face significant challenges in evaluating Unified MLLMs (U-MLLMs) due to: 1) lack of standardized benchmarks for traditional tasks, leading to inconsistent comparisons; 2) absence of benchmarks for mixed-modality generation, which fails to assess multimodal reasoning capabilities. We present a comprehensive evaluation framework designed to systematically assess U-MLLMs. Our benchmark includes: Standardized Traditional Task Evaluation. We sample from 12 datasets, covering 10 tasks with 30 subtasks, ensuring consistent and fair comparisons across studies." 2. Unified Task Assessment. We introduce five novel tasks testing multimodal reasoning, including image editing, commonsense QA with image generation, and geometric reasoning. 3. Comprehensive Model Benchmarking. We evaluate 12 leading U-MLLMs, such as Janus-Pro, EMU3, VILA-U, and Gemini2-flash, alongside specialized understanding (e.g., Claude-3.5-Sonnet) and generation models (e.g., DALL-E-3). Our findings reveal substantial performance gaps in existing U-MLLMs, highlighting the need for more robust models capable of handling mixed-modality tasks effectively. The code and evaluation data can be found in https://mme-unify.github.io/.