MMHU: A Massive-Scale Multimodal Benchmark for Human Behavior Understanding

Renjie Li, Ruijie Ye, Mingyang Wu, Hao Frank Yang, Zhiwen Fan, Hezhen Hu, Zhengzhong Tu

2025-07-17

Summary

This paper introduces MMHU, a large-scale benchmark for evaluating how well AI models understand human behavior, particularly in autonomous driving scenarios, using data drawn from many different sources.

What's the problem?

Teaching AI to recognize and predict human actions, such as how people move or behave around cars, requires large amounts of detailed and varied data. Previous studies have lacked such data or covered it only partially.

What's the solution?

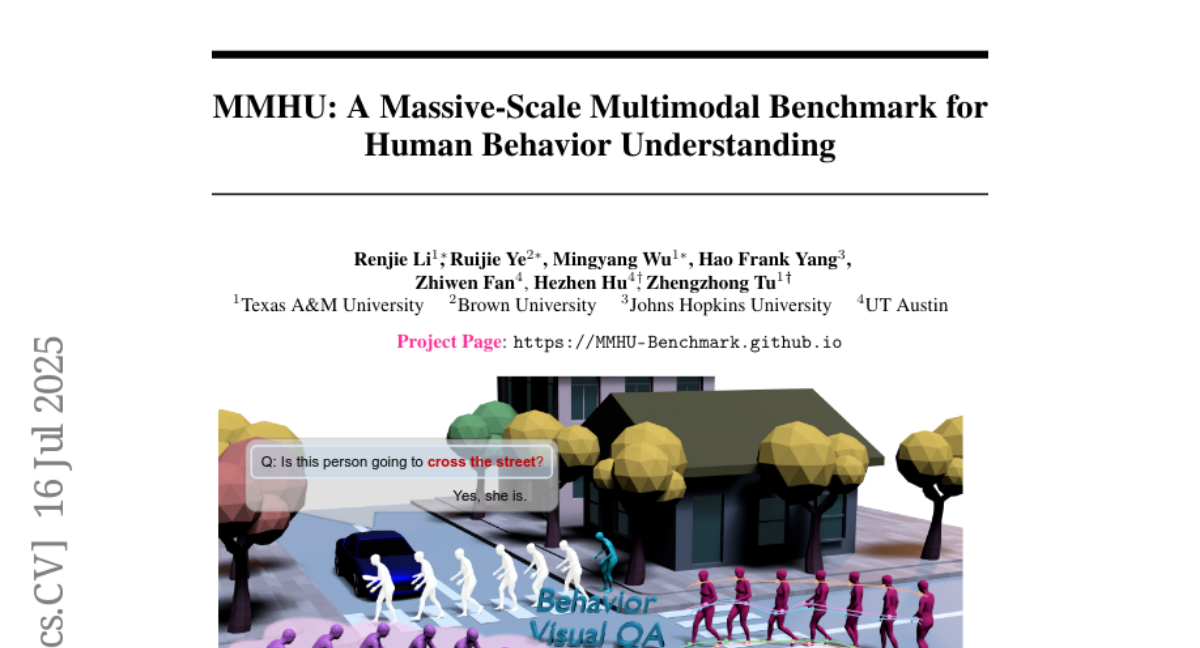

The authors built MMHU by collecting and annotating a massive amount of data from varied sources, including videos and sensor information, to cover many aspects of human behavior. The benchmark includes tasks such as predicting where people will move and answering questions about human behavior, which gives a comprehensive way to test AI models.

Why does it matter?

A better understanding of human behavior is critical for making autonomous cars safer and more trustworthy, and it helps AI systems make smarter decisions when interacting with people on the road.

Abstract

A large-scale benchmark, MMHU, is proposed for human behavior analysis in autonomous driving, featuring rich annotations and diverse data sources, and benchmarking various tasks including motion prediction and behavior question answering.