MMIG-Bench: Towards Comprehensive and Explainable Evaluation of Multi-Modal Image Generation Models

Hang Hua, Ziyun Zeng, Yizhi Song, Yunlong Tang, Liu He, Daniel Aliaga, Wei Xiong, Jiebo Luo

2025-05-27

Summary

This paper talks about MMIG-Bench, a new tool that helps researchers test and understand how well AI models can create images based on both text descriptions and example pictures.

What's the problem?

The problem is that it's hard to fairly judge how good these multi-modal image generation models really are, since they need to be checked on many different levels, like how well they match the text, how realistic the images look, and how closely they follow the example images. Without a detailed and organized way to measure these things, it's tough to compare models or see where they need improvement.

What's the solution?

The authors developed MMIG-Bench, a benchmark that uses a variety of tests and measurements to evaluate these models from different angles, including basic image details, overall image quality, and how well the generated images match the given text and reference images. This makes it much easier to see exactly how each model performs and why.

Why it matters?

This is important because it gives researchers and developers a clear and thorough way to judge and improve AI image generators, which can lead to better, more creative, and more reliable tools for art, design, and many other uses.

Abstract

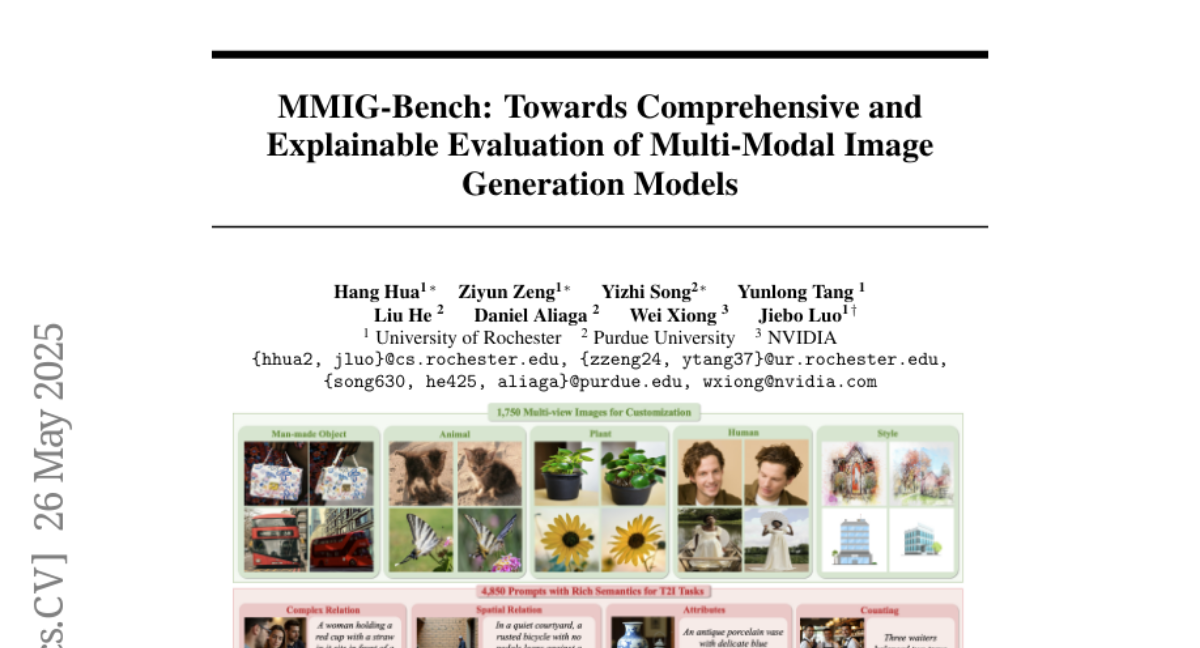

A comprehensive benchmark, MMIG-Bench, evaluates multi-modal image generators using text prompts and reference images, providing detailed insights through low-level, mid-level, and high-level metrics.