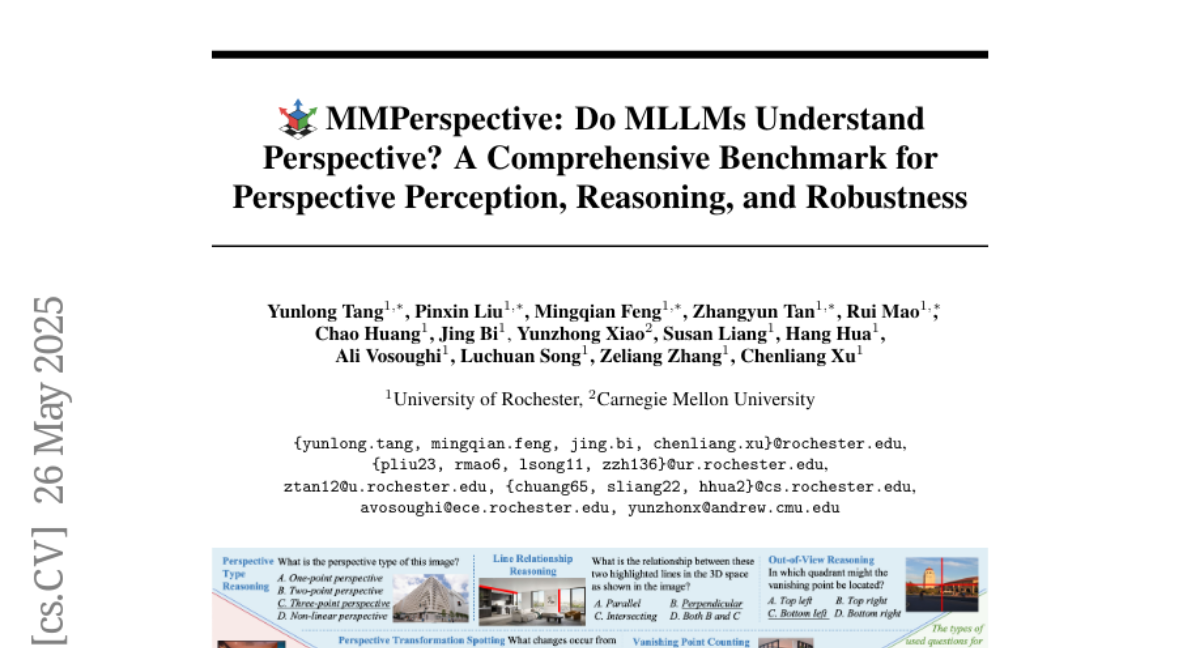

MMPerspective: Do MLLMs Understand Perspective? A Comprehensive Benchmark for Perspective Perception, Reasoning, and Robustness

Yunlong Tang, Pinxin Liu, Mingqian Feng, Zhangyun Tan, Rui Mao, Chao Huang, Jing Bi, Yunzhong Xiao, Susan Liang, Hang Hua, Ali Vosoughi, Luchuan Song, Zeliang Zhang, Chenliang Xu

2025-05-28

Summary

This paper introduces MMPerspective, a benchmark that tests how well multimodal large language models (MLLMs) perceive and reason about perspective geometry in images.

What's the problem?

Multimodal large language models handle many tasks well, but they often struggle to understand how a scene looks from a given viewpoint or how objects relate to each other in space. Both abilities are central to understanding images and geometry.

What's the solution?

To address this, the researchers created MMPerspective, which tests models on three things: perceiving perspective cues, reasoning about perspective and spatial relationships, and staying robust. The results also show that chain-of-thought prompting, which asks a model to reason step by step before answering, can help these models do better.
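
The difference between a direct prompt and a chain-of-thought prompt can be sketched as below. This is only an illustration: the question, the answer options, and the `build_prompt` helper are hypothetical and are not taken from the benchmark or the paper.

```python
# Hypothetical multiple-choice item; not taken from the MMPerspective benchmark.
QUESTION = "How many vanishing points does the perspective in this image use?"
OPTIONS = ["A. One", "B. Two", "C. Three"]


def build_prompt(question, options, chain_of_thought=False):
    """Return the text part of a prompt for an image question.

    Hypothetical helper: the paper's actual prompt wording is not given here.
    """
    lines = [question, *options]
    if chain_of_thought:
        # Ask for intermediate reasoning before the final answer.
        lines.append(
            "Think step by step about the lines in the image and where they "
            "converge, then give the final answer as a single letter."
        )
    else:
        # Ask for the answer only.
        lines.append("Answer with a single letter.")
    return "\n".join(lines)


direct_prompt = build_prompt(QUESTION, OPTIONS)
cot_prompt = build_prompt(QUESTION, OPTIONS, chain_of_thought=True)
```

Both prompts would be sent to a model together with the same image; only the final instruction differs, so any change in accuracy can be attributed to the request for step-by-step reasoning.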

Why does it matter?

If AI models get better at understanding perspective, they will be more useful for tasks such as analyzing photos, assisting with design, and interpreting real-world scenes.

Abstract

MMPerspective evaluates multimodal large language models' understanding of perspective geometry through tasks involving perception, reasoning, and robustness, revealing limitations in spatial reasoning and the benefits of chain-of-thought prompting.