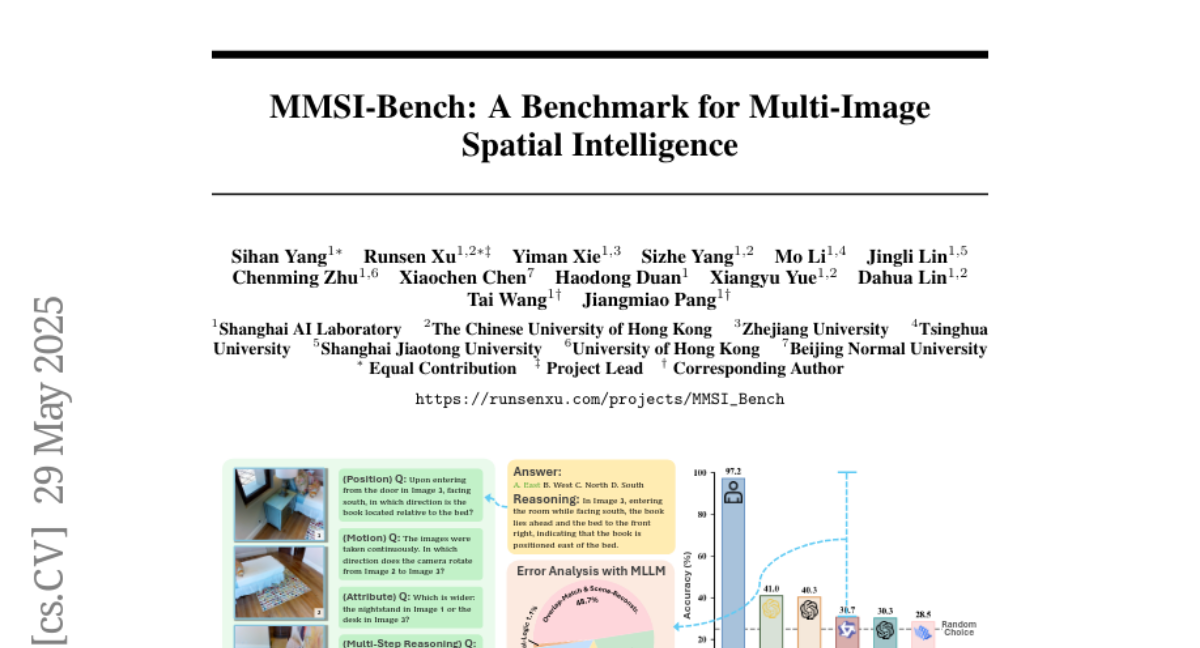

MMSI-Bench: A Benchmark for Multi-Image Spatial Intelligence

Sihan Yang, Runsen Xu, Yiman Xie, Sizhe Yang, Mo Li, Jingli Lin, Chenming Zhu, Xiaochen Chen, Haodong Duan, Xiangyu Yue, Dahua Lin, Tai Wang, Jiangmiao Pang

2025-05-30

Summary

This paper talks about MMSI-Bench, a new test designed to see how well AI models can understand and reason about the relationships between multiple images, especially when it comes to figuring out where things are and how they relate in space.

What's the problem?

The problem is that even though AI models can look at pictures and answer questions about them, they are still much worse than humans at understanding how different images connect or what the spatial relationships are between objects in those images.

What's the solution?

The researchers created MMSI-Bench, a special set of questions and tasks that challenge AI models to use spatial intelligence across several images, and then compared the AI's performance to how well humans do on the same tasks.

Why it matters?

This is important because it shows exactly where AI still falls short when it comes to understanding complex visual information, which can help scientists improve these models so they can be more useful for things like robotics, navigation, or any situation where understanding space and relationships between objects is important.

Abstract

MMSI-Bench, a VQA benchmark for multi-image spatial intelligence, reveals significant gaps in multimodal large language models' performance compared to human accuracy.