Molar: Multimodal LLMs with Collaborative Filtering Alignment for Enhanced Sequential Recommendation

Yucong Luo, Qitao Qin, Hao Zhang, Mingyue Cheng, Ruiran Yan, Kefan Wang, Jie Ouyang

2024-12-27

Summary

This paper talks about Molar, a new system designed to improve how recommendations are made by combining different types of data and collaborative filtering techniques.

What's the problem?

Traditional recommendation systems often struggle to provide accurate suggestions because they mainly rely on text data and ignore other types of information, like images or user behavior patterns. This limitation can lead to less personalized and relevant recommendations, especially in complex scenarios where user preferences change over time.

What's the solution?

To address these issues, the authors developed Molar, a multimodal large language model (MLLM) that integrates various types of content (like text and images) along with user identification information. Molar uses a two-part system: the Multimodal Item Representation Model (MIRM) to create detailed item profiles from different data types, and the Dynamic User Embedding Generator (DUEG) to understand and predict user preferences. Additionally, it employs a post-alignment mechanism to combine insights from both content-based and ID-based models, ensuring that recommendations are more accurate and personalized.

Why it matters?

This research is important because it enhances the effectiveness of recommendation systems, which are crucial for platforms like streaming services, online shopping, and social media. By using a more comprehensive approach that considers multiple data types and user behaviors, Molar can provide better recommendations, helping users discover content that truly interests them.

Abstract

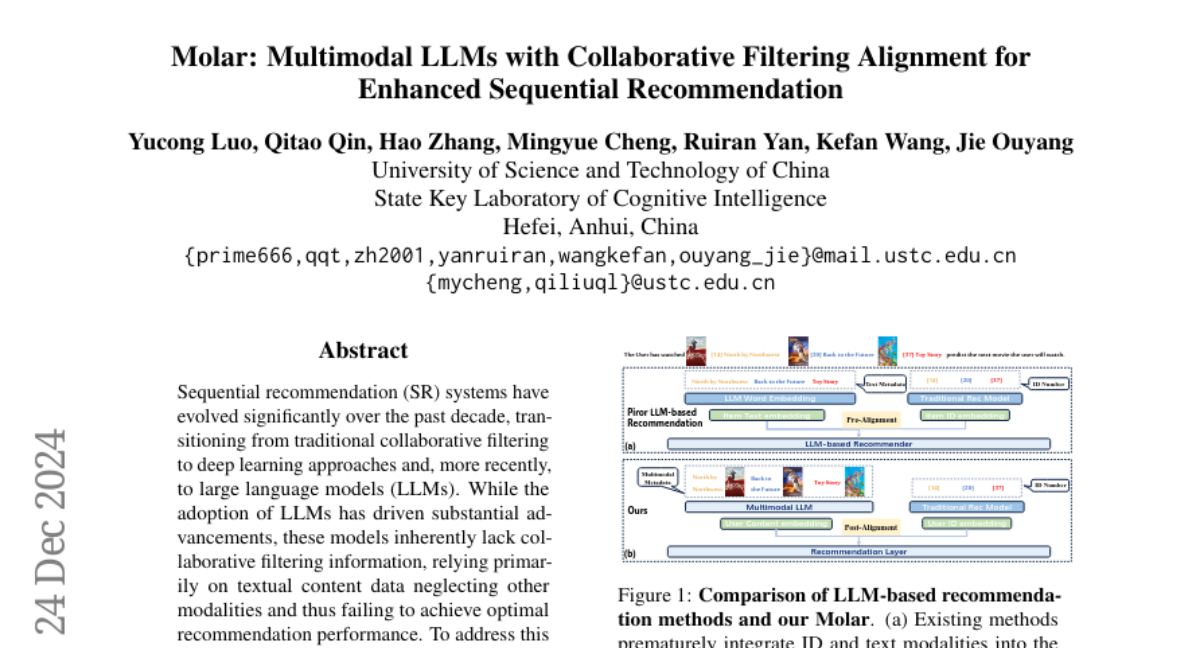

Sequential recommendation (SR) systems have evolved significantly over the past decade, transitioning from traditional collaborative filtering to deep learning approaches and, more recently, to large language models (LLMs). While the adoption of LLMs has driven substantial advancements, these models inherently lack collaborative filtering information, relying primarily on textual content data neglecting other modalities and thus failing to achieve optimal recommendation performance. To address this limitation, we propose Molar, a Multimodal large language sequential recommendation framework that integrates multiple content modalities with ID information to capture collaborative signals effectively. Molar employs an MLLM to generate unified item representations from both textual and non-textual data, facilitating comprehensive multimodal modeling and enriching item embeddings. Additionally, it incorporates collaborative filtering signals through a post-alignment mechanism, which aligns user representations from content-based and ID-based models, ensuring precise personalization and robust performance. By seamlessly combining multimodal content with collaborative filtering insights, Molar captures both user interests and contextual semantics, leading to superior recommendation accuracy. Extensive experiments validate that Molar significantly outperforms traditional and LLM-based baselines, highlighting its strength in utilizing multimodal data and collaborative signals for sequential recommendation tasks. The source code is available at https://anonymous.4open.science/r/Molar-8B06/.