MonoPlace3D: Learning 3D-Aware Object Placement for 3D Monocular Detection

Rishubh Parihar, Srinjay Sarkar, Sarthak Vora, Jogendra Kundu, R. Venkatesh Babu

2025-04-11

Summary

This paper talks about MonoPlace3D, a new system that helps AI models better detect and understand where objects are in 3D from just a single camera image. The focus is on making the placement of objects in training data much more realistic, which is important for teaching the AI to recognize and locate things accurately in real-world scenes.

What's the problem?

The main problem is that most AI models for 3D object detection struggle because there isn't enough variety in real-world training data, and it's especially hard to create fake training data where objects are placed in ways that look natural and make sense in a 3D scene. Most methods just make the objects look real, but don't pay enough attention to where and how they are positioned, which limits how well the models learn.

What's the solution?

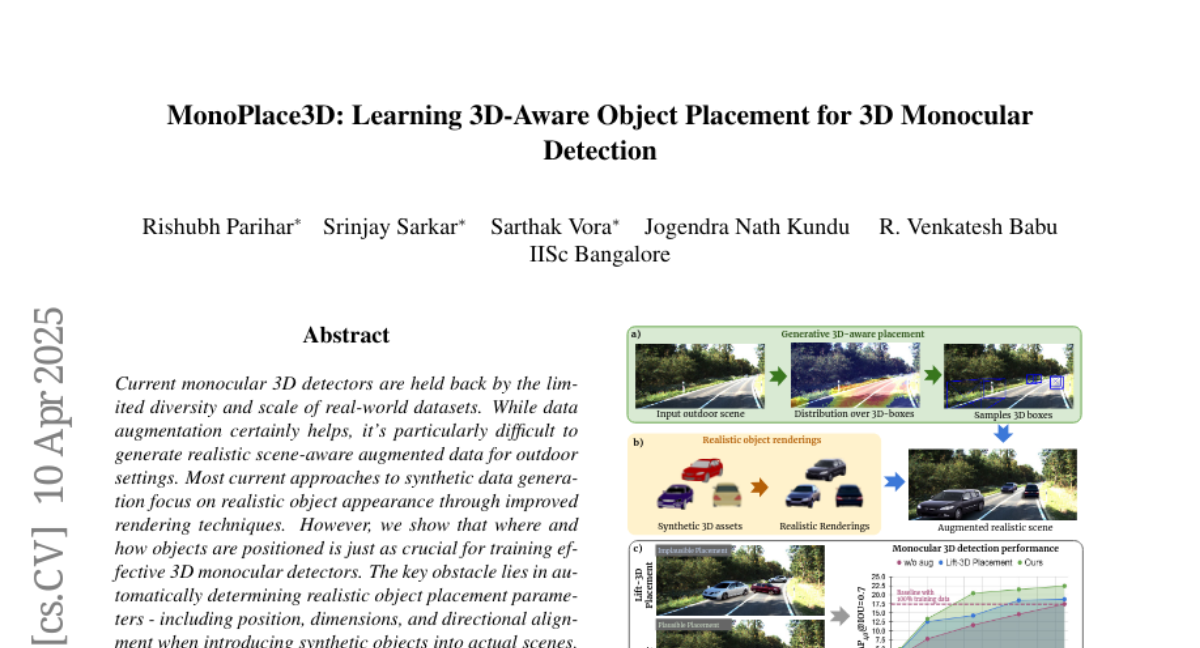

MonoPlace3D solves this by learning how to place objects in realistic positions, sizes, and directions based on the 3D layout of a scene. It uses a special network to figure out where objects should go in a given background, then renders synthetic objects and blends them into the scene so they look like they belong there. This approach creates much more believable training data, which helps the AI learn better, even with less real data.

Why it matters?

This work matters because it makes it possible to train better 3D object detection models without needing tons of real-world data. By improving how fake objects are placed in scenes, MonoPlace3D helps AI systems become more accurate and efficient, which is important for things like self-driving cars, robotics, and any technology that needs to understand the 3D world from cameras.

Abstract

Current monocular 3D detectors are held back by the limited diversity and scale of real-world datasets. While data augmentation certainly helps, it's particularly difficult to generate realistic scene-aware augmented data for outdoor settings. Most current approaches to synthetic data generation focus on realistic object appearance through improved rendering techniques. However, we show that where and how objects are positioned is just as crucial for training effective 3D monocular detectors. The key obstacle lies in automatically determining realistic object placement parameters - including position, dimensions, and directional alignment when introducing synthetic objects into actual scenes. To address this, we introduce MonoPlace3D, a novel system that considers the 3D scene content to create realistic augmentations. Specifically, given a background scene, MonoPlace3D learns a distribution over plausible 3D bounding boxes. Subsequently, we render realistic objects and place them according to the locations sampled from the learned distribution. Our comprehensive evaluation on two standard datasets KITTI and NuScenes, demonstrates that MonoPlace3D significantly improves the accuracy of multiple existing monocular 3D detectors while being highly data efficient.