Monte Carlo Diffusion for Generalizable Learning-Based RANSAC

Jiale Wang, Chen Zhao, Wei Ke, Tong Zhang

2025-03-13

Summary

This paper makes learning-based RANSAC, a family of AI models that fit geometric models to noisy, outlier-heavy data, generalize better to real-world inputs by training it on ground-truth data corrupted with diffusion-style noise and Monte Carlo sampling.

What's the problem?

Existing learning-based RANSAC models are trained and tested on data generated by the same algorithms, so they generalize poorly to out-of-distribution data at inference time.

What's the solution?

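The method progressively injects noise into ground-truth data, as in a diffusion process, to simulate noisy conditions during training. Monte Carlo sampling then introduces different types of randomness at multiple stages, so the training data approximates a diverse range of distributions.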

Why does it matter?

This improves AI tools used in applications like self-driving cars and 3D mapping, making them more reliable when dealing with unpredictable real-world noise.

Abstract

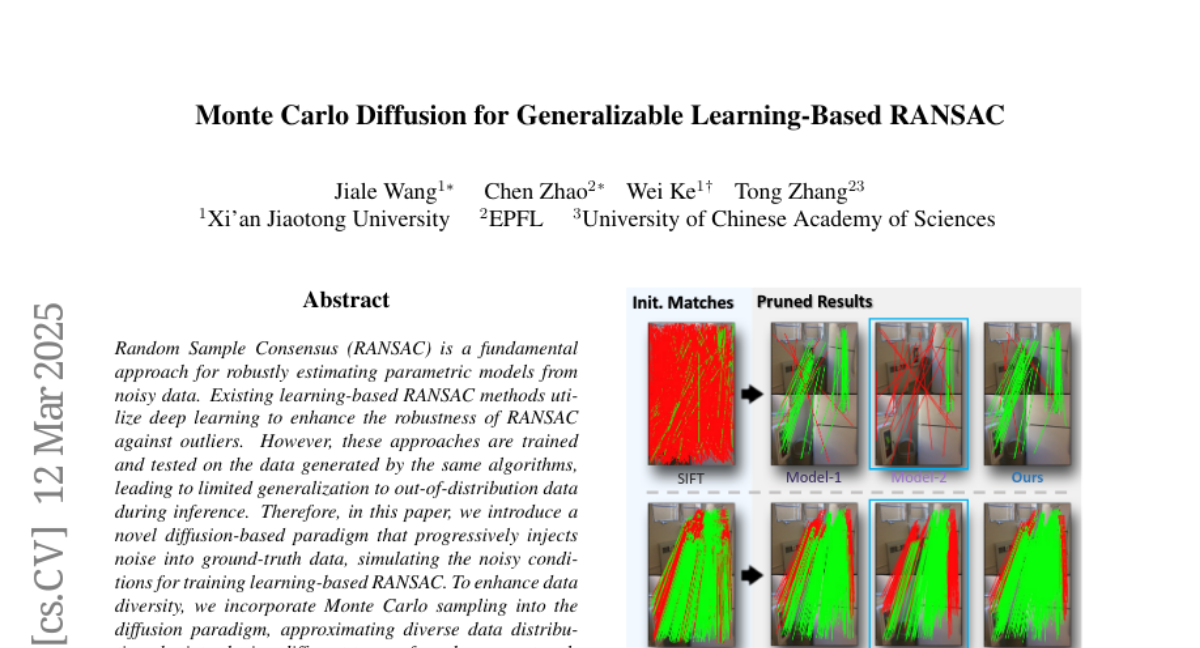

Random Sample Consensus (RANSAC) is a fundamental approach for robustly estimating parametric models from noisy data. Existing learning-based RANSAC methods utilize deep learning to enhance the robustness of RANSAC against outliers. However, these approaches are trained and tested on data generated by the same algorithms, leading to limited generalization to out-of-distribution data during inference. Therefore, in this paper, we introduce a novel diffusion-based paradigm that progressively injects noise into ground-truth data, simulating the noisy conditions for training learning-based RANSAC. To enhance data diversity, we incorporate Monte Carlo sampling into the diffusion paradigm, approximating diverse data distributions by introducing different types of randomness at multiple stages. We evaluate our approach in the context of feature matching through comprehensive experiments on the ScanNet and MegaDepth datasets. The experimental results demonstrate that our Monte Carlo diffusion mechanism significantly improves the generalization ability of learning-based RANSAC. We also conduct extensive ablation studies that highlight the effectiveness of key components in our framework.
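To make the idea of injecting noise into ground-truth data with Monte Carlo randomness more concrete, here is a minimal sketch. It is not the authors' implementation. It assumes a standard DDPM-style forward process, x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps, with a linear beta schedule, and it assumes the randomness comes from two places: a randomly drawn timestep and a randomly drawn fraction of matches to perturb. The function names `linear_alpha_bar` and `noisy_correspondences`, the `(N, 4)` match layout, and all parameter values are hypothetical choices for illustration.

```python
import numpy as np


def linear_alpha_bar(num_steps, beta_start=1e-4, beta_end=2e-2):
    """Cumulative product of (1 - beta_t) for a linear beta schedule.

    Assumption: a DDPM-style schedule; the paper may use a different one.
    """
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)


def noisy_correspondences(gt_matches, alpha_bar, rng):
    """Return one randomly noised copy of ground-truth matches.

    gt_matches: (N, 4) array of [x1, y1, x2, y2] in normalized coordinates
    (a hypothetical layout chosen for this sketch).
    """
    # Randomness source 1 (assumed): draw the diffusion timestep at random.
    t = int(rng.integers(0, len(alpha_bar)))

    # Randomness source 2 (assumed): draw the fraction of matches to
    # perturb, then pick which rows are affected.
    ratio = rng.uniform(0.0, 1.0)
    mask = rng.random(len(gt_matches)) < ratio

    # Forward diffusion step applied to every row.
    eps = rng.standard_normal(gt_matches.shape)
    diffused = (np.sqrt(alpha_bar[t]) * gt_matches
                + np.sqrt(1.0 - alpha_bar[t]) * eps)

    # Keep unselected rows at their ground-truth values.
    noisy = np.where(mask[:, None], diffused, gt_matches)
    return noisy, mask, t


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    gt = rng.uniform(-1.0, 1.0, size=(8, 4))  # synthetic stand-in data
    alpha_bar = linear_alpha_bar(num_steps=100)
    noisy, mask, t = noisy_correspondences(gt, alpha_bar, rng)
    print("timestep:", t, "perturbed rows:", int(mask.sum()))
```

Calling `noisy_correspondences` repeatedly on the same ground-truth matches yields training samples with different noise levels and different fractions of perturbed matches, which is one plausible way to approximate diverse data distributions from a single clean source.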