More Documents, Same Length: Isolating the Challenge of Multiple Documents in RAG

Shahar Levy, Nir Mazor, Lihi Shalmon, Michael Hassid, Gabriel Stanovsky

2025-03-13

Summary

This paper talks about testing AI models that use multiple documents to answer questions, showing that more documents make it harder for AI to find the right answers, even when the total text length stays the same.

What's the problem?

When AI systems pull info from many documents to answer questions, they get confused and perform worse, but it’s unclear if this is just because of longer text or something specific to handling multiple sources.

What's the solution?

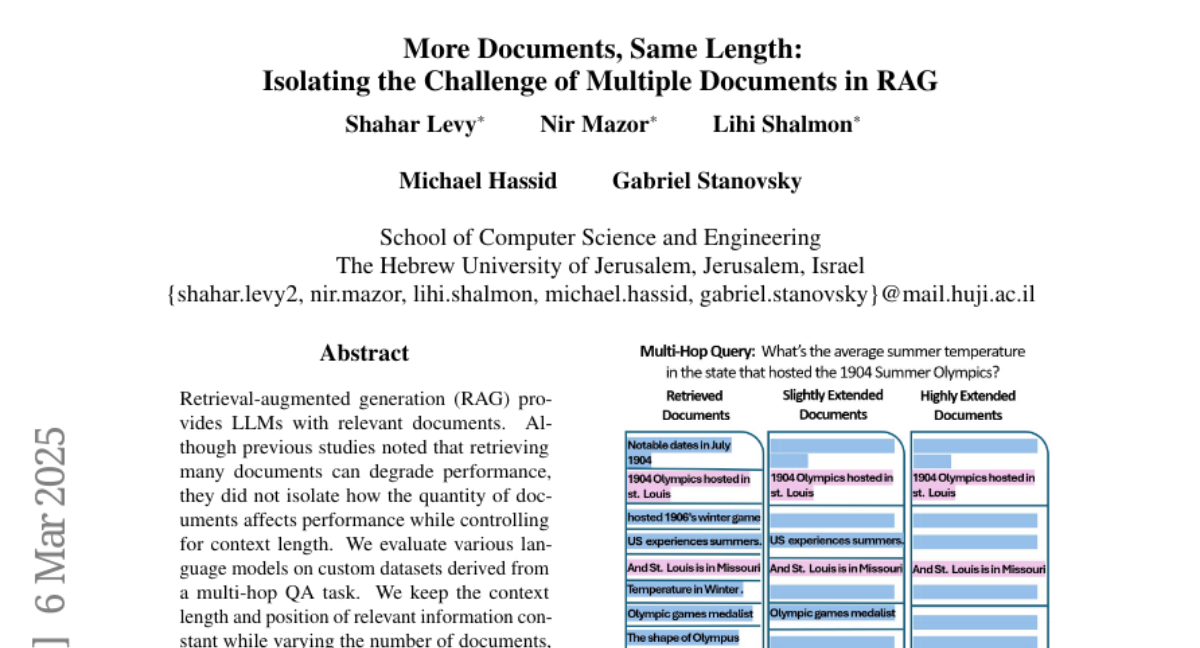

Researchers created tests where AI answers questions using the same amount of text but split into different numbers of documents, proving that more documents alone make the task harder.

Why it matters?

This helps improve AI tools for research or customer service by showing they need better ways to handle multiple sources, not just longer texts.

Abstract

Retrieval-augmented generation (RAG) provides LLMs with relevant documents. Although previous studies noted that retrieving many documents can degrade performance, they did not isolate how the quantity of documents affects performance while controlling for context length. We evaluate various language models on custom datasets derived from a multi-hop QA task. We keep the context length and position of relevant information constant while varying the number of documents, and find that increasing the document count in RAG settings poses significant challenges for LLMs. Additionally, our results indicate that processing multiple documents is a separate challenge from handling long contexts. We also make the datasets and code available: https://github.com/shaharl6000/MoreDocsSameLen .