MOSPA: Human Motion Generation Driven by Spatial Audio

Shuyang Xu, Zhiyang Dou, Mingyi Shi, Liang Pan, Leo Ho, Jingbo Wang, Yuan Liu, Cheng Lin, Yuexin Ma, Wenping Wang, Taku Komura

2025-07-17

Summary

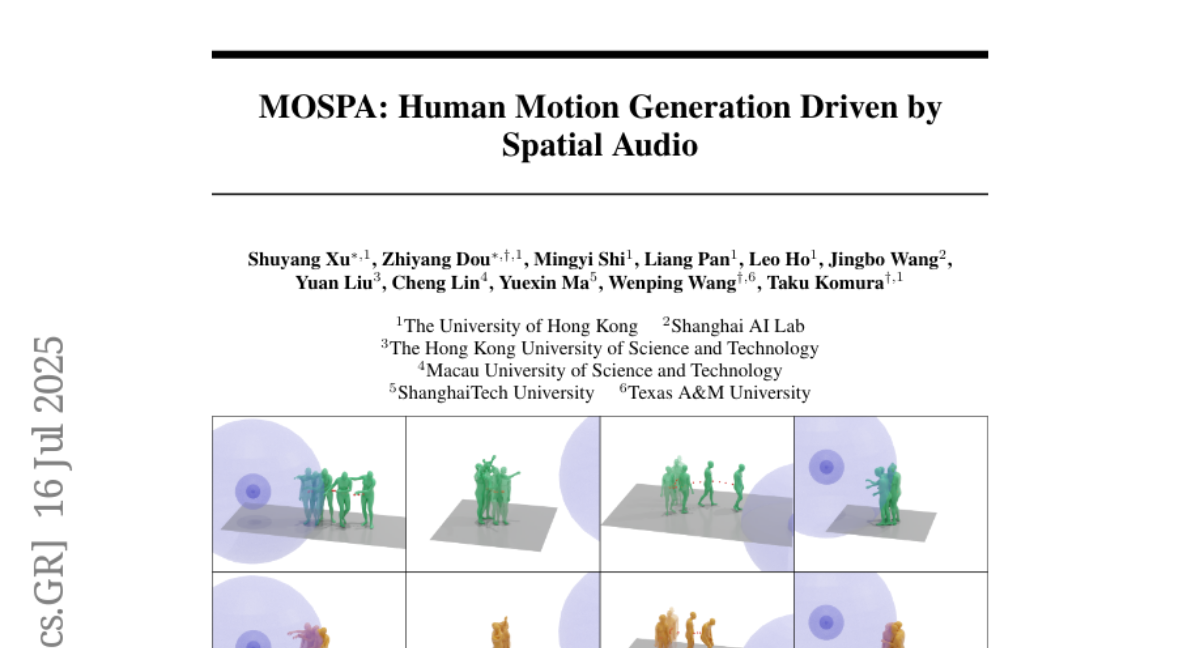

This paper talks about MOSPA, a new AI system that creates realistic human movements based on spatial audio, which is the way sounds come from different locations around a person.

What's the problem?

The problem is that it is hard for virtual characters or animations to move naturally in response to sounds that come from specific directions because previous models mostly ignored the spatial aspects of audio.

What's the solution?

The authors collected a new dataset called SAM with lots of examples of human motions paired with spatial audio, then developed MOSPA, a diffusion-based generative model that learns how to combine this spatial sound information with body movements, allowing it to produce realistic and diverse human motions based on where sounds come from.

Why it matters?

This matters because it helps make virtual humans and animations respond more naturally to their environment, improving realism in games, virtual reality, and other interactive media that use sound to guide movement.

Abstract

A diffusion-based generative framework, MOSPA, is introduced to model human motion in response to spatial audio, achieving state-of-the-art performance using the newly created SAM dataset.