MotionBooth: Motion-Aware Customized Text-to-Video Generation

Jianzong Wu, Xiangtai Li, Yanhong Zeng, Jiangning Zhang, Qianyu Zhou, Yining Li, Yunhai Tong, Kai Chen

2024-06-26

Summary

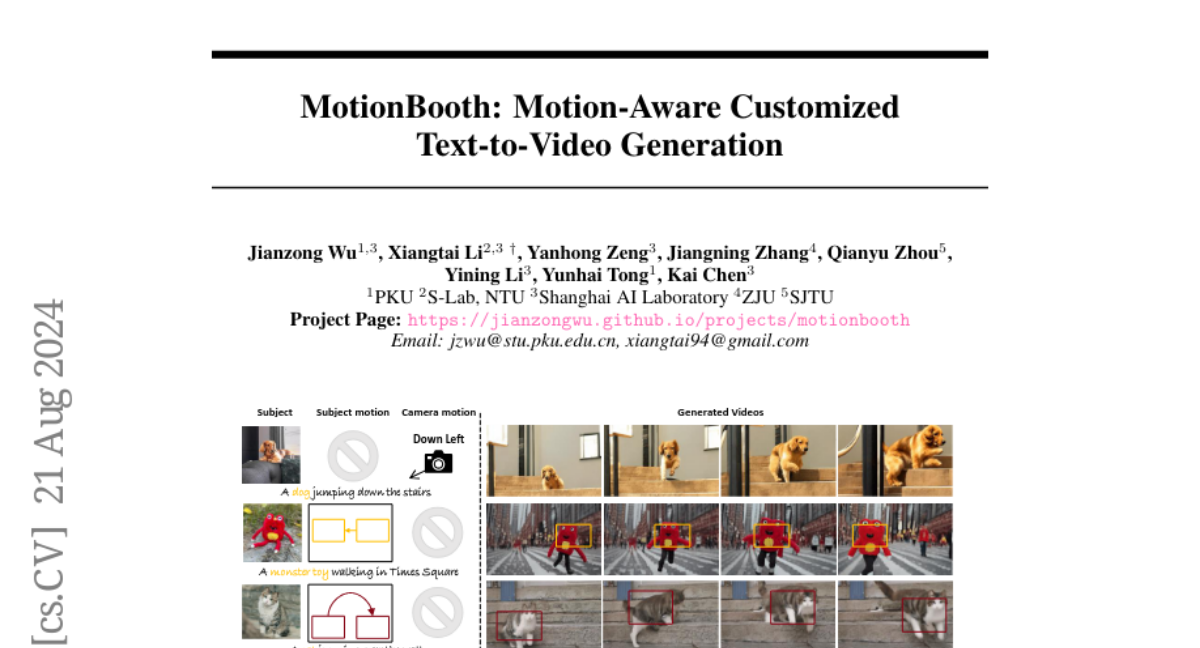

This paper introduces MotionBooth, a framework for generating videos of a customized subject with precise control over both the subject's motion and the camera's motion. It works by fine-tuning a text-to-video model on a few images of the subject.

What's the problem?

Creating animated videos that accurately represent specific objects can be challenging. Traditional methods may not effectively capture the details and movements of customized subjects, leading to less realistic animations. Additionally, managing how the camera moves in relation to the subject can complicate the video creation process.

What's the solution?

MotionBooth addresses these challenges by fine-tuning a text-to-video model on just a few images of the subject. During fine-tuning, it uses a subject region loss and a video preservation loss to improve how well the model learns the subject, plus a subject token cross-attention loss that ties the customized subject to motion control signals. At inference, it controls the subject's motion by manipulating cross-attention maps and controls camera movement with a novel latent shift module; both techniques are training-free. The result is videos that preserve the subject's appearance while following the specified motions.
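To make the idea of a subject region loss concrete, here is a minimal sketch of a masked reconstruction loss: the squared error between predicted and target noise is counted only where a binary mask marks the subject. This is an illustration under assumptions, not the paper's implementation. The function name `subject_region_loss`, the single-channel 2-D inputs, and the choice to normalize by the number of masked pixels are all hypothetical.

```python
def subject_region_loss(pred_noise, true_noise, mask):
    """Mean squared error restricted to pixels where mask is non-zero.

    All three arguments are 2-D lists of the same shape. This is a
    hypothetical single-channel toy; real latents also carry batch,
    channel, and (for video) frame axes.
    """
    total = 0.0
    count = 0
    for pred_row, true_row, mask_row in zip(pred_noise, true_noise, mask):
        for p, t, m in zip(pred_row, true_row, mask_row):
            if m:
                total += (p - t) ** 2
                count += 1
    # Guard against an empty mask so the loss stays defined.
    # Normalizing by the masked pixel count is an assumption of this sketch.
    return total / count if count else 0.0


pred = [[0.0, 1.0], [2.0, 3.0]]
true = [[0.0, 0.0], [0.0, 0.0]]
mask = [[0, 1], [0, 0]]  # subject occupies only the top-right pixel
loss = subject_region_loss(pred, true, mask)
```

With this mask, only the top-right pixel contributes, so background errors do not influence the update. That is the intuition behind restricting the loss to the subject region.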

Why does it matter?

This research is important because it provides a powerful tool for creating high-quality animated videos that maintain the appearance and movements of customized subjects. This technology can be useful in various fields, such as film production, video game development, and virtual reality, making it easier for creators to produce engaging content that looks realistic.

Abstract

In this work, we present MotionBooth, an innovative framework designed for animating customized subjects with precise control over both object and camera movements. By leveraging a few images of a specific object, we efficiently fine-tune a text-to-video model to capture the object's shape and attributes accurately. Our approach presents subject region loss and video preservation loss to enhance the subject's learning performance, along with a subject token cross-attention loss to integrate the customized subject with motion control signals. Additionally, we propose training-free techniques for managing subject and camera motions during inference. In particular, we utilize cross-attention map manipulation to govern subject motion and introduce a novel latent shift module for camera movement control as well. MotionBooth excels in preserving the appearance of subjects while simultaneously controlling the motions in generated videos. Extensive quantitative and qualitative evaluations demonstrate the superiority and effectiveness of our method. Our project page is at https://jianzongwu.github.io/projects/motionbooth
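The abstract does not spell out how the latent shift module works. As a rough intuition only, camera motion can be imitated by translating a latent grid by a fixed offset. The sketch below does this on a toy 2-D grid. The function `shift_latent`, its parameters, and the constant `fill` value for vacated cells are assumptions made for illustration; the paper's module may fill vacated regions differently.

```python
def shift_latent(latent, dx, dy, fill=0.0):
    """Translate a 2-D grid by (dx, dy) cells; vacated cells get `fill`.

    Positive dx moves content right, positive dy moves it down.
    Hypothetical helper: the constant fill is a placeholder strategy.
    """
    height = len(latent)
    width = len(latent[0])
    shifted = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            new_x, new_y = x + dx, y + dy
            # Content that would leave the grid is dropped.
            if 0 <= new_x < width and 0 <= new_y < height:
                shifted[new_y][new_x] = latent[y][x]
    return shifted


frame = [[1, 2, 3],
         [4, 5, 6]]
panned = shift_latent(frame, dx=1, dy=0)
```

Here the content moves one cell to the right, which corresponds to the view panning left. The operation is a pure index manipulation with no learned parameters, which is consistent with the abstract's description of the camera control as training-free.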