MotionCanvas: Cinematic Shot Design with Controllable Image-to-Video Generation

Jinbo Xing, Long Mai, Cusuh Ham, Jiahui Huang, Aniruddha Mahapatra, Chi-Wing Fu, Tien-Tsin Wong, Feng Liu

2025-02-07

Summary

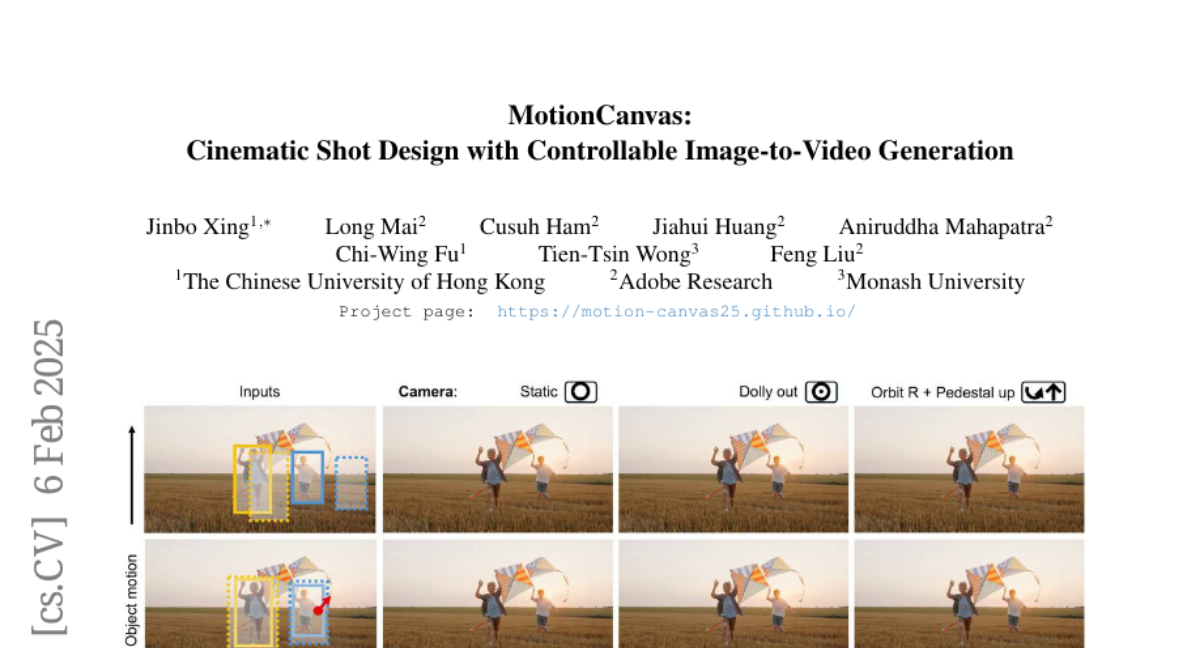

This paper talks about MotionCanvas, a new tool that helps turn still images into videos by letting users control how the camera moves and how objects in the scene move, just like in real filmmaking.

What's the problem?

Current systems for turning images into videos don't give users an easy way to control both camera movements and object motions at the same time. It's also hard for these systems to understand and use the motion information that users want to create realistic animations.

What's the solution?

The researchers created MotionCanvas, which lets users control both camera and object movements in a way that understands the 3D space of the scene. It takes the user's instructions for movement and turns them into signals that video creation models can understand and use. MotionCanvas does this without needing expensive 3D training data, making it more accessible.

Why it matters?

This matters because it could change how people create digital content, especially in film and video production. It makes it easier for anyone to create professional-looking video shots from still images, potentially saving time and money in creative industries. It could also lead to new ways of editing videos and creating special effects, opening up more possibilities for storytelling and visual art.

Abstract

This paper presents a method that allows users to design cinematic video shots in the context of image-to-video generation. Shot design, a critical aspect of filmmaking, involves meticulously planning both camera movements and object motions in a scene. However, enabling intuitive shot design in modern image-to-video generation systems presents two main challenges: first, effectively capturing user intentions on the motion design, where both camera movements and scene-space object motions must be specified jointly; and second, representing motion information that can be effectively utilized by a video diffusion model to synthesize the image animations. To address these challenges, we introduce MotionCanvas, a method that integrates user-driven controls into image-to-video (I2V) generation models, allowing users to control both object and camera motions in a scene-aware manner. By connecting insights from classical computer graphics and contemporary video generation techniques, we demonstrate the ability to achieve 3D-aware motion control in I2V synthesis without requiring costly 3D-related training data. MotionCanvas enables users to intuitively depict scene-space motion intentions, and translates them into spatiotemporal motion-conditioning signals for video diffusion models. We demonstrate the effectiveness of our method on a wide range of real-world image content and shot-design scenarios, highlighting its potential to enhance the creative workflows in digital content creation and adapt to various image and video editing applications.