MotionStreamer: Streaming Motion Generation via Diffusion-based Autoregressive Model in Causal Latent Space

Lixing Xiao, Shunlin Lu, Huaijin Pi, Ke Fan, Liang Pan, Yueer Zhou, Ziyong Feng, Xiaowei Zhou, Sida Peng, Jingbo Wang

2025-03-21

Summary

This paper introduces a new way to create realistic human motion in a continuous stream, like when a character is moving in a video game, based on text descriptions.

What's the problem?

Existing methods for generating motion from text have limitations. Diffusion models are tied to a pre-defined motion length, so they can't produce an open-ended stream of movement, while GPT-style models respond with a delay and accumulate errors over time.

What's the solution?

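The researchers developed a system called MotionStreamer, a diffusion-based autoregressive model that works in a continuous causal latent space. Because it does not break motion into discrete tokens and relies only on past motion, it can generate realistic movement smoothly and continuously as new text arrives.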

Why does it matter?

This work matters because it can lead to more realistic and interactive characters in video games, virtual reality, and other applications where continuous motion is important.

Abstract

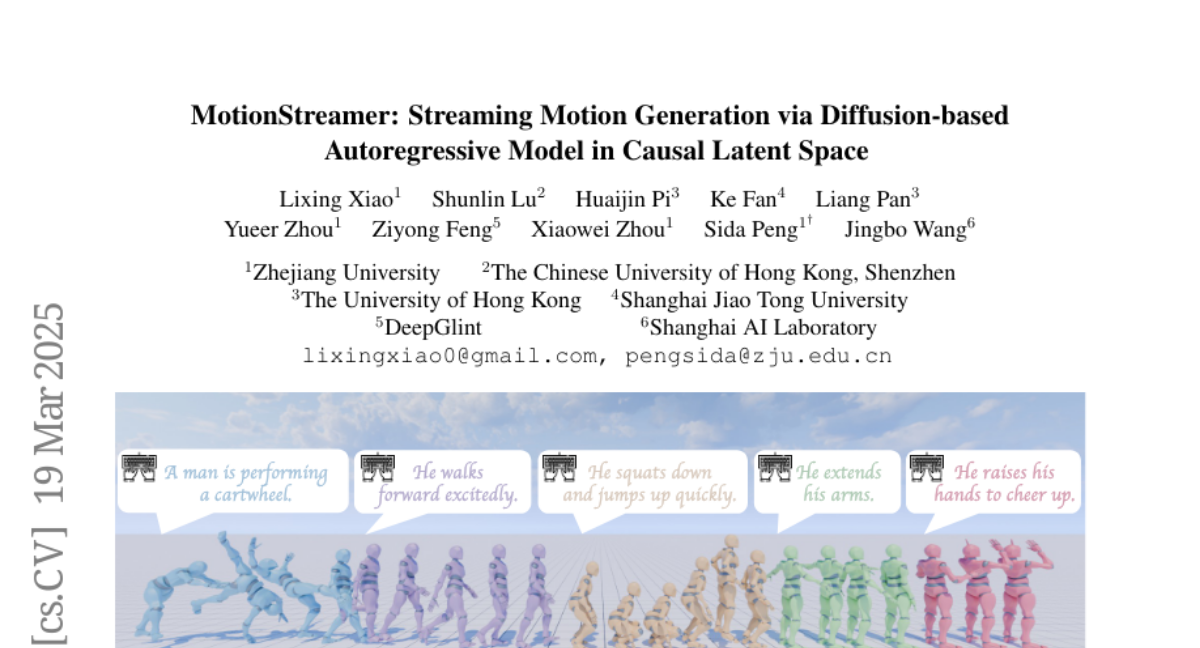

This paper addresses the challenge of text-conditioned streaming motion generation, which requires us to predict the next-step human pose based on variable-length historical motions and incoming texts. Existing methods struggle to achieve streaming motion generation, e.g., diffusion models are constrained by pre-defined motion lengths, while GPT-based methods suffer from delayed response and error accumulation due to discretized non-causal tokenization. To solve these problems, we propose MotionStreamer, a novel framework that incorporates a continuous causal latent space into a probabilistic autoregressive model. The continuous latents mitigate information loss caused by discretization and effectively reduce error accumulation during long-term autoregressive generation. In addition, by establishing temporal causal dependencies between current and historical motion latents, our model fully utilizes the available information to achieve accurate online motion decoding. Experiments show that our method outperforms existing approaches while offering more applications, including multi-round generation, long-term generation, and dynamic motion composition. Project Page: https://zju3dv.github.io/MotionStreamer/
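
The abstract's notion of "temporal causal dependencies" can be illustrated with a small sketch. The abstract does not say how causality is enforced, so this example assumes one common approach, a left-padded (causal) 1D convolution over time; the function name `causal_conv1d`, the kernel weights, and the toy signal are all hypothetical and are not taken from the paper. It shows the property that matters for streaming: the output for a frame depends only on that frame and earlier ones, so it never has to be revised when later frames arrive.

```python
from typing import List, Sequence


def causal_conv1d(x: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Left-padded 1D convolution: output[t] depends only on x[0..t].

    Hypothetical helper for illustration; not the paper's implementation.
    """
    k = len(kernel)
    padded = [0.0] * (k - 1) + list(x)  # pad the past side only
    return [
        sum(kernel[j] * padded[t + j] for j in range(k))
        for t in range(len(x))
    ]


# Toy 1D signal standing in for a single pose feature over time.
motion = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
kernel = [0.25, 0.25, 0.5]  # the last weight applies to the current frame

full = causal_conv1d(motion, kernel)

# Streaming check: encoding only the first 4 frames yields the same first
# 4 outputs as encoding the whole sequence.
prefix = causal_conv1d(motion[:4], kernel)
assert prefix == full[:4]
```

A non-causal (centered) convolution would fail this check, because each output would also depend on frames that have not arrived yet, which is one way a delayed response can arise.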