MS4UI: A Dataset for Multi-modal Summarization of User Interface Instructional Videos

Yuan Zang, Hao Tan, Seunghyun Yoon, Franck Dernoncourt, Jiuxiang Gu, Kushal Kafle, Chen Sun, Trung Bui

2025-06-17

Summary

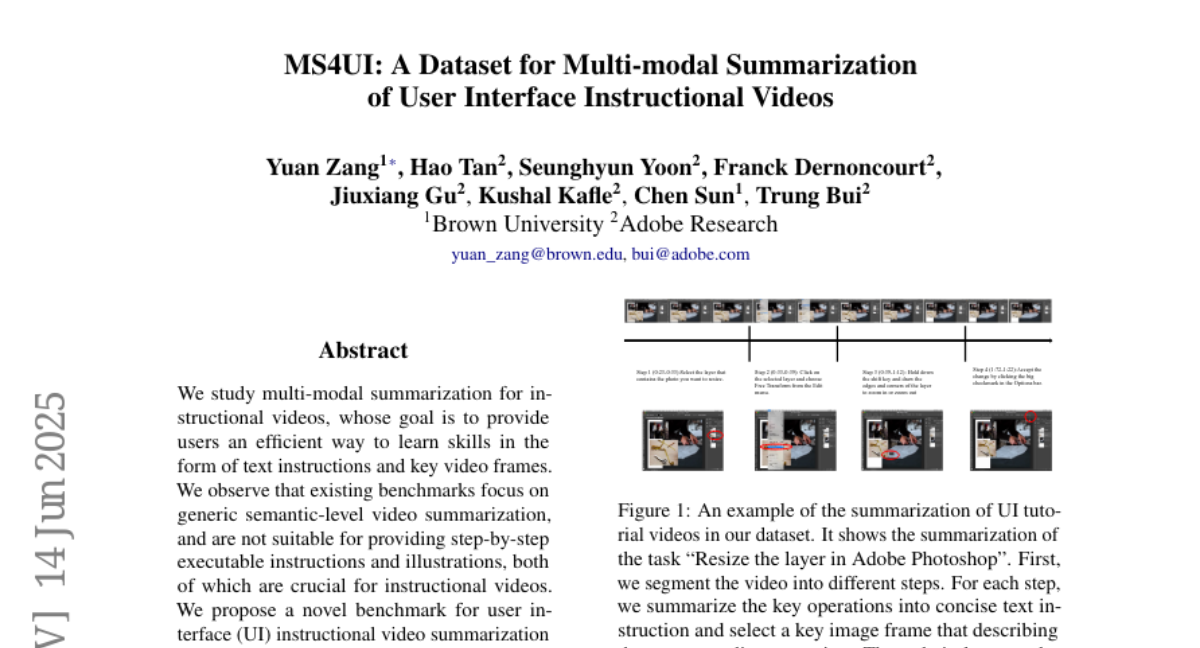

This paper talks about MS4UI, a new dataset and benchmark made for summarizing user interface (UI) instructional videos using multiple types of information like images and text. It focuses on creating step-by-step instructions from the videos and picking out the key video frames that best show each step clearly.

What's the problem?

The problem is that UI instructional videos are complex because they show actions on a screen with both visual and spoken details, but existing tools struggle to turn these videos into easy-to-follow summaries with clear instructions and the right visuals. Without good summaries, it can be hard for users to learn how to use software or devices efficiently from watching these videos.

What's the solution?

The solution was to create MS4UI, a large collection of UI instructional videos annotated to help AI models learn how to summarize these videos properly. It provides examples of how to break down the video into clear, executable steps with matching key frames, helping guide the AI to produce summaries that are both informative and easy to understand.

Why it matters?

This matters because having better summaries of UI instructional videos makes it easier for people to learn new software or technology quickly without watching long videos. It can improve how tutorials are made and help build smarter systems that assist users by providing clear, concise, and visual instructions.

Abstract

A novel benchmark and dataset are proposed for multi-modal summarization of UI instructional videos, addressing the need for step-by-step executable instructions and key video frames.