MuChoMusic: Evaluating Music Understanding in Multimodal Audio-Language Models

Benno Weck, Ilaria Manco, Emmanouil Benetos, Elio Quinton, George Fazekas, Dmitry Bogdanov

2024-08-05

Summary

This paper presents MuChoMusic, a new benchmark designed to evaluate how well multimodal audio-language models understand music. It includes a set of multiple-choice questions based on various music tracks to assess the models' knowledge and reasoning skills.

What's the problem?

Evaluating how well AI models understand music is challenging because there is no standardized way to test their abilities. It remains unclear whether current methods accurately measure how well these models interpret music-related inputs, especially when they must combine audio and language processing.

What's the solution?

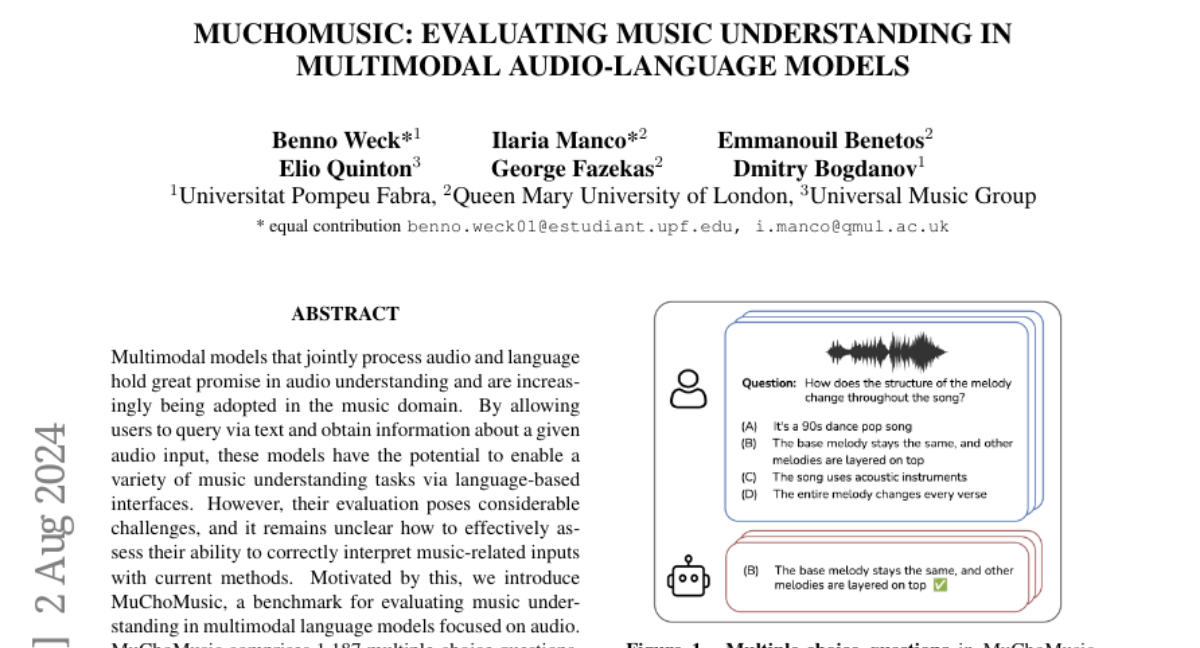

To address this issue, the authors created MuChoMusic, a benchmark of 1,187 multiple-choice questions, all validated by human annotators, on 644 music tracks drawn from two publicly available music datasets and spanning a wide variety of genres. The questions test both knowledge of musical concepts and reasoning about them, including how those concepts relate to cultural and functional contexts. Using this benchmark, the researchers evaluated five open-source audio-language models and found that many struggled to understand music, in particular because they over-rely on the language modality instead of integrating the audio input effectively.
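As a rough illustration of how a multiple-choice benchmark of this kind can be scored, the sketch below computes plain accuracy over a list of questions. It is not the authors' evaluation code. The `Item` class, its field names, and the `modality_gap` helper are hypothetical names chosen for this example, and the accuracy difference between runs with and without audio is only one assumed way to probe reliance on the language modality.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Item:
    """One multiple-choice question (hypothetical schema, for illustration only)."""
    question: str
    options: List[str]
    answer_index: int  # position of the correct option in `options`


def accuracy(items: Sequence[Item], predictions: Sequence[int]) -> float:
    """Fraction of questions where the predicted option index matches the answer."""
    if len(items) != len(predictions):
        raise ValueError("items and predictions must have the same length")
    if not items:
        return 0.0
    correct = sum(1 for item, pred in zip(items, predictions)
                  if pred == item.answer_index)
    return correct / len(items)


def modality_gap(items: Sequence[Item],
                 preds_with_audio: Sequence[int],
                 preds_text_only: Sequence[int]) -> float:
    """Accuracy with audio minus accuracy without it.

    A gap near zero would suggest the model answers mostly from the text
    of the question rather than from the audio (an assumed diagnostic).
    """
    return accuracy(items, preds_with_audio) - accuracy(items, preds_text_only)
```

Here each prediction is the index of the option the model selected. Mapping a model's free-text output to one of the options is a separate step that this sketch leaves out.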

Why it matters?

This research is important because it helps improve the way we assess AI models in the music domain. By providing a structured way to evaluate music understanding, MuChoMusic can lead to better AI systems that can engage with users about music more naturally, such as recommending songs or analyzing musical pieces. This can enhance applications in music education, recommendation systems, and more.

Abstract

Multimodal models that jointly process audio and language hold great promise in audio understanding and are increasingly being adopted in the music domain. By allowing users to query via text and obtain information about a given audio input, these models have the potential to enable a variety of music understanding tasks via language-based interfaces. However, their evaluation poses considerable challenges, and it remains unclear how to effectively assess their ability to correctly interpret music-related inputs with current methods. Motivated by this, we introduce MuChoMusic, a benchmark for evaluating music understanding in multimodal language models focused on audio. MuChoMusic comprises 1,187 multiple-choice questions, all validated by human annotators, on 644 music tracks sourced from two publicly available music datasets, and covering a wide variety of genres. Questions in the benchmark are crafted to assess knowledge and reasoning abilities across several dimensions that cover fundamental musical concepts and their relation to cultural and functional contexts. Through the holistic analysis afforded by the benchmark, we evaluate five open-source models and identify several pitfalls, including an over-reliance on the language modality, pointing to a need for better multimodal integration. Data and code are open-sourced.