MUG-Eval: A Proxy Evaluation Framework for Multilingual Generation Capabilities in Any Language

Seyoung Song, Seogyeong Jeong, Eunsu Kim, Jiho Jin, Dongkwan Kim, Jay Shin, Alice Oh

2025-05-23

Summary

This paper talks about a new way to test how well large language models can generate text in different languages, using a system called MUG-Eval.

What's the problem?

The problem is that it's hard to fairly and easily measure how good language models are at creating text in many languages, especially without relying on special tools or being limited to just a few languages.

What's the solution?

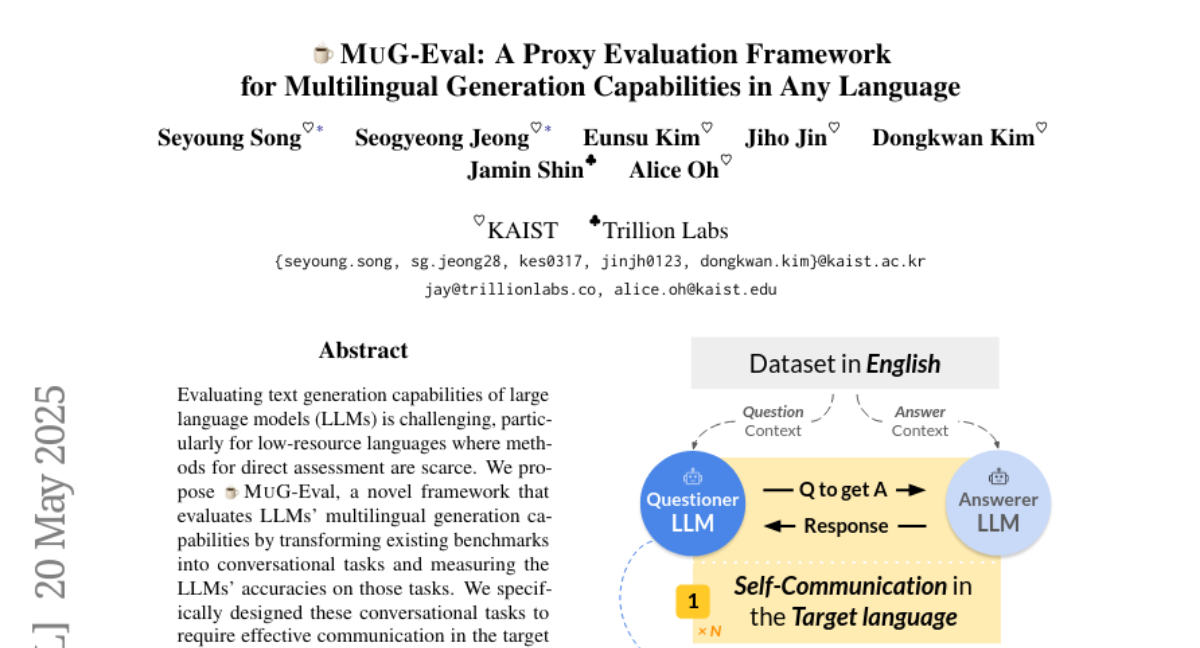

The researchers created MUG-Eval, which turns standard language tests into conversations. This approach doesn't depend on any specific language or extra natural language processing tools, and it still matches up well with the results from more traditional benchmarks.

Why it matters?

This is important because it makes it much easier to check if language models are truly good at working with lots of different languages, helping to make AI more accessible and useful for people all over the world.

Abstract

MUG-Eval assesses LLMs' multilingual generation by transforming benchmarks into conversational tasks, offering a language-independent and NLP tool-free method that correlates well with established benchmarks.