Multimodal Language Modeling for High-Accuracy Single Cell Transcriptomics Analysis and Generation

Yaorui Shi, Jiaqi Yang, Sihang Li, Junfeng Fang, Xiang Wang, Zhiyuan Liu, Yang Zhang

2025-03-13

Summary

This paper introduces scMMGPT, an AI model that combines a cell language model with a text language model so it can analyze single-cell data, describe cells in words, and generate cells from text. This makes single-cell data easier for scientists to interpret and work with.

What's the problem?

Existing AI models cannot handle text and single-cell data together, which makes it hard to accurately describe cells in words or to generate cells from text descriptions.

What's the solution?

The researchers built scMMGPT by connecting a cell-focused language model to a text-focused one through dedicated cross-modal projectors that let the two models share information. They then pre-trained it on 27 million cells, the largest dataset used for a multimodal cell-text model so far, to improve its understanding of both data types.
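The summary does not say how the projectors are built. As a rough illustration only, here is a minimal sketch assuming the simplest common design: a learned linear map that turns one embedding from the source model into a short sequence of "soft tokens" in the target model's embedding space. The class name `CrossModalProjector`, the dimensions, and the random initialization are hypothetical and not taken from scMMGPT.

```python
import random
from typing import List

Vector = List[float]


class CrossModalProjector:
    """Hypothetical linear projector (an assumption, not scMMGPT's published design).

    Maps one embedding of size `in_dim` from a source model (e.g. a cell PLM)
    to `num_tokens` vectors of size `out_dim` in a target model's embedding
    space (e.g. a text PLM), which could be fed to the target as soft tokens.
    """

    def __init__(self, in_dim: int, out_dim: int, num_tokens: int, seed: int = 0):
        rng = random.Random(seed)
        self.in_dim = in_dim
        self.out_dim = out_dim
        self.num_tokens = num_tokens
        flat_dim = num_tokens * out_dim
        # Small random weights; in a real system these would be learned in training.
        self.weight = [[rng.gauss(0.0, 0.02) for _ in range(in_dim)] for _ in range(flat_dim)]
        self.bias = [0.0] * flat_dim

    def __call__(self, embedding: Vector) -> List[Vector]:
        if len(embedding) != self.in_dim:
            raise ValueError(f"expected {self.in_dim} features, got {len(embedding)}")
        # Affine map: flat = W @ embedding + b
        flat = [
            sum(w * x for w, x in zip(row, embedding)) + b
            for row, b in zip(self.weight, self.bias)
        ]
        # Reshape the flat output into num_tokens vectors of size out_dim.
        return [flat[i * self.out_dim:(i + 1) * self.out_dim] for i in range(self.num_tokens)]


if __name__ == "__main__":
    cell_to_text = CrossModalProjector(in_dim=8, out_dim=16, num_tokens=4)
    soft_tokens = cell_to_text([0.1] * 8)
    print(len(soft_tokens), len(soft_tokens[0]))  # 4 16
```

A second instance with the dimensions swapped could serve the text-to-cell direction. A production model might instead use a deeper network, such as a small MLP, trained jointly with both language models.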

Why does it matter?

This helps biologists annotate and describe cells more accurately, which could speed up medical research and make it easier to understand diseases or discover new treatments.

Abstract

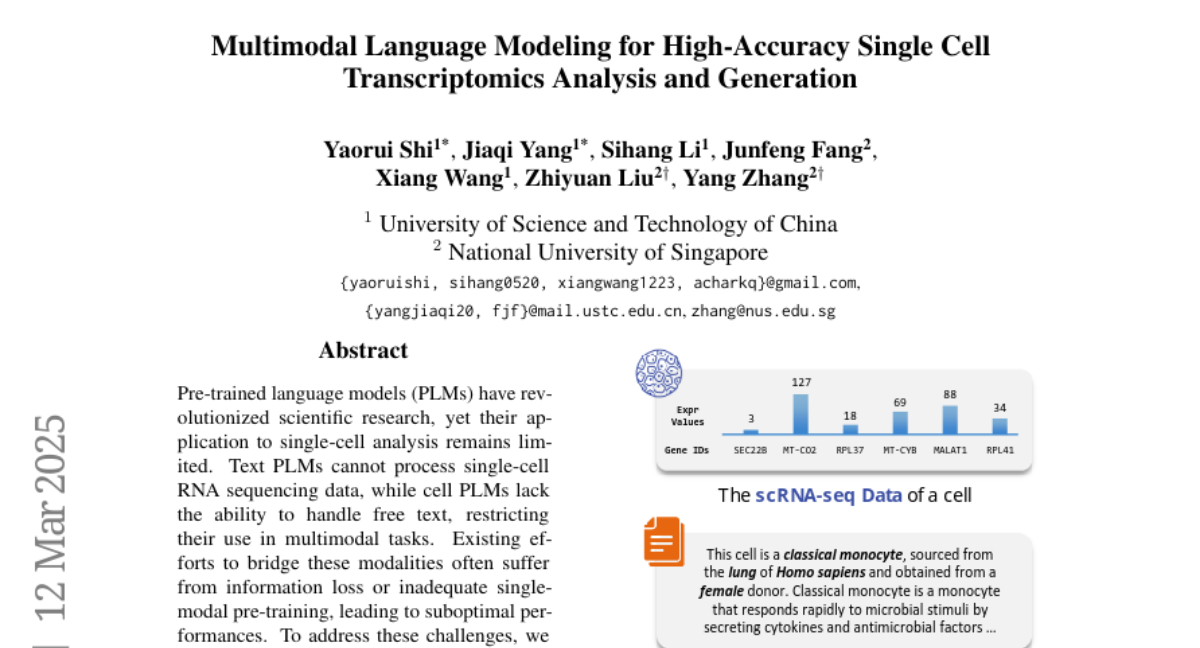

Pre-trained language models (PLMs) have revolutionized scientific research, yet their application to single-cell analysis remains limited. Text PLMs cannot process single-cell RNA sequencing data, while cell PLMs cannot handle free text, which restricts their use in multimodal tasks. Existing efforts to bridge these modalities often suffer from information loss or inadequate single-modal pre-training, leading to suboptimal performance. To address these challenges, we propose the Single-Cell MultiModal Generative Pre-trained Transformer (scMMGPT), a unified PLM for joint cell and text modeling. scMMGPT integrates state-of-the-art cell and text PLMs, enabling cross-modal knowledge sharing for improved performance. To bridge the text-cell modality gap, scMMGPT uses dedicated cross-modal projectors and undergoes extensive pre-training on 27 million cells, the largest dataset for multimodal cell-text PLMs to date. This large-scale pre-training enables scMMGPT to excel in joint cell-text tasks, outperforming baselines with an 84% relative improvement in textual discrepancy for cell description generation, 20.5% higher accuracy for cell type annotation, and a 4% improvement in k-NN accuracy for text-conditioned pseudo-cell generation.
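The abstract reports k-NN accuracy for text-conditioned pseudo-cell generation without spelling out the protocol. One common reading, assumed here and not confirmed by the source, is: for each generated pseudo-cell, find its k nearest real cells, take a majority vote over their cell-type labels, and count a hit when the vote matches the cell type named in the conditioning text. The function names, the Euclidean distance, and the toy data are illustrative assumptions.

```python
import math
from collections import Counter
from typing import List, Sequence

Vector = Sequence[float]


def euclidean(a: Vector, b: Vector) -> float:
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_predict(query: Vector, ref_cells: List[Vector], ref_labels: List[str], k: int) -> str:
    """Majority vote over the labels of the k reference cells nearest to `query`."""
    if k <= 0:
        raise ValueError("k must be positive")
    nearest = sorted(range(len(ref_cells)), key=lambda i: euclidean(query, ref_cells[i]))[:k]
    # Counter keeps first-seen order for equal counts, so a tie goes to the
    # label that appears first among the nearest neighbours.
    votes = Counter(ref_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]


def knn_accuracy(generated: List[Vector], target_labels: List[str],
                 ref_cells: List[Vector], ref_labels: List[str], k: int = 5) -> float:
    """Fraction of generated cells whose k-NN vote matches the requested cell type
    (assumed protocol, see lead-in)."""
    if not generated or len(generated) != len(target_labels):
        raise ValueError("need one target label per generated cell")
    hits = sum(
        knn_predict(g, ref_cells, ref_labels, k) == t
        for g, t in zip(generated, target_labels)
    )
    return hits / len(generated)


if __name__ == "__main__":
    # Toy 2-D "embeddings": two well-separated clusters of real cells.
    ref_cells = [[0, 0], [0, 1], [1, 0], [10, 10], [10, 11], [11, 10]]
    ref_labels = ["T cell"] * 3 + ["B cell"] * 3
    # Pseudo-cells generated from text prompts; the third lands in the wrong cluster.
    generated = [[0.5, 0.5], [10.5, 10.5], [9, 9]]
    targets = ["T cell", "B cell", "T cell"]
    acc = knn_accuracy(generated, targets, ref_cells, ref_labels, k=3)
    print(round(acc, 3))  # 0.667
```

The reference embedding space matters: the same generated cells can score differently in raw expression space than in a learned embedding space, so comparisons across methods should fix that choice.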