Multimodal Long Video Modeling Based on Temporal Dynamic Context

Haoran Hao, Jiaming Han, Yiyuan Zhang, Xiangyu Yue

2025-04-16

Summary

This paper talks about a new way to help AI understand really long videos by keeping important details from both the pictures and sounds, using a method called Temporal Dynamic Context.

What's the problem?

The problem is that when AI tries to process long videos, it often loses important information because it has to compress so much data, especially when dealing with both video and audio. Existing methods usually just group similar-looking frames together, which can miss out on the actual meaning and changes happening in the video.

What's the solution?

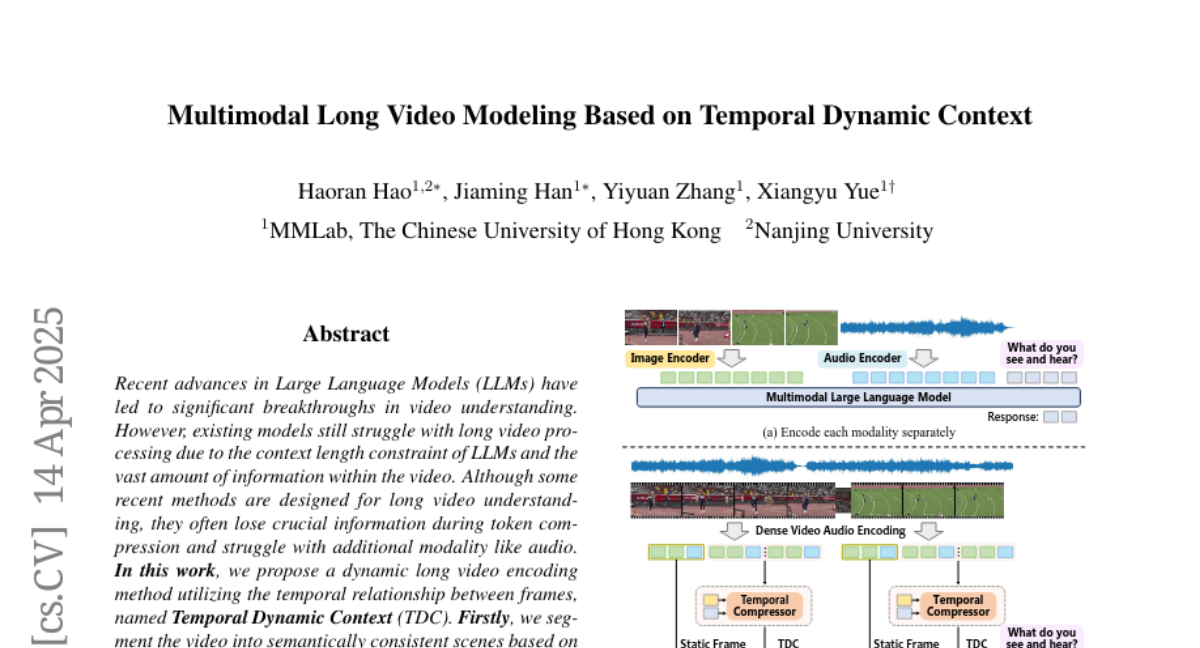

The researchers created a system that first breaks the video into meaningful scenes based on how the frames change over time. For each scene, they keep the first frame as a detailed reference and then use a special transformer to compress the rest of the frames, focusing on the differences and important changes. They also use a chain-of-thought approach for super long videos, letting the AI reason step by step through different parts of the video before coming up with a final answer.

Why it matters?

This matters because it lets AI models handle much longer and more complicated videos without losing the important stuff, making them better at understanding movies, lectures, or any real-world video that goes on for a long time.

Abstract

A method for dynamic long video encoding uses Temporal Dynamic Context to compress tokens while retaining information, and uses a chain-of-thought strategy for extremely long videos.