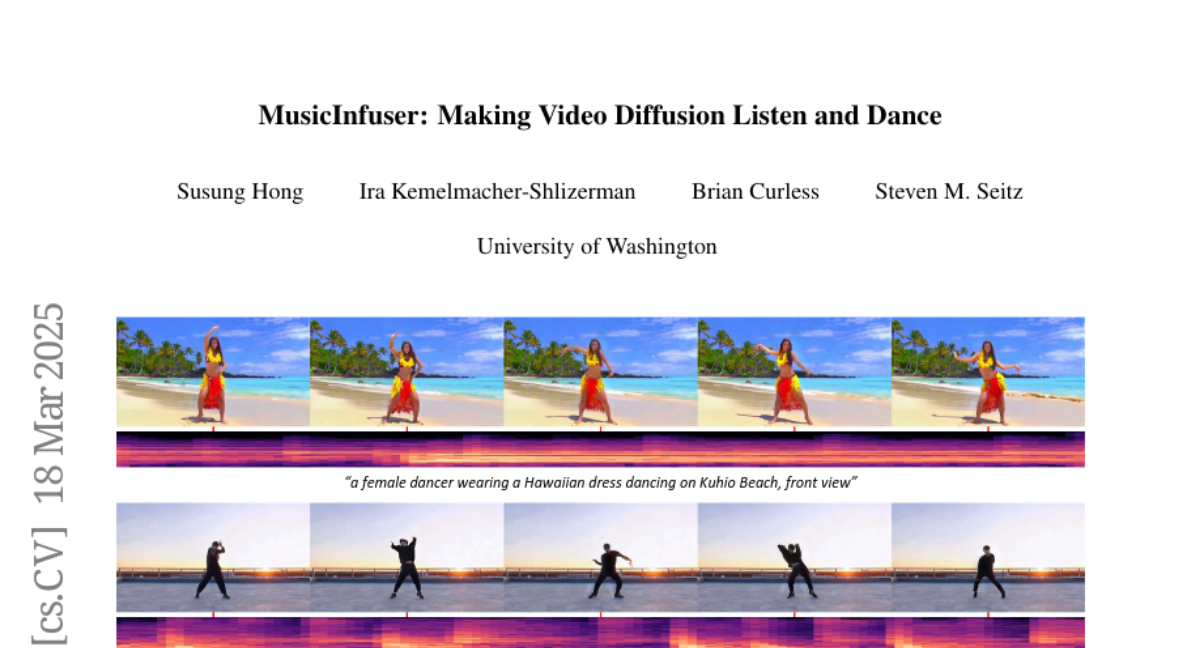

MusicInfuser: Making Video Diffusion Listen and Dance

Susung Hong, Ira Kemelmacher-Shlizerman, Brian Curless, Steven M. Seitz

2025-03-20

Summary

This paper is about creating AI that can generate videos of people dancing in sync with music.

What's the problem?

Making AI that can create realistic and good-looking dance videos that match the music is a difficult task.

What's the solution?

The researchers developed a system called MusicInfuser that adapts existing video AI models to listen to music and generate dance videos that fit the beat.

Why it matters?

This work matters because it shows a new way to create AI that can generate creative content like dance videos, opening up possibilities for new forms of entertainment and expression.

Abstract

We introduce MusicInfuser, an approach for generating high-quality dance videos that are synchronized to a specified music track. Rather than attempting to design and train a new multimodal audio-video model, we show how existing video diffusion models can be adapted to align with musical inputs by introducing lightweight music-video cross-attention and a low-rank adapter. Unlike prior work requiring motion capture data, our approach fine-tunes only on dance videos. MusicInfuser achieves high-quality music-driven video generation while preserving the flexibility and generative capabilities of the underlying models. We introduce an evaluation framework using Video-LLMs to assess multiple dimensions of dance generation quality. The project page and code are available at https://susunghong.github.io/MusicInfuser.