MVLLaVA: An Intelligent Agent for Unified and Flexible Novel View Synthesis

Hanyu Jiang, Jian Xue, Xing Lan, Guohong Hu, Ke Lu

2024-09-12

Summary

This paper talks about MVLLaVA, an intelligent agent designed to create new views of images based on user instructions, using advanced models that combine multiple techniques.

What's the problem?

Generating new views of an object or scene from a single image can be difficult. Traditional methods often struggle to adapt to different types of input, such as text descriptions or changes in viewpoint, making it hard to create flexible and accurate visual representations.

What's the solution?

To solve this problem, the authors developed MVLLaVA, which integrates several multi-view diffusion models with a large multimodal model called LLaVA. This setup allows the agent to process different types of inputs—like a single image or a description—and generate new views accordingly. They created specific instruction templates to help guide the model during training, which improved its ability to follow user requests and generate high-quality images.

Why it matters?

This research is important because it enhances the ability of AI to create realistic and varied images from simple instructions. By providing a flexible platform for novel view synthesis, MVLLaVA can be used in various applications, including virtual reality, gaming, and design, making it easier for users to visualize concepts and ideas.

Abstract

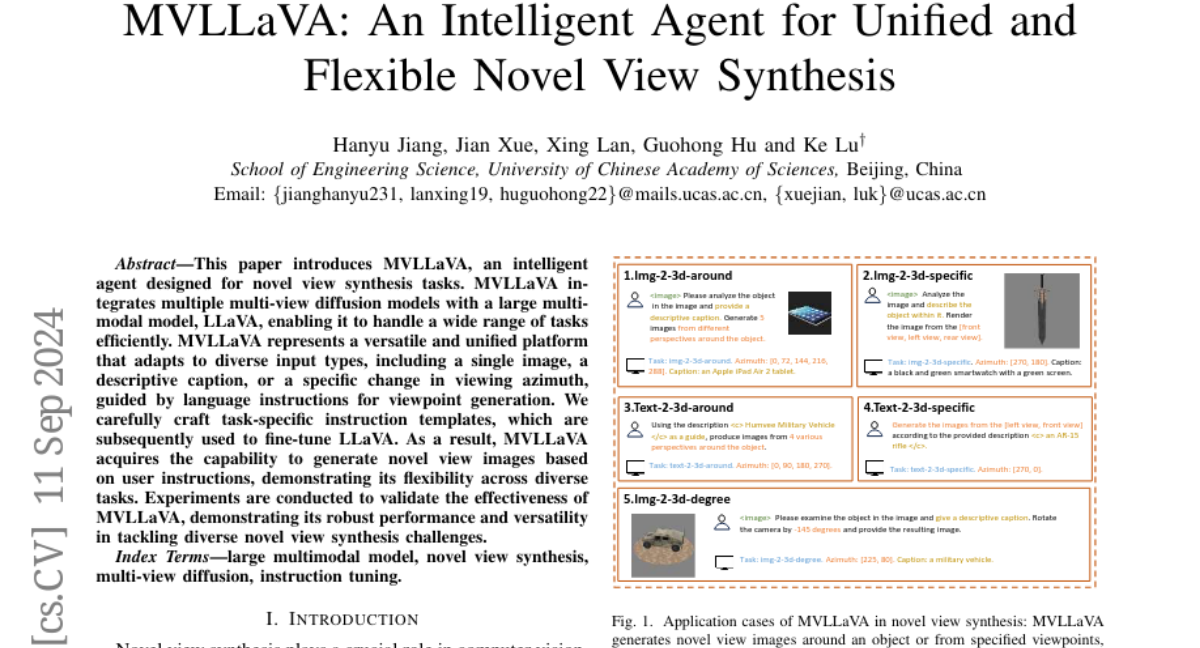

This paper introduces MVLLaVA, an intelligent agent designed for novel view synthesis tasks. MVLLaVA integrates multiple multi-view diffusion models with a large multimodal model, LLaVA, enabling it to handle a wide range of tasks efficiently. MVLLaVA represents a versatile and unified platform that adapts to diverse input types, including a single image, a descriptive caption, or a specific change in viewing azimuth, guided by language instructions for viewpoint generation. We carefully craft task-specific instruction templates, which are subsequently used to fine-tune LLaVA. As a result, MVLLaVA acquires the capability to generate novel view images based on user instructions, demonstrating its flexibility across diverse tasks. Experiments are conducted to validate the effectiveness of MVLLaVA, demonstrating its robust performance and versatility in tackling diverse novel view synthesis challenges.