Neural LightRig: Unlocking Accurate Object Normal and Material Estimation with Multi-Light Diffusion

Zexin He, Tengfei Wang, Xin Huang, Xingang Pan, Ziwei Liu

2024-12-13

Summary

This paper introduces Neural LightRig, a method for estimating an object's surface normals and materials from a single image. It does so by first generating additional images of the same object under multiple lighting conditions.

What's the problem?

Estimating the geometry and materials of an object from just one image is difficult because the problem is under-constrained: a single image does not contain enough information to separate the object's shape, its material, and the lighting that produced it. Traditional methods therefore often miss important details and give inaccurate results.

What's the solution?

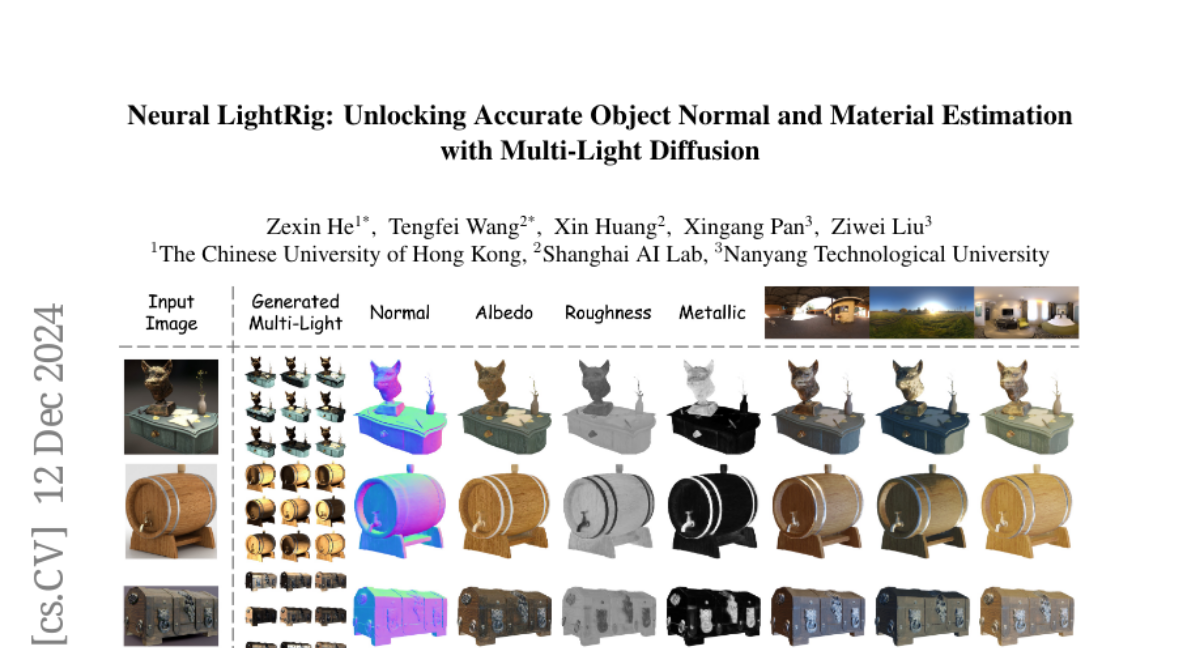

Neural LightRig addresses this in two stages. First, it builds a multi-light diffusion model, trained on a synthetic relighting dataset, that generates several consistent images of the same object, each lit by a point light source from a different direction. Second, it uses these images to train a large G-buffer model with a U-Net backbone that predicts surface normals (the direction each point on the surface faces) and PBR (physically based rendering) material properties. Because the generated images show how the object responds to different lighting, they reduce the uncertainty of the estimate.
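To see why images under several lights constrain the surface normal, the sketch below uses classical Lambertian photometric stereo for a single pixel. This is a much simpler stand-in technique, not the paper's learned G-buffer model, and it assumes known light directions, a purely diffuse surface, and no shadows. The function name `estimate_normal_and_albedo` and all numeric values are hypothetical.

```python
import numpy as np

def estimate_normal_and_albedo(intensities, light_dirs):
    """Classical Lambertian photometric stereo for one pixel (illustrative only).

    intensities: shape (k,), observed brightness under k lights.
    light_dirs:  shape (k, 3), unit vectors pointing toward each light.
    Returns (unit normal, albedo).
    """
    # Lambertian model: I_j = albedo * dot(n, l_j).
    # Solve light_dirs @ g = intensities in the least-squares sense, with g = albedo * n.
    g, *_ = np.linalg.lstsq(light_dirs, intensities, rcond=None)
    albedo = np.linalg.norm(g)
    normal = g / albedo
    return normal, albedo

# Hypothetical ground truth for one surface point.
true_normal = np.array([0.0, 0.6, 0.8])
true_albedo = 0.5

# Four hypothetical unit light directions, all on the camera side of the surface.
light_dirs = np.array([
    [0.0, 0.0, 1.0],
    [0.6, 0.0, 0.8],
    [0.0, 0.6, 0.8],
    [-0.6, 0.0, 0.8],
])

# Simulate the observations (every dot product is positive here, so no shadows).
intensities = true_albedo * light_dirs @ true_normal

normal, albedo = estimate_normal_and_albedo(intensities, light_dirs)
print(normal, albedo)
```

With a single light there is one equation for three unknowns, so many combinations of normal and albedo explain the same brightness. Three or more non-coplanar light directions make the system solvable, which is the intuition behind supplying multi-light images to the estimator.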

Why does it matter?

This research is significant because it enables more precise modeling of objects in various applications, such as computer graphics, virtual reality, and robotics. By improving how we can estimate object properties from limited data, Neural LightRig can enhance the realism and accuracy of digital representations in many fields.

Abstract

Recovering the geometry and materials of objects from a single image is challenging due to its under-constrained nature. In this paper, we present Neural LightRig, a novel framework that boosts intrinsic estimation by leveraging auxiliary multi-lighting conditions from 2D diffusion priors. Specifically, 1) we first leverage illumination priors from large-scale diffusion models to build our multi-light diffusion model on a synthetic relighting dataset with dedicated designs. This diffusion model generates multiple consistent images, each illuminated by point light sources in different directions. 2) By using these varied lighting images to reduce estimation uncertainty, we train a large G-buffer model with a U-Net backbone to accurately predict surface normals and materials. Extensive experiments validate that our approach significantly outperforms state-of-the-art methods, enabling accurate surface normal and PBR material estimation with vivid relighting effects. Code and dataset are available on our project page at https://projects.zxhezexin.com/neural-lightrig.
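As a rough illustration of how predicted normals and materials enable relighting, the sketch below shades a tiny image from a normal map and an albedo map under a new directional light. It assumes a purely diffuse (Lambertian) surface, so it ignores the specular behavior that full PBR materials describe, and it is not the paper's renderer. The function `relight_lambertian` and the 2x2 G-buffer values are hypothetical.

```python
import numpy as np

def relight_lambertian(normals, albedo, light_dir, light_intensity=1.0):
    """Shade an image from per-pixel normals and albedo under one directional light.

    normals:   (H, W, 3) unit normals.
    albedo:    (H, W, 3) RGB values in [0, 1].
    light_dir: (3,) vector pointing toward the light (normalized internally).
    """
    light_dir = np.asarray(light_dir, dtype=float)
    light_dir = light_dir / np.linalg.norm(light_dir)
    # Cosine term, clamped so surfaces facing away from the light stay black.
    n_dot_l = np.clip(normals @ light_dir, 0.0, None)
    return light_intensity * albedo * n_dot_l[..., None]

# Hypothetical 2x2 G-buffer: every normal faces the camera (+z), albedo is mid-grey.
normals = np.zeros((2, 2, 3))
normals[..., 2] = 1.0
albedo = np.full((2, 2, 3), 0.5)

frontal = relight_lambertian(normals, albedo, [0.0, 0.0, 1.0])  # light along the normal
grazing = relight_lambertian(normals, albedo, [1.0, 0.0, 0.0])  # light perpendicular to it
print(frontal[0, 0], grazing[0, 0])
```

The frontal light reproduces the albedo at full strength, while the grazing light leaves the surface dark. Errors in the predicted normals would show up directly as wrong shading, which is why accurate normals matter for convincing relighting.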