NORA: A Small Open-Sourced Generalist Vision Language Action Model for Embodied Tasks

Chia-Yu Hung, Qi Sun, Pengfei Hong, Amir Zadeh, Chuan Li, U-Xuan Tan, Navonil Majumder, Soujanya Poria

2025-04-29

Summary

This paper talks about NORA, a small but powerful AI model that can understand both images and language and use that understanding to perform real-world tasks, even though it uses less computer power than bigger models.

What's the problem?

The problem is that most AI models that are good at combining vision and language for things like robots or smart assistants are usually very large and require a lot of computer resources, making them hard to use on smaller devices or in situations where speed and efficiency matter.

What's the solution?

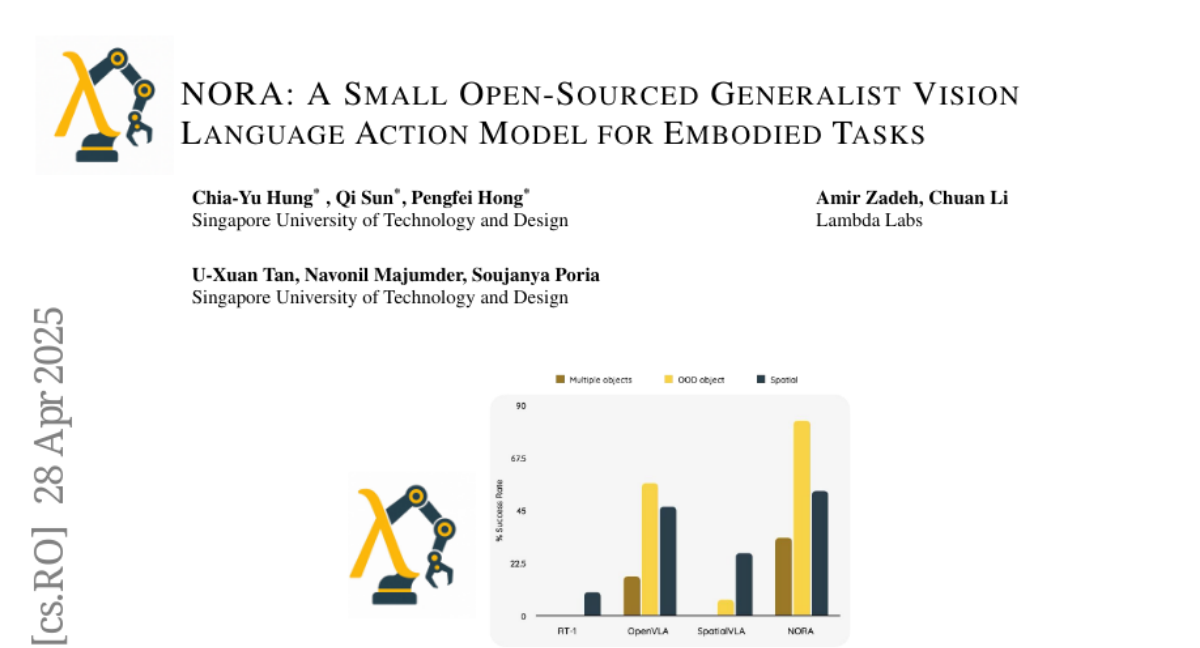

The researchers created NORA, which is a much smaller model with only 3 billion parameters, but it is still able to reason about what it sees and hears and take the right actions. They built it using a system called Qwen-2.5-VL-3B, and showed that it can even beat bigger models in some tasks, all while being faster and easier to run.

Why it matters?

This matters because it means more people and companies can use advanced AI for things like robotics, smart cameras, or interactive assistants without needing expensive hardware, making these technologies more accessible and practical in everyday life.

Abstract

NORA is a 3B-parameter VLA model using Qwen-2.5-VL-3B for efficient visual reasoning and action grounding, outperforming larger models with reduced computational overhead.