NOVER: Incentive Training for Language Models via Verifier-Free Reinforcement Learning

Wei Liu, Siya Qi, Xinyu Wang, Chen Qian, Yali Du, Yulan He

2025-05-26

Summary

This paper talks about NOVER, a new way to train language models using reinforcement learning that doesn't need any outside systems to check if the answers are good or not.

What's the problem?

The problem is that most reinforcement learning methods for improving language models rely on external verifiers, which are extra systems that judge whether the model's responses are correct. These verifiers can be slow, expensive, or sometimes unreliable, making the whole process less efficient.

What's the solution?

The researchers developed NOVER, a framework that lets the language model learn and improve its answers on its own, without needing an external verifier. This makes the training process simpler and faster, while still boosting the model's performance on different text-based tasks.

Why it matters?

This is important because it makes it easier and cheaper to build better language models, which can then be used for things like writing, answering questions, and helping people with all sorts of tasks.

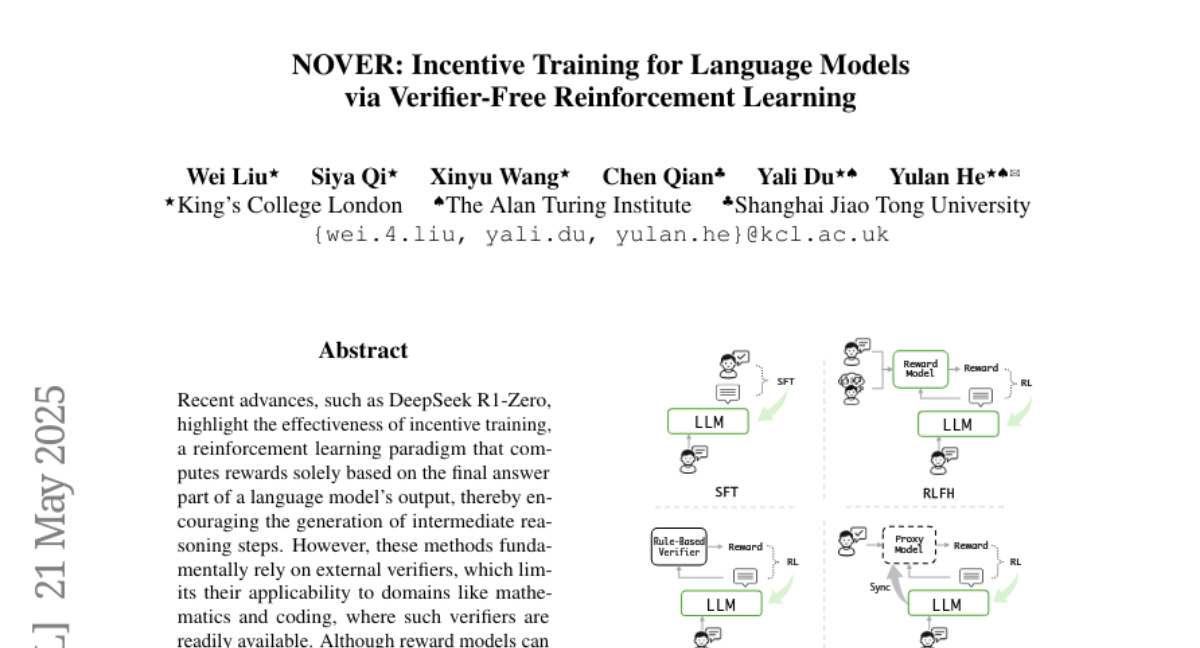

Abstract

NOVER, a reinforcement learning framework that eliminates the need for external verifiers, enhances language model performance across text-to-text tasks.