NuiScene: Exploring Efficient Generation of Unbounded Outdoor Scenes

Han-Hung Lee, Qinghong Han, Angel X. Chang

2025-03-21

Summary

This paper explores an efficient way to generate large outdoor scenes, ranging from castles to cityscapes.

What's the problem?

Most prior work focuses on indoor scenes. Outdoor scenes are harder to generate because scene heights vary widely and the method has to produce large landscapes quickly.

What's the solution?

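The researchers encode chunks of a scene as uniform vector sets, which compress better and perform better than the spatially structured latents used in prior methods. They also train an explicit outpainting model that extends a scene outward for unbounded generation. Compared with earlier resampling-based inpainting, this improves coherence and avoids extra diffusion steps. For training, they curate NuiScene43, a small but high-quality set of scenes.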

Why does it matter?

This work matters because it could lead to faster and more efficient ways to create realistic outdoor environments for video games, movies, and virtual reality.

Abstract

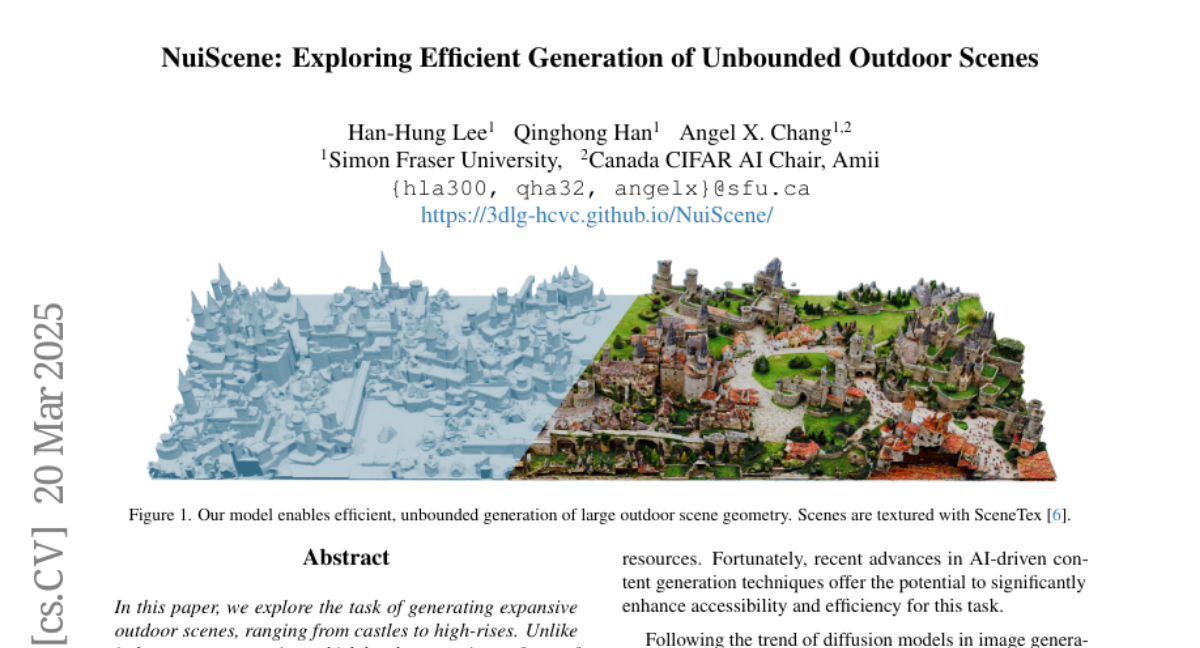

In this paper, we explore the task of generating expansive outdoor scenes, ranging from castles to high-rises. Unlike indoor scene generation, which has been a primary focus of prior work, outdoor scene generation presents unique challenges, including wide variations in scene heights and the need for a method capable of rapidly producing large landscapes. To address this, we propose an efficient approach that encodes scene chunks as uniform vector sets, offering better compression and performance than the spatially structured latents used in prior methods. Furthermore, we train an explicit outpainting model for unbounded generation, which improves coherence compared to prior resampling-based inpainting schemes while also speeding up generation by eliminating extra diffusion steps. To facilitate this task, we curate NuiScene43, a small but high-quality set of scenes, preprocessed for joint training. Notably, when trained on scenes of varying styles, our model can blend different environments, such as rural houses and city skyscrapers, within the same scene, highlighting the potential of our curation process to leverage heterogeneous scenes for joint training.
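
As a rough illustration of the chunk-by-chunk outpainting idea described in the abstract, the sketch below grows a grid of chunk latents in raster order, conditioning each new chunk on neighbors that already exist. It is not the authors' implementation: `toy_outpaint`, `generate_scene`, `NUM_VECTORS`, `VECTOR_DIM`, the choice of neighbors, and the blending weights are all hypothetical, and a simple noise-plus-average rule stands in for the trained diffusion model.

```python
import random

# Hypothetical sizes: each chunk latent is a small set of vectors.
NUM_VECTORS = 4
VECTOR_DIM = 3


def toy_outpaint(neighbors, rng):
    """Stand-in for a trained outpainting model (assumption, not the paper's model).

    Returns a new chunk latent as a list of NUM_VECTORS vectors. If neighbors
    exist, the result is pulled toward their element-wise average so that
    adjacent chunks stay similar.
    """
    noise = [[rng.gauss(0.0, 1.0) for _ in range(VECTOR_DIM)]
             for _ in range(NUM_VECTORS)]
    if not neighbors:
        return noise  # first chunk: unconditional sample
    chunk = []
    for i in range(NUM_VECTORS):
        row = []
        for j in range(VECTOR_DIM):
            context = sum(n[i][j] for n in neighbors) / len(neighbors)
            row.append(0.8 * context + 0.2 * noise[i][j])
        chunk.append(row)
    return chunk


def generate_scene(rows, cols, seed=0):
    """Fill a rows x cols grid of chunk latents in raster order."""
    rng = random.Random(seed)
    grid = {}
    for r in range(rows):
        for c in range(cols):
            # Condition on the chunks above, to the left, and diagonally
            # above-left, when they have already been generated.
            candidates = ((r - 1, c), (r, c - 1), (r - 1, c - 1))
            neighbors = [grid[k] for k in candidates if k in grid]
            grid[(r, c)] = toy_outpaint(neighbors, rng)
    return grid


scene = generate_scene(3, 4)
print(len(scene), "chunks generated")
```

Because each chunk depends only on chunks generated earlier, the grid can be extended with more rows or columns without regenerating existing chunks, which is the property that makes this style of generation unbounded.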