OLA-VLM: Elevating Visual Perception in Multimodal LLMs with Auxiliary Embedding Distillation

Jitesh Jain, Zhengyuan Yang, Humphrey Shi, Jianfeng Gao, Jianwei Yang

2024-12-13

Summary

This paper talks about OLA-VLM, a new method designed to improve how multimodal large language models (MLLMs) understand visual information by optimizing their internal representations using visual knowledge.

What's the problem?

Current methods for training MLLMs mainly focus on using natural language to guide learning, which isn't enough for improving their ability to understand images. This means these models might not perform well when it comes to tasks that require strong visual perception, like recognizing objects or understanding scenes.

What's the solution?

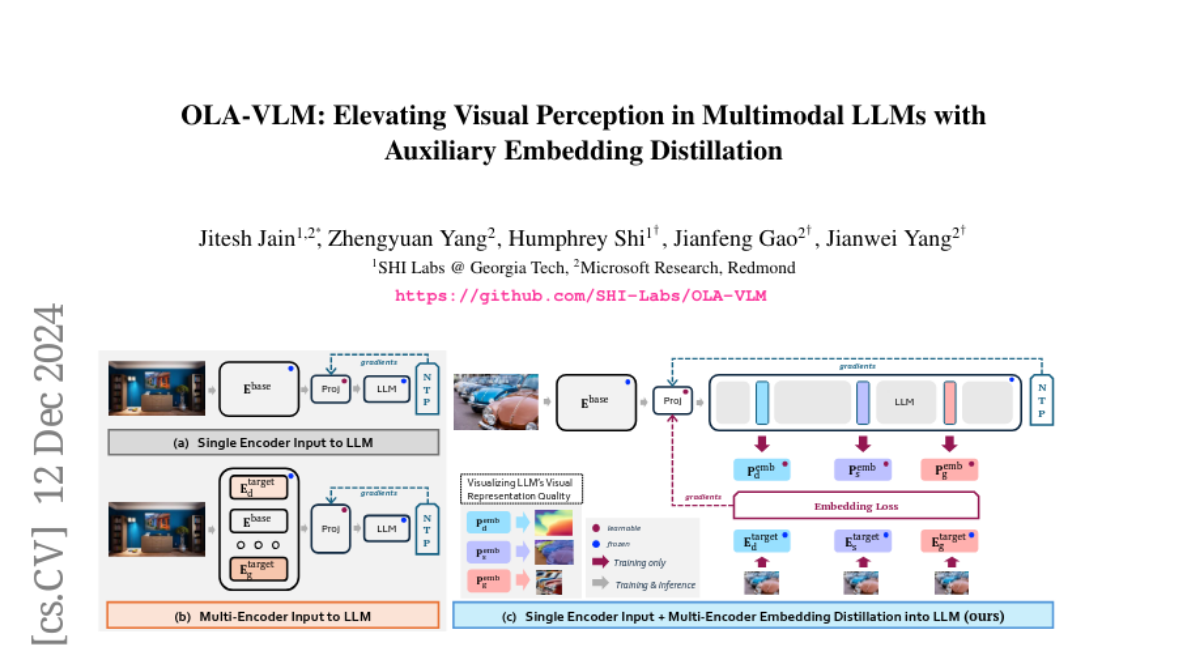

OLA-VLM addresses this issue by introducing a technique called auxiliary embedding distillation. This involves teaching the model to improve its internal representations by incorporating knowledge from visual data. The authors formulate a training objective that combines predicting visual features with generating text, allowing the model to learn better from both types of information. They found that this approach leads to higher quality visual understanding and improved performance on various tasks compared to traditional methods.

Why it matters?

This research is important because it enhances the capabilities of AI models in understanding and processing visual information. By improving how these models learn from images, OLA-VLM can lead to better performance in real-world applications such as image recognition, automated reasoning, and interactive AI systems, making them more effective tools in various fields.

Abstract

The standard practice for developing contemporary MLLMs is to feed features from vision encoder(s) into the LLM and train with natural language supervision. In this work, we posit an overlooked opportunity to optimize the intermediate LLM representations through a vision perspective (objective), i.e., solely natural language supervision is sub-optimal for the MLLM's visual understanding ability. To that end, we propose OLA-VLM, the first approach distilling knowledge into the LLM's hidden representations from a set of target visual representations. Firstly, we formulate the objective during the pretraining stage in MLLMs as a coupled optimization of predictive visual embedding and next text-token prediction. Secondly, we investigate MLLMs trained solely with natural language supervision and identify a positive correlation between the quality of visual representations within these models and their downstream performance. Moreover, upon probing our OLA-VLM, we observe improved representation quality owing to the embedding optimization. Thirdly, we demonstrate that our OLA-VLM outperforms the single and multi-encoder baselines, proving our approach's superiority over explicitly feeding the corresponding features to the LLM. Particularly, OLA-VLM boosts performance by an average margin of up to 2.5% on various benchmarks, with a notable improvement of 8.7% on the Depth task in CV-Bench. Our code is open-sourced at https://github.com/SHI-Labs/OLA-VLM .